Generative diffusion models like Stable Diffusion, Flux, and video models such as Hunyuan rely on knowledge acquired during a single, resource-intensive training session using a fixed dataset. Any concepts introduced after this training (referred to as the knowledge cut-off) are absent from the model unless supplemented through fine-tuning or external adaptation techniques like Low Rank Adaptation (LoRA).

It would therefore be ideal if a generative system that outputs images or videos could reach out to online sources and bring them into the generation process as needed. In this way, for instance, a diffusion model that knows nothing about the very latest Apple or Tesla release could still produce images containing these new products.

In regard to language models, most of us are familiar with systems such as Perplexity, NotebookLM and ChatGPT-4o, which can incorporate novel external information in a Retrieval Augmented Generation (RAG) model.

RAG processes make ChatGPT 4o’s responses more relevant. Source: https://chatgpt.com/

However, this is an uncommon facility when it comes to generating images, and ChatGPT will confess its own limitations in this regard:

ChatGPT 4o has made a guess about the visualization of a brand new watch release, based on the general line and on descriptions it has interpreted; but it cannot ‘absorb’ and integrate new images into a DALL-E-based generation.

Incorporating externally retrieved data into a generated image is challenging because the incoming image must first be broken down into tokens and embeddings, which are then mapped to the model’s nearest trained domain knowledge of the subject.

While this process works effectively for post-training tools like ControlNet, such manipulations remain largely superficial, essentially funneling the retrieved image through a rendering pipeline without deeply integrating it into the model’s internal representation.

As a result, the model lacks the ability to generate novel perspectives in the way that neural rendering systems like NeRF can, which construct scenes with true spatial and structural understanding.

Mature Logic

A similar limitation applies to RAG-based queries in Large Language Models (LLMs), such as Perplexity. When a model of this type processes externally retrieved data, it functions much like an adult drawing on a lifetime of knowledge to infer probabilities about a topic.

However, just as a person cannot retroactively integrate new information into the cognitive framework that shaped their fundamental worldview (at a time when their biases and preconceptions were still forming), an LLM cannot seamlessly merge new knowledge into its pre-trained structure.

Instead, it can only ‘impact’ or juxtapose the new data against its existing internalized knowledge, using learned principles to analyze and conjecture rather than to synthesize at the foundational level.

This shortfall in equivalency between juxtaposed and internalized generation is likely to be more evident in a generated image than in a language-based generation: the deeper network connections and increased creativity of ‘native’ (rather than RAG-based) generation have been established in various studies.

Hidden Dangers of RAG-Capable Image Generation

Even if it were technically feasible to seamlessly integrate retrieved internet images into newly synthesized ones in a RAG-style manner, safety-related limitations would present an additional challenge.

Many datasets used for training generative models have been curated to minimize the presence of explicit, racist, or violent content, among other sensitive categories. However, this process is imperfect, and residual associations can persist. To mitigate this, systems like DALL·E and Adobe Firefly rely on secondary filtering mechanisms that screen both input prompts and generated outputs for prohibited content.

Even so, a simple NSFW filter (one that primarily blocks overtly explicit content) would be insufficient for evaluating the acceptability of retrieved RAG-based data. Such content could still be offensive or harmful in ways that fall outside the model’s predefined moderation parameters, potentially introducing material that the AI lacks the contextual awareness to properly assess.

The recent discovery of a vulnerability in DeepSeek, the Chinese-developed model designed to suppress discussions of banned political content, has highlighted how alternative input pathways can be exploited to bypass a model’s ethical safeguards; arguably, this also applies to arbitrary novel data retrieved from the internet, when it is intended to be incorporated into a new image generation.

RAG for Image Generation

Despite these challenges and thorny political aspects, a number of projects have emerged that attempt to use RAG-based methods to incorporate novel data into visual generations.

ReDi

The 2023 Retrieval-based Diffusion (ReDi) project is a learning-free framework that speeds up diffusion model inference by retrieving similar trajectories from a precomputed knowledge base.

Values from a dataset can be ‘borrowed’ for a new generation in ReDi. Source: https://arxiv.org/pdf/2302.02285

In the context of diffusion models, a trajectory is the step-by-step path that the model takes to generate an image from pure noise. Normally, this process happens gradually over many steps, with each step refining the image a little more.

ReDi speeds this up by skipping many of those steps. Instead of calculating every single step, it retrieves a similar past trajectory from a database and jumps ahead to a later point in the process. This reduces the number of calculations needed, making diffusion-based image generation much faster, while still keeping the quality high.

ReDi does not modify the diffusion model’s weights, but instead uses the knowledge base to skip intermediate steps, thereby reducing the number of function estimations needed for sampling.
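The skip-ahead idea can be sketched in a few lines of Python. This is a toy illustration rather than ReDi's actual code: the `toy_denoise_step` function stands in for a real denoiser call, and the names `build_knowledge_base`, `sample_with_retrieval`, `key_step` and `value_step` are hypothetical, chosen only for this example.

```python
import math


def toy_denoise_step(x, target):
    """Stand-in for one denoiser call: move the sample 10% closer to a target."""
    return [xi + 0.1 * (ti - xi) for xi, ti in zip(x, target)]


def build_knowledge_base(starts, target, key_step, value_step):
    """Precompute trajectories, storing the state at key_step and at value_step."""
    keys, values = [], []
    for x in starts:
        for step in range(1, value_step + 1):
            x = toy_denoise_step(x, target)
            if step == key_step:
                keys.append(x)
        values.append(x)
    return keys, values


def sample_with_retrieval(x, target, keys, values, key_step, value_step, total_steps):
    """Run the early steps, jump ahead via retrieval, then finish the rest."""
    calls = 0
    for _ in range(key_step):
        x = toy_denoise_step(x, target)
        calls += 1
    # Find the stored trajectory whose early state is closest to ours, and
    # adopt its later state instead of computing the skipped steps.
    best = min(range(len(keys)), key=lambda i: math.dist(x, keys[i]))
    x = values[best]
    for _ in range(total_steps - value_step):
        x = toy_denoise_step(x, target)
        calls += 1
    return x, calls


target = [1.0, -1.0]
keys, values = build_knowledge_base(
    starts=[[0.0, 0.0], [3.0, 3.0], [-2.0, 1.0]],
    target=target, key_step=2, value_step=8,
)
sample, calls = sample_with_retrieval(
    [2.9, 3.1], target, keys, values, key_step=2, value_step=8, total_steps=10
)
print(calls)  # 4 denoiser calls instead of 10
```

The model itself is untouched in this sketch; only the number of times it is called changes, which is the property described above.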

Of course, this is not the same as incorporating specific images at will into a generation request; but it does relate to similar types of generation.

Released in early 2023, not long after latent diffusion models captured the public imagination, ReDi appears to be among the earliest diffusion-based approaches to lean on a RAG methodology.

It should be mentioned that in 2021 Facebook Research released Instance-Conditioned GAN, which sought to condition GAN images on novel image inputs. However, this kind of projection into the latent space is extremely common in the literature, both for GANs and diffusion models; the challenge is to make such a process training-free and functional in real time, as LLM-focused RAG methods are.

RDM

Another early foray into RAG-augmented image generation is Retrieval-Augmented Diffusion Models (RDM), which introduces a semi-parametric approach to generative image synthesis. Whereas traditional diffusion models store all learned visual knowledge within their neural network parameters, RDM relies on an external image database:

Retrieved nearest neighbors in an illustrative pseudo-query in RDM*.

During training, the model retrieves nearest neighbors (visually or semantically similar images) from the external database to guide the generation process. This allows the model to condition its outputs on real-world visual instances.

The retrieval process is powered by CLIP embeddings, designed to force the retrieved images to share meaningful similarities with the query, and also to provide novel information to improve generation.

This reduces reliance on parameters, facilitating smaller models that achieve competitive results without the need for extensive training datasets.

The RDM approach supports post-hoc modifications: researchers can swap out the database at inference time, allowing for zero-shot adaptation to new styles, domains, or even entirely different tasks such as stylization or class-conditional synthesis.
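Nearest-neighbor retrieval over embeddings, and the effect of swapping the database, can be illustrated with a minimal Python sketch. The three-dimensional vectors below are made-up stand-ins for CLIP embeddings, and `retrieve_neighbors`, `photo_db` and `sketch_db` are hypothetical names for illustration; this is not the RDM codebase.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve_neighbors(query_emb, database, k=2):
    """Return the names of the k database entries most similar to the query."""
    ranked = sorted(
        database,
        key=lambda name: cosine(query_emb, database[name]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical toy embeddings; a real system would use CLIP vectors.
photo_db = {
    "cat_photo": [0.9, 0.1, 0.0],
    "dog_photo": [0.1, 0.9, 0.0],
    "car_photo": [0.0, 0.1, 0.9],
}
sketch_db = {
    "cat_sketch": [0.8, 0.2, 0.1],
    "dog_sketch": [0.2, 0.8, 0.1],
}

query = [1.0, 0.0, 0.0]  # stands in for the embedding of a "cat" query
print(retrieve_neighbors(query, photo_db, k=1))   # ['cat_photo']
print(retrieve_neighbors(query, sketch_db, k=1))  # ['cat_sketch']
```

The same query yields different conditioning material depending on which database is plugged in, with no change to the retrieval function or to any model weights.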

In the lower rows, we see the nearest neighbors drawn into the diffusion process in RDM*.

A key advantage of RDM is its ability to improve image generation without retraining the model. By simply altering the retrieval database, the model can generalize to new concepts it was never explicitly trained on. This is particularly useful for applications where domain shifts occur, such as generating medical imagery based on evolving datasets, or adapting text-to-image models for creative applications.

On the negative side, retrieval-based methods of this kind depend on the quality and relevance of the external database, which makes data curation an important factor in achieving high-quality generations; and this approach remains far from an image synthesis equivalent of the kind of RAG-based interactions typical in commercial LLMs.

ReMoDiffuse

ReMoDiffuse is a retrieval-augmented motion diffusion model designed for 3D human motion generation. Unlike traditional motion generation models that rely purely on learned representations, ReMoDiffuse retrieves relevant motion samples from a large motion dataset and integrates them into the denoising process, in a schema similar to RDM (see above).

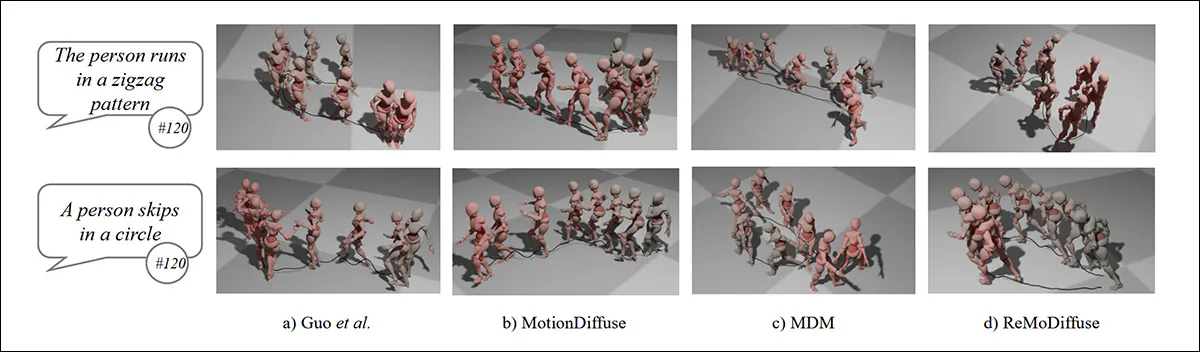

Comparison of RAG-augmented ReMoDiffuse (right-most) to prior methods. Source: https://arxiv.org/pdf/2304.01116

This allows the model to generate motion sequences designed to be more natural and diverse, as well as semantically faithful to the user’s text prompts.

ReMoDiffuse uses an innovative hybrid retrieval mechanism, which selects motion sequences based on both semantic and kinematic similarities, with the intention of ensuring that the retrieved motions are not just thematically relevant but also physically plausible when integrated into the new generation.
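One way to picture a hybrid score of this kind is as a semantic similarity that is discounted when the candidate is a poor kinematic match. The Python sketch below is an assumption made for illustration, not the project's code: it approximates the kinematic term by how closely a candidate's length (in frames) matches the requested length, and the names `hybrid_score`, `retrieve_motions` and the weight `lam` are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def hybrid_score(prompt_emb, target_len, cand_emb, cand_len, lam=1.0):
    """Semantic similarity, discounted by the relative gap in motion length."""
    semantic = cosine(prompt_emb, cand_emb)
    length_gap = abs(cand_len - target_len) / max(cand_len, target_len)
    return semantic * math.exp(-lam * length_gap)


def retrieve_motions(prompt_emb, target_len, candidates, k=1):
    """Rank candidate motions by hybrid score and return the top-k names."""
    ranked = sorted(
        candidates,
        key=lambda c: hybrid_score(prompt_emb, target_len, c["emb"], c["length"]),
        reverse=True,
    )
    return [c["name"] for c in ranked[:k]]


# Made-up text embeddings and frame counts for three stored motions.
candidates = [
    {"name": "walk_long", "emb": [1.0, 0.0], "length": 200},
    {"name": "walk_short", "emb": [1.0, 0.0], "length": 60},
    {"name": "jump_short", "emb": [0.0, 1.0], "length": 60},
]
print(retrieve_motions([1.0, 0.0], 64, candidates))  # ['walk_short']
```

Both ‘walk’ candidates match the prompt equally well semantically, so the length term decides between them, while the ‘jump’ candidate is rejected on semantic grounds despite its suitable length.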

The model then refines these retrieved samples using a Semantics-Modulated Transformer, which selectively incorporates knowledge from the retrieved motions while maintaining the characteristic qualities of the generated sequence:

Schema for ReMoDiffuse’s pipeline.

The project’s Condition Mixture technique enhances the model’s ability to generalize across different prompts and retrieval conditions, balancing retrieved motion samples with text prompts during generation, and adjusting how much weight each source gets at each step.

This can help prevent unrealistic or repetitive outputs, even for rare prompts. It also addresses the scale sensitivity issue that often arises in the classifier-free guidance techniques commonly used in diffusion models.
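The contrast between the two approaches can be sketched as follows. Standard classifier-free guidance combines an unconditional and a conditional prediction using a single hand-picked scale; the mixture shown here instead blends four predictions (no condition, text only, retrieval only, both) using normalized weights. The four-way split and the softmax weighting are assumptions made for this illustration, and `condition_mixture` is a hypothetical name rather than the project's implementation.

```python
import math


def classifier_free_guidance(uncond, cond, scale):
    """Standard guidance: push the prediction away from the unconditional one."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


def softmax(logits):
    """Turn arbitrary scores into positive weights that sum to one."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def condition_mixture(predictions, logits):
    """Blend several denoiser predictions with softmax-normalized weights."""
    weights = softmax(logits)
    dim = len(predictions[0])
    return [
        sum(w * pred[i] for w, pred in zip(weights, predictions))
        for i in range(dim)
    ]


# Toy predictions for: no condition, text only, retrieval only, both.
predictions = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]

# Equal logits give a plain average of the four predictions.
print(condition_mixture(predictions, [0.0, 0.0, 0.0, 0.0]))  # [1.5, 1.5]
```

Because the weights are normalized, the blended output stays within the range of the individual predictions, whereas a large guidance scale can push the result well outside it. If the weights are learned per step, no single scale has to be tuned by hand.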

RA-CM3

Stanford’s 2023 paper Retrieval-Augmented Multimodal Language Modeling (RA-CM3) allows the system to access real-world information at inference time:

Stanford’s Retrieval-Augmented Multimodal Language Modeling (RA-CM3) model uses internet-retrieved images to augment the generation process, but remains a prototype without public access. Source: https://cs.stanford.edu/~myasu/information/RACM3_slides.pdf

RA-CM3 integrates retrieved text and images into the generation pipeline, enhancing both text-to-image and image-to-text synthesis. Using CLIP for retrieval and a Transformer as the generator, the model refers to pertinent multimodal documents before composing an output.

Benchmarks on MS-COCO show notable improvements over DALL-E and similar systems, achieving a 12-point Fréchet Inception Distance (FID) reduction, with far lower computational cost.

However, as with other retrieval-augmented approaches, RA-CM3 does not seamlessly internalize its retrieved knowledge. Rather, it superimposes new data against its pre-trained network, much like an LLM augmenting responses with search results. While this method can improve factual accuracy, it does not replace the need for training updates in domains where deep synthesis is required.

Furthermore, a practical implementation of this system does not appear to have been released, even to an API-based platform.

RealRAG

A new release from China, and the one that has prompted this look at RAG-augmented generative image systems, is called Retrieval-Augmented Realistic Image Generation (RealRAG).

External images drawn into RealRAG (lower middle). Source: https://arxiv.org/pdf/2502.00848

RealRAG retrieves actual images of relevant objects from a database curated from publicly available datasets such as ImageNet, Stanford Cars, Stanford Dogs, and Oxford Flowers. It then integrates the retrieved images into the generation process, addressing knowledge gaps in the model.

A key component of RealRAG is self-reflective contrastive learning, which trains a retrieval model to find informative reference images, rather than just selecting visually similar ones.

The authors state:

‘Our key insight is to train a retriever that retrieves images staying off the generation space of the generator, yet closing to the representation of text prompts.

‘To this [end], we first generate images from the given text prompts and then utilize the generated images as queries to retrieve the most relevant images in the real-object-based database. These most relevant images are utilized as reflective negatives.’

This approach ensures that the retrieved images contribute missing knowledge to the generation process, rather than reinforcing existing biases in the model.
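The idea in the quoted passage can be sketched with toy vectors in Python. This is an illustrative reading rather than the authors' implementation: the two-dimensional embeddings are invented, the positive example is assumed to be a real image that matches the prompt, and the loss is a generic two-way contrastive (InfoNCE-style) objective. The names `pick_reflective_negative` and `contrastive_loss` are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def pick_reflective_negative(generated_emb, real_db):
    """Real image closest to what the generator can already produce."""
    return max(real_db, key=lambda name: cosine(generated_emb, real_db[name]))


def contrastive_loss(text_emb, positive_emb, negative_emb, temperature=0.1):
    """Low when the text is closer to the positive than to the negative."""
    pos = math.exp(cosine(text_emb, positive_emb) / temperature)
    neg = math.exp(cosine(text_emb, negative_emb) / temperature)
    return -math.log(pos / (pos + neg))


# Invented embeddings: the generator's output resembles the 'typical' car.
real_db = {"typical_car": [0.9, 0.1], "new_model_car": [0.5, 0.8]}
generated_emb = [1.0, 0.0]
text_emb = [0.6, 0.7]

negative = pick_reflective_negative(generated_emb, real_db)
print(negative)  # typical_car

loss = contrastive_loss(text_emb, real_db["new_model_car"], real_db[negative])
```

Training a retriever to reduce such a loss would push it away from images the generator can already reproduce and towards real images that match the prompt but lie outside the generator's existing repertoire.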

Left-most, the retrieved reference image; center, without RAG; rightmost, with the use of the retrieved image.

However, the reliance on retrieval quality and database coverage means that its effectiveness can vary depending on the availability of high-quality references. If a relevant image does not exist in the dataset, the model may still struggle with unfamiliar concepts.

RealRAG is a very modular architecture, offering compatibility with multiple other generative architectures, including U-Net-based, DiT-based, and autoregressive models.

In general, the retrieving and processing of external images adds computational overhead, and the system’s performance depends on how well the retrieval mechanism generalizes across different tasks and datasets.

Conclusion

This is a representative rather than exhaustive overview of image-retrieving multimodal generative systems. Some systems of this type use retrieval solely to improve vision understanding or dataset curation, among other diverse motives, rather than seeking to generate images. One example is the Internet Explorer project.

Many of the other RAG-integrated projects in the literature remain unreleased. Prototypes, with only published research, include Re-Imagen, which, despite its provenance from Google, can only access images from a local custom database.

Also, in November 2024, Baidu announced Image-Based Retrieval-Augmented Generation (iRAG), a new platform that uses retrieved images ‘from a database’. Though iRAG is reportedly available on the Ernie platform, there seem to be no further details about this retrieval process, which appears to rely on a local database (i.e., local to the service and not directly accessible to the user).

Further, the 2024 paper Unified Text-to-Image Generation and Retrieval offers yet another RAG-based method of using external images to augment results at generation time; again, from a local database rather than from ad hoc internet sources.

Excitement around RAG-based augmentation in image generation is likely to focus on systems that can incorporate internet-sourced or user-uploaded images directly into the generative process, and which allow users to participate in the choices or sources of images.

However, this is a significant challenge for at least two reasons: firstly, because the effectiveness of such systems usually depends on deeply integrated relationships formed during a resource-intensive training process; and secondly, because concerns over safety, legality, and copyright restrictions, as noted earlier, make this an unlikely feature for an API-driven web service, and for commercial deployment in general.

* Source: https://proceedings.neurips.cc/paper_files/paper/2022/file/62868cc2fc1eb5cdf321d05b4b88510c-Paper-Conference.pdf

First published Tuesday, February 4, 2025