OKRs (Objectives and Key Results) have become a popular goal-setting framework in tech and beyond. They were designed to bridge the gap between strategy and execution, promising focus, alignment, and accountability. But too often, they’ve turned into something else entirely: a quarterly ritual of checklists, dashboards, and performance metrics that smother the original intent.

I’ve seen it happen in organizations big and small. Objectives are written down, but nothing really changes. Teams comply at best, or disengage entirely.

This article is my response. It’s about the teams that break that pattern: those who use OKRs not as a management tool, but as a way to own outcomes, align with strategy, and deliver real results in the messy, wonderful reality of building products and serving customers.

Common Pitfalls of OKRs in Practice

OKRs are everywhere. From startups to large enterprises, they show up in kickoff meetings, dashboards, and strategy documents.

But in many organizations I’ve worked with, OKRs rarely change how teams actually work, or what they deliver.

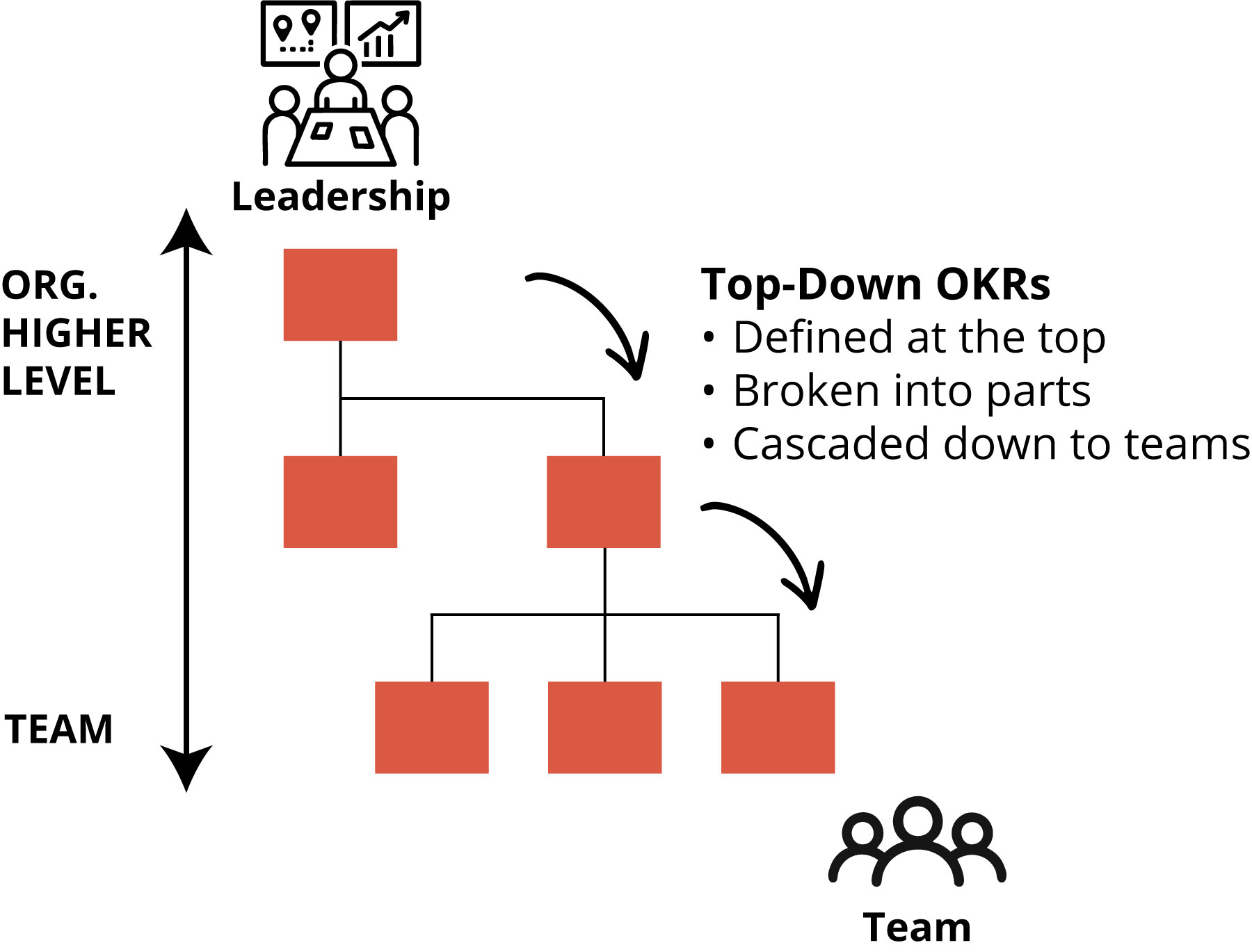

Too often, objectives are written down but fail to drive action. Why? Because they’re imposed from above. Leadership defines objectives and key results, hands them down, and expects teams to execute.

Sometimes these OKRs are nothing more than KPIs with new labels. Other times, they’re vague slogans, disconnected from real work. Either way, the result is the same: teams don’t own the objectives. Without ownership, they comply at best, or disengage entirely. True commitment is rare.

When OKRs are handed down instead of co-created, they lose their power. Rather than driving focus and adaptation, they become static artifacts: another checkbox in a quarterly ritual.

This isn’t the only way it can go. I’ve worked with teams that broke the pattern. Not by waiting for someone to hand them better objectives, but by stepping up, defining their own, and owning the outcomes.

These teams didn’t treat OKRs like checklists or dashboards. They made them part of how they think, plan, and deliver every day.

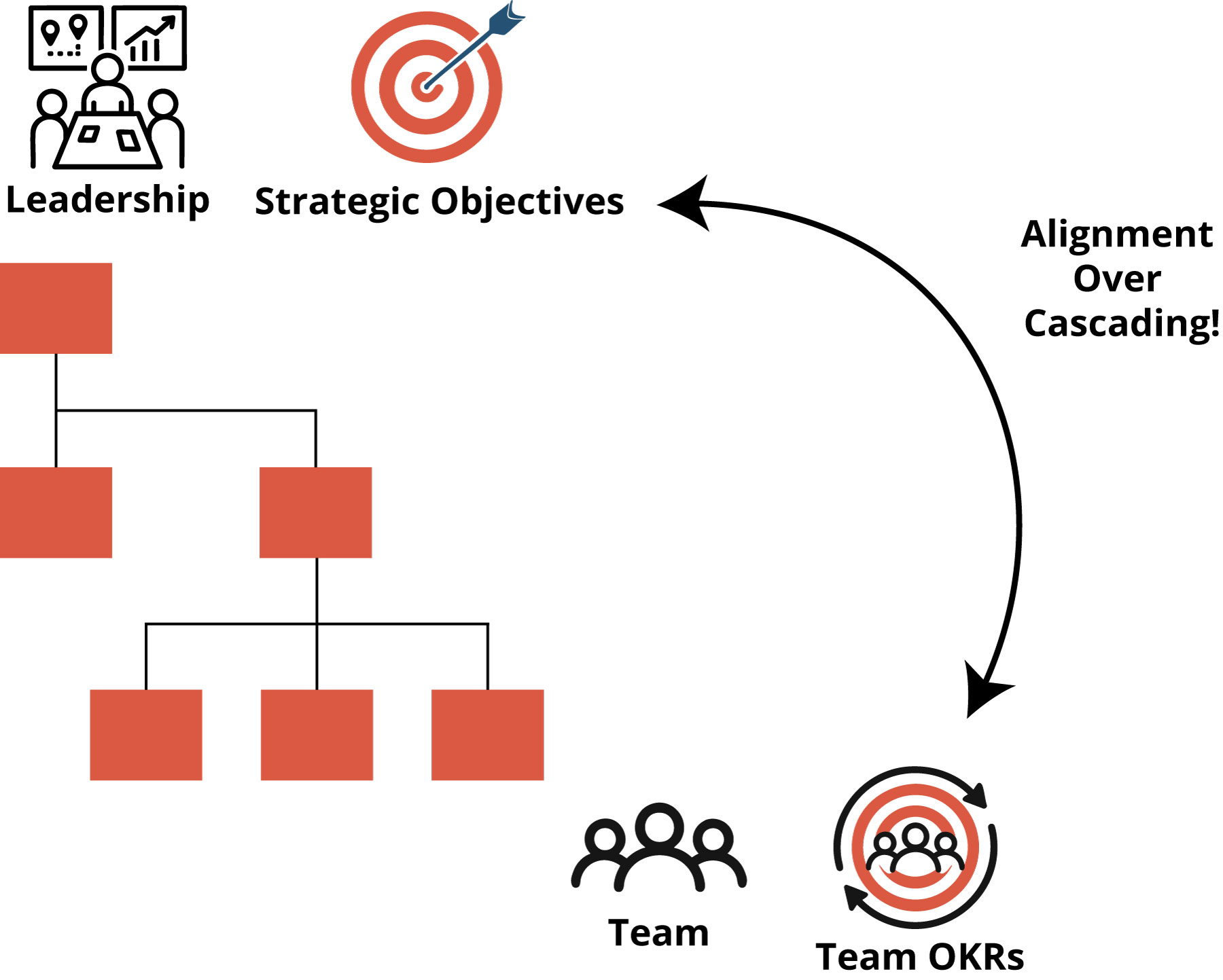

Bridging the Gap: Strategy and Team OKRs

Team autonomy doesn’t have to mean isolation, and strategic alignment doesn’t require command and control. Yet in many organizations, these two ideas often clash.

Leadership sets strategic priorities and expects teams to “align.” Teams, meanwhile, define OKRs they believe matter, but these don’t always map to the bigger picture. The result is misalignment, frustration, and wasted energy.

Great teams and great leaders bridge that gap by meeting in the middle. Strategy provides direction; Team OKRs create commitment.

This isn’t a cascade. It’s a conversation.

In high-performing environments, leadership shares intent: the challenges to solve, the opportunities to seize, the metrics to move. Teams listen, reflect, and define what they’ll own. As one team might frame it:

“Based on what we know and can influence, here’s what we believe we can achieve, and how we’ll measure progress.”

Here, ownership isn’t assigned; it’s assumed. Team OKRs enable not just strategic compliance but strategic contribution.

What Makes Team OKRs Different

Team OKRs aren’t assigned, nor are they dropped into trackers by leadership. They’re assumed: created by the team, for the team.

This shift matters. It marks the difference between executing someone else’s priorities and committing to an outcome the team truly believes in.

With Team OKRs, the process looks different:

- The team defines the Objective, rooted in the strategic context. It’s not just a fancy slogan; it’s a clear and meaningful statement of what the team wants to achieve and why it matters.

- The team identifies Key Results: clear signals of progress that show real, measurable change. A Key Result often isn’t a KPI itself, but a movement in a KPI. It’s about direction and impact, not just numbers.

- The team commits to the outcome, not just to doing tasks. They take real ownership, stay flexible, and focus on what truly brings value.

Leaders still lead, but their role changes. Instead of dictating the how, they clarify the why. They share direction, invite dialogue, and support teams in building real ownership.

This isn’t chaos. It’s alignment through trust.

From Strategy to Team OKR

Team OKRs don’t exist in isolation. They emerge from context: shaped by vision, guided by strategy, and grounded in reality.

This layered model shows how intent flows into action:

- Vision sets the long-term direction.

- Strategy defines current priorities.

- Team OKRs clarify what each team will own.

- Backlog connects intent to concrete work.

Each layer supports the next. When vision is unclear, strategy struggles to focus on what matters most next. Without a clear strategy, Team OKRs lose alignment and purpose. And when Team OKRs are vague, backlogs fill with scattered tasks rather than deliberate steps toward meaningful outcomes.

But when these layers align, teams can confidently translate high-level intent into focused, meaningful action.

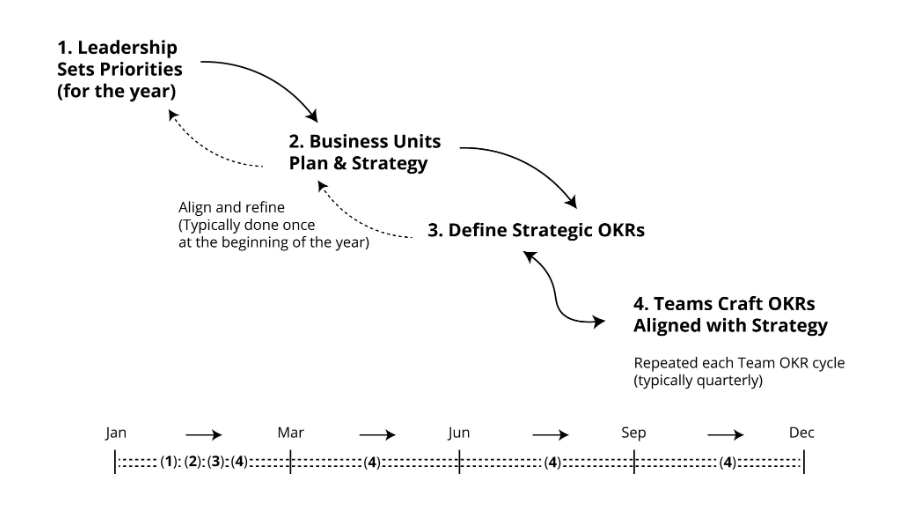

From Direction to Definition: Key Conversations

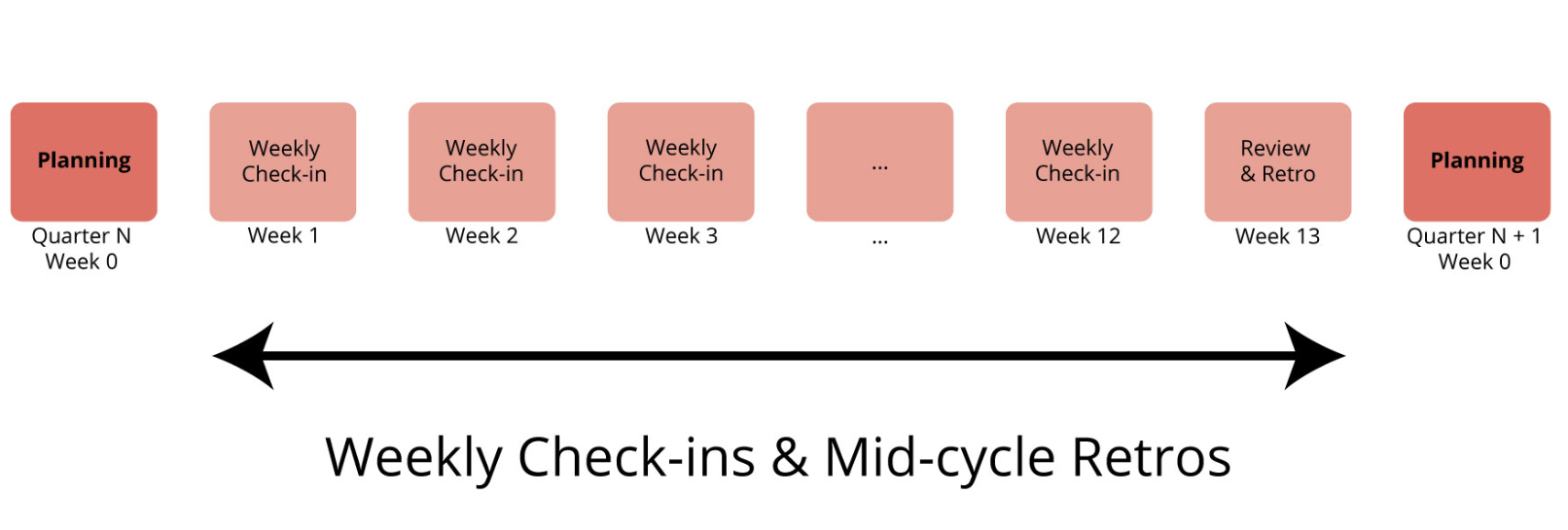

Alignment doesn’t happen in a single meeting; it evolves through a rhythm of structured conversations. This timeline illustrates how strategy becomes meaningful team action:

- Strategic Alignment Workshop: Leadership shares intent, not deliverables.

- Team OKR Planning Workshop: Teams reflect and define what they’ll pursue.

- From Objectives to Work: OKRs flow into backlog items and initiatives.

This isn’t a rigid cascade. It’s a rhythm of dialogue and iteration, building alignment without sacrificing autonomy.

A Real Example: From Strategy to Commitment

I’ve worked with many large organizations, and I get it: leadership needs structure, a steady rhythm, and alignment across business units. Strategic OKRs can be extremely powerful when used the right way.

Here’s how one large Brazilian financial institution created a simple yet effective way to connect strategy and execution.

Leadership Defines Company Priorities

At the start of the year, leadership set three bold priorities: simplify onboarding for new customers, expand into the small-business segment, and improve resilience in critical systems.

This wasn’t a wish list. Leaders deliberately focused on a few high-impact bets, creating space for business units and teams to take meaningful ownership.

Business Units Build Their Plans

The Digital Services Business Unit, responsible for the online banking platform, focused on priority #1: simplifying onboarding. They defined their Strategic OKR:

Objective: Delight new customers by transforming the first-week experience.

Key Results:

- Reduce first-week customer drop-off rate by 25%.

- Increase overall first-week NPS from 20 to 35.

- Lower average support call time for new users by 15%.

This Strategic OKR became a north star for multiple teams, offering direction without prescribing solutions.

Strategic OKRs Are Refined in Conversation

Strategic OKRs at both company and BU levels were refined through dialogue, not decree. Leaders challenged assumptions, clarified metrics, and aligned on where each BU could create the most impact.

Note that this Strategic OKR was later driven by multiple teams. Higher-level leadership, though they had access to all team OKRs, chose not to track them directly. Instead, they reviewed a monthly report focused on the BU’s Strategic OKR, a pragmatic approach for large organizations where top leaders can’t realistically track every team’s objectives.

Teams Define Their OKRs

When BU-level objectives reached teams, they arrived as context, not orders. BU leaders shared supporting data (user analytics, drop-off points, customer complaints), then stepped back.

The Discover Team, responsible for mobile app onboarding in the Digital Services BU, asked themselves: “What part of this can we own? What would success look like from our perspective?”

Their Team OKR:

Objective: Make the first week seamless and confidence-boosting for new users.

Key Results:

- Increase onboarding completion from 65% to 90%.

- Raise tutorial engagement from 15% to 50%.

- Reduce support tickets about account setup by 30%.

Over the quarter, the Discover Team redesigned onboarding flows, tested tutorials, and improved contextual help. Weekly check-ins and mid-cycle retrospectives kept them adaptive and accountable. By the end of the cycle, they had delivered measurable improvements in customer outcomes, directly supporting the BU’s Strategic OKR.
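One way to make "a movement in a KPI" concrete during check-ins is to express each Key Result as the share of the baseline-to-target distance covered so far. The sketch below is only an illustration of that arithmetic, not the tooling used in this case: the `KeyResult` class, its `progress` method, and the mid-quarter readings are hypothetical. Only the baselines and targets come from the team's Key Results above. The third Key Result is left out because the example gives no baseline ticket count for it.

```python
from dataclasses import dataclass


@dataclass
class KeyResult:
    """A Key Result expressed as a movement in a metric (baseline -> target)."""
    name: str
    baseline: float
    target: float

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target distance covered, clamped to [0, 1]."""
        span = self.target - self.baseline
        if span == 0:
            raise ValueError("target must differ from baseline")
        return max(0.0, min(1.0, (current - self.baseline) / span))


# Baselines and targets taken from the Team OKR in the example.
completion = KeyResult("Onboarding completion (%)", baseline=65, target=90)
engagement = KeyResult("Tutorial engagement (%)", baseline=15, target=50)

# Hypothetical mid-quarter readings, NOT figures from the case study.
for kr, current in ((completion, 80), (engagement, 29)):
    print(f"{kr.name}: {kr.progress(current):.0%} of the way to target")
```

Because progress is measured relative to the baseline, the same calculation also works for metrics that should go down (for example, a baseline of 100 and a target of 70).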

Note on Adaptation: This example draws from real patterns I’ve observed in large organizations. To respect confidentiality, details have been changed, but the essence of how Strategic OKRs and Team OKRs connect remains intact.

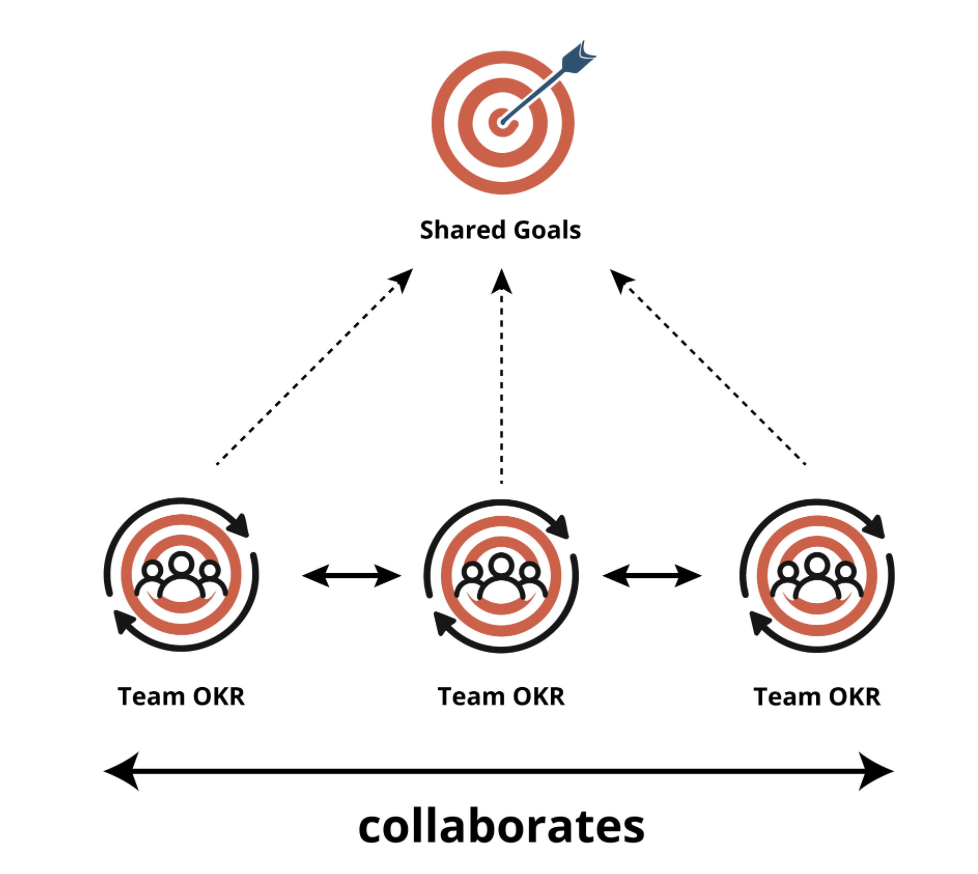

Align Up. Align Across: Building Strategic Alignment Without Losing Team Autonomy

When I talk about alignment in large organizations, I don’t just mean aligning up to leadership’s strategy. That’s only half the story. The other half, and often the trickier one, is aligning across peer teams. Both dimensions are essential for making Team OKRs work at scale.

This is what I call vertical and horizontal alignment.

- Vertical alignment connects a team’s OKRs to the organization’s strategic objectives (some people call this connecting tactical OKRs to strategic OKRs). It answers a critical question: “How does our work contribute to the bigger picture?”

- Horizontal alignment ensures that teams working in the same business unit, or across units, coordinate and collaborate effectively. It asks: “How do we support each other to reach shared outcomes?”

Think of a large business unit like a fleet of ships. Each team (or “ship”) has its own captain and crew, charting their course. But they’re not navigating alone. They’re moving together toward the same North Star. That’s the essence of horizontal alignment.

Each team chases its own Team OKR, tailored to its expertise and sphere of influence. But their efforts are interconnected, like gears in a machine. The magic happens in how they adjust to each other’s progress in real time, keeping the larger objective in sight.

This is alignment without rigidity. Teams still own their OKRs and have autonomy over how they contribute. But they aren’t working in silos; they’re navigating together.

So how do teams keep alignment alive without losing autonomy? This is where the Team OKR Cycle helps. It’s a simple rhythm that supports focus, collaboration, and adaptability.

The Team OKR Cycle

To help teams put this into practice, I recommend a lightweight, repeatable cycle. It keeps teams focused, aligned, and able to adapt as circumstances change.

The Team OKR Cycle revolves around three key moments:

- Team OKR Planning (typically quarterly): A moment for alignment. The team connects with leadership, understands the strategic context, and defines its OKRs, clarifying what they want to achieve and how they’ll measure progress.

- Team OKR Check-in (weekly): A lightweight sync led by the team. They review key results, discuss progress, identify blockers, and adjust course as needed, catching issues before they derail momentum.

- Team OKR Retrospective (mid-cycle and end): A reflection point where the team looks back not just at delivery, but at impact. These retrospectives help refine both intent and execution for future cycles.

This rhythm transforms OKRs from a one-time planning exercise into a living system: a continuous loop of alignment and adaptation.

Team OKR Planning Workshop

The Team OKR Planning Workshop happens at the start of each cycle. It’s when the team comes together to define its Objective and Key Results, aligning with their BU’s strategic direction.

This isn’t a top-down handoff; it’s a co-creation moment that sets direction and fosters ownership.

One facilitation technique I often use is the Time Machine activity:

“Please enter the Time Machine. Imagine it’s the end of the quarter. You’re happy with what the team has achieved. What happened?”

Each team member writes their imagined success story. These reflections surface themes and insights, which are then translated into measurable signals of progress. These signals become the Key Results.

When teams run this activity, OKRs shift from static targets to expressions of real intent and shared commitment.

Team OKR Check-ins

This is where many teams lose momentum, and where the best teams stand out.

A Team OKR Check-in is a short, recurring moment (for example, Fridays at 2 p.m.) where the team reflects together. It’s not a status report; it’s a conversation about progress and priorities.

Teams ask:

- Are we making meaningful progress?

- Are we measuring the right things?

- What’s working, and what’s getting in the way?

- Do we need to adjust course?

These questions transform OKRs from static artifacts into dynamic, living conversations.

I call check-ins the heartbeat of the OKR cycle. They keep the team aligned, not just on progress, but on confidence and energy.

Do Your Check-in with GRIP

To keep check-ins focused and actionable, I guide teams with a simple framework:

GRIP

- Goal confidence: How confident are we in achieving the Objective?

- Results progress: What’s the current status of each Key Result?

- Issues: What’s getting in the way?

- Plan forward: What’s next?

A quick GRIP check-in turns OKRs into active conversations: not just a review, but an opportunity to adjust course before issues escalate.

In many teams I’ve worked with, the GRIP check-in became a 15-minute weekly anchor. It created a shared language (“What’s our confidence this week?”) and helped teams see where they needed support or where to double down. Like a pilot scanning instruments mid-flight, GRIP gave them clarity to navigate forward.
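For teams that like to keep a lightweight record of their check-ins, the four GRIP prompts map naturally onto a small data structure. This is a hypothetical sketch, not part of the GRIP framework itself: the `GripCheckIn` class, the 1 to 10 confidence scale, the attention threshold, and the sample entries are all assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class GripCheckIn:
    """One weekly check-in, with one field per GRIP prompt."""
    goal_confidence: int                 # G: assumed 1-10 scale
    results_progress: dict[str, float]   # R: Key Result name -> progress in [0, 1]
    issues: list[str] = field(default_factory=list)        # I: what is in the way
    plan_forward: list[str] = field(default_factory=list)  # P: what happens next

    def needs_attention(self, threshold: int = 5) -> bool:
        """True when confidence is below the (assumed) threshold or issues are open."""
        return self.goal_confidence < threshold or bool(self.issues)


def confidence_trend(check_ins: list[GripCheckIn]) -> int:
    """Change in goal confidence between the last two check-ins (0 if fewer than two)."""
    if len(check_ins) < 2:
        return 0
    return check_ins[-1].goal_confidence - check_ins[-2].goal_confidence


# Hypothetical entries for two consecutive weeks.
week1 = GripCheckIn(goal_confidence=7, results_progress={"Onboarding completion": 0.2})
week2 = GripCheckIn(
    goal_confidence=4,
    results_progress={"Onboarding completion": 0.3},
    issues=["Analytics events missing on one onboarding screen"],
    plan_forward=["Fix event tracking before the next check-in"],
)

print(confidence_trend([week1, week2]), week2.needs_attention())
```

A falling trend or an open issue is a prompt for the conversation, not a verdict; the value of the check-in is still in the discussion the numbers trigger.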

Team OKR Retrospective

At the end of the cycle, the team doesn’t just score the OKR; they reflect on the journey:

- Did we achieve what we set out to do?

- What did we learn?

- What surprised us?

- What will we do differently next time?

This is where learning happens. The best way to support it is with a retrospective.

You’ll find dozens of effective formats at FunRetrospectives.com and in the book FunRetrospectives.

But don’t wait until the end to reflect. Mid-cycle retrospectives can be just as powerful, especially when the team feels stuck, misaligned, or unsure about progress. They offer a chance to regroup while there’s still time to course-correct.

Mid-cycle retrospectives aren’t mandatory, but they’re extremely helpful when the team senses misalignment, stalled progress, or shifting priorities. Some teams schedule them proactively at the midpoint of their OKR cycle; others use them as a flexible tool when they feel momentum is slipping or context changes unexpectedly.

One format I often use mid-cycle is Attractors and Detractors, a simple yet powerful activity for unpacking the systemic forces influencing the OKR so far. It highlights:

- Attractors: What pulled us towards the OKR?

- Detractors: What pushed us away from it?

This activity helps teams make sense of their work, clarifying what aligns with their OKR and what doesn’t. It sharpens focus and prioritization, especially for teams serious about achieving their objectives.

In one team I worked with, a mid-cycle retrospective using this format uncovered a new organizational initiative that was unintentionally diverting effort away from the team’s OKR. That insight helped them realign and regain focus, leading to meaningful impact by the end of the cycle.

What Sets Great Teams Apart

The difference isn’t in the process or the tool. It’s in the mindset.

Teams that own their OKRs don’t just align with strategy; they shape it. They don’t just deliver outputs; they deliver outcomes.

That’s what makes them stand out. And that’s what makes Team OKRs work.