More than a million domains, including many registered by Fortune 100 companies and brand protection companies, are vulnerable to takeover by cybercriminals thanks to authentication weaknesses at a number of large web hosting providers and domain registrars, new research finds.

Image: Shutterstock.

Your Web browser knows how to find a site like example.com thanks to the global Domain Name System (DNS), which serves as a kind of phone book for the Internet by translating human-friendly website names (example.com) into numeric Internet addresses.

When someone registers a domain name, the registrar will typically provide two sets of DNS records that the customer then needs to assign to their domain. These records are crucial because they allow Web browsers to find the Internet address of the hosting provider that’s serving that domain.

But potential problems can arise when a domain’s DNS records are “lame,” meaning the authoritative name server doesn’t have enough information about the domain and can’t resolve queries to find it. A domain can become lame in a variety of ways, such as when it is not assigned an Internet address, or because the name servers in the domain’s authoritative record are misconfigured or missing.
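One way to picture a lame delegation is as a check on how each listed name server answers for the domain. The sketch below is a simplified model, not a description of how any resolver or the researchers’ tooling works: `NameServerReply`, `is_lame`, and `delegation_is_lame` are hypothetical names, and the reply fields are assumed to have been gathered already by some DNS query tool.

```python
from dataclasses import dataclass
from typing import Iterable

# Response codes treated here as "would not or could not answer" (an assumed set).
FAILURE_RCODES = {"REFUSED", "SERVFAIL"}


@dataclass(frozen=True)
class NameServerReply:
    """Hypothetical, simplified summary of one name server's reply for a domain."""
    reachable: bool       # the server answered at all
    authoritative: bool   # the reply carried the AA (authoritative answer) flag
    rcode: str            # DNS response code, e.g. "NOERROR" or "REFUSED"


def is_lame(reply: NameServerReply) -> bool:
    """Return True when this server cannot answer authoritatively for the domain."""
    if not reply.reachable:
        return True
    if reply.rcode in FAILURE_RCODES:
        return True
    return not reply.authoritative


def delegation_is_lame(replies: Iterable[NameServerReply]) -> bool:
    """Return True when no listed name server can answer for the domain.

    An empty list (no name servers at all) also counts as lame.
    """
    return all(is_lame(reply) for reply in replies)


healthy = NameServerReply(reachable=True, authoritative=True, rcode="NOERROR")
refused = NameServerReply(reachable=True, authoritative=False, rcode="REFUSED")
print(delegation_is_lame([refused, refused]))  # True
print(delegation_is_lame([healthy, refused]))  # False
```

In this model a domain is only flagged when every listed server fails, which matches the case described above where queries for the domain cannot be resolved at all.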

The reason lame domains are problematic is that a number of Web hosting and DNS providers allow users to claim control over a domain without accessing the true owner’s account at their DNS provider or registrar.

If this threat sounds familiar, that’s because it is hardly new. Back in 2019, KrebsOnSecurity wrote about thieves using this method to seize control over thousands of domains registered at GoDaddy, and using those to send bomb threats and sextortion emails (GoDaddy says they fixed that weakness in their systems not long after that 2019 story).

In the 2019 campaign, the spammers were able to take over vulnerable domains simply by registering a free account at GoDaddy and being assigned the same DNS servers as the hijacked domain.

Three years before that, the same pervasive weakness was described in a blog post by security researcher Matthew Bryant, who showed how one could commandeer at least 120,000 domains via DNS weaknesses at some of the world’s largest hosting providers.

Incredibly, new research jointly released today by security experts at Infoblox and Eclypsium finds this same authentication weakness is still present at a number of large hosting and DNS providers.

“It’s easy to exploit, very hard to detect, and it’s totally preventable,” said Dave Mitchell, principal threat researcher at Infoblox. “Free services make it easier [to exploit] at scale. And the majority of those are at a handful of DNS providers.”

SITTING DUCKS

Infoblox’s report found there are multiple cybercriminal groups abusing these stolen domains as a globally dispersed “traffic distribution system,” which can be used to mask the true source or destination of web traffic and to funnel Web users to malicious or phishing websites.

Commandeering domains this way can also allow thieves to impersonate trusted brands and abuse their positive or at least neutral reputation when sending email from those domains, as we saw in 2019 with the GoDaddy attacks.

“Hijacked domains have been used directly in phishing attacks and scams, as well as large spam systems,” reads the Infoblox report, which refers to lame domains as “Sitting Ducks.” “There is evidence that some domains were used for Cobalt Strike and other malware command and control (C2). Other attacks have used hijacked domains in targeted phishing attacks by creating lookalike subdomains. A few actors have stockpiled hijacked domains for an unknown purpose.”

Eclypsium researchers estimate there are currently about one million Sitting Duck domains, and that at least 30,000 of them have been hijacked for malicious use since 2019.

“As of the time of writing, numerous DNS providers enable this through weak or nonexistent verification of domain ownership for a given account,” Eclypsium wrote.

The security firms said they found that a number of compromised Sitting Duck domains were originally registered by brand protection companies that specialize in defensive domain registrations (reserving look-alike domains for top brands before those names can be grabbed by scammers) and in combating trademark infringement.

For example, Infoblox found cybercriminal groups using a Sitting Duck domain called clickermediacorp[.]com, which was a CBS Interactive Inc. domain initially registered in 2009 at GoDaddy. However, in 2010 the DNS was updated to DNSMadeEasy.com servers, and in 2012 the domain was transferred to MarkMonitor.

Another hijacked Sitting Duck domain, anti-phishing[.]org, was registered in 2003 by the Anti-Phishing Working Group (APWG), a cybersecurity not-for-profit organization that closely tracks phishing attacks.

In many cases, the researchers discovered Sitting Duck domains that appear to have been configured to auto-renew at the registrar, but whose authoritative DNS or hosting services were not renewed.

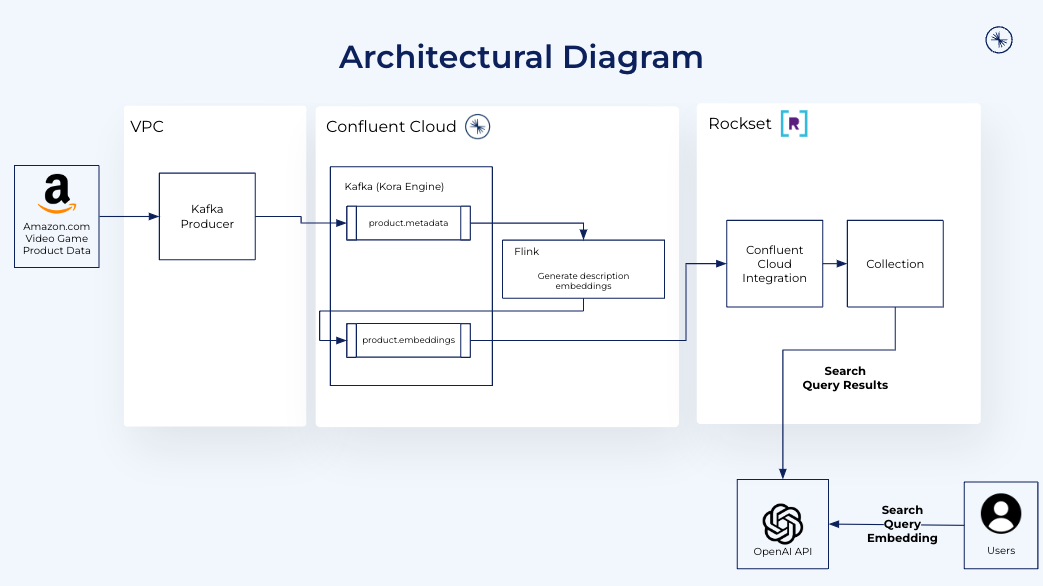

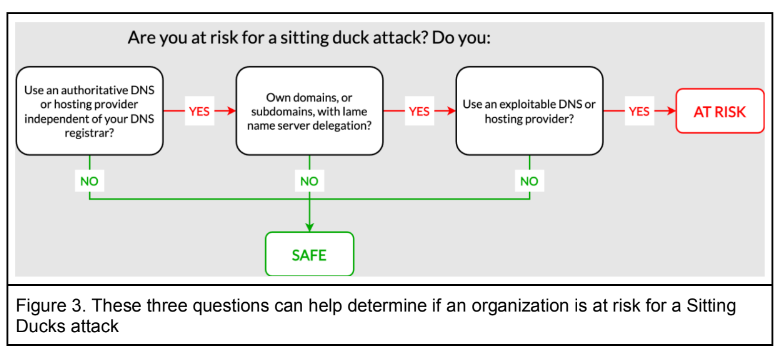

The researchers say Sitting Duck domains all possess three attributes that make them vulnerable to takeover:

1) the domain uses or delegates authoritative DNS services to a different provider than the domain registrar;

2) the authoritative name server(s) for the domain lack information about the Internet address the domain should point to;

3) the authoritative DNS provider is “exploitable,” i.e. an attacker can claim the domain at the provider and set up DNS records without access to the valid domain owner’s account at the domain registrar.

Image: Infoblox.
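The three attributes listed above amount to a simple conjunction, which can be sketched as a small check. This is an illustration only: `DomainFacts` and `is_sitting_duck` are hypothetical names, the registrar and provider strings are made up, and in practice each field would have to be established separately (for example, exploitability might be looked up in a list such as the one on GitHub mentioned in this article).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DomainFacts:
    """Hypothetical record of what is already known about one domain."""
    registrar: str                  # where the domain is registered
    dns_provider: str               # who runs the delegated authoritative DNS
    delegation_is_lame: bool        # name servers hold no usable records for it
    provider_is_exploitable: bool   # provider lets anyone claim the domain


def is_sitting_duck(domain: DomainFacts) -> bool:
    """Return True only when all three attributes from the list hold."""
    # Attribute 1: DNS is delegated to a provider other than the registrar.
    delegated_elsewhere = (
        domain.dns_provider.strip().lower() != domain.registrar.strip().lower()
    )
    # Attributes 2 and 3 are taken as already-determined facts.
    return (
        delegated_elsewhere
        and domain.delegation_is_lame
        and domain.provider_is_exploitable
    )


# Made-up example values, not taken from the research.
exposed = DomainFacts("ExampleRegistrar", "ExampleDNS", True, True)
same_company = DomainFacts("ExampleRegistrar", "ExampleRegistrar", True, True)
print(is_sitting_duck(exposed))       # True
print(is_sitting_duck(same_company))  # False
```

Because all three conditions must hold, fixing any one of them (moving DNS back to the registrar, repairing the delegation, or using a provider that verifies ownership) takes the domain out of the vulnerable set in this model.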

How does one know whether a DNS provider is exploitable? There is a frequently updated list published on GitHub called “Can I take over DNS,” which has been documenting exploitability by DNS provider over the past several years. The list includes examples for each of the named DNS providers.

In the case of the aforementioned Sitting Duck domain clickermediacorp[.]com, the domain appears to have been hijacked by scammers who claimed it at the web hosting firm DNSMadeEasy, which is owned by Digicert, one of the industry’s largest issuers of digital certificates (SSL/TLS certificates).

In an interview with KrebsOnSecurity, DNSMadeEasy founder and senior vice president Steve Job said the problem isn’t really his company’s to solve, noting that DNS providers that are not also domain registrars have no real way of validating whether a given customer legitimately owns the domain being claimed.

“We do shut down abusive accounts when we find them,” Job said. “But it’s my belief that the onus needs to be on the [domain registrants] themselves. If you’re going to buy something and point it somewhere you have no control over, we can’t prevent that.”

Infoblox, Eclypsium, and the DNS wiki listing at GitHub all say that web hosting giant Digital Ocean is among the vulnerable hosting firms. In response to questions, Digital Ocean said it was exploring options for mitigating such activity.

“The DigitalOcean DNS service is not authoritative, and we are not a domain registrar,” Digital Ocean wrote in an emailed response. “Where a domain owner has delegated authority to our DNS infrastructure with their registrar, and they have allowed their ownership of that DNS record in our infrastructure to lapse, that becomes a ‘lame delegation’ under this hijack model. We believe the root cause, ultimately, is poor management of domain name configuration by the owner, akin to leaving your keys in your unlocked car, but we acknowledge the opportunity to adjust our non-authoritative DNS service guardrails in an effort to help minimize the impact of a lapse in hygiene at the authoritative DNS level. We’re connected with the research teams to explore additional mitigation options.”

In a statement provided to KrebsOnSecurity, the hosting provider and registrar Hostinger said they were working to implement a solution to prevent lame duck attacks in the “upcoming weeks.”

“We are working on implementing an SOA-based domain verification system,” Hostinger wrote. “Custom nameservers with a Start of Authority (SOA) record will be used to verify whether the domain truly belongs to the customer. We aim to launch this user-friendly solution by the end of August. The final step is to deprecate preview domains, a functionality sometimes used by customers with malicious intents. Preview domains will be deprecated by the end of September. Legitimate users will be able to use randomly generated temporary subdomains instead.”

What did DNS providers that have struggled with this issue in the past do to address these authentication challenges? The security firms said that to claim a domain name, the best practice providers gave the account holder random name servers that required a change at the registrar before the domains could go live. They also found the best practice providers used various mechanisms to ensure that the newly assigned name server hosts did not match previous name server assignments.
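The mitigation described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not any provider’s actual mechanism: `assign_name_servers` is a hypothetical helper, the `dns-provider.example` host names are invented, and the provider is assumed to keep a record of which of its name servers a domain has used before.

```python
import random
from typing import Iterable, List, Optional, Set


def assign_name_servers(
    pool: Iterable[str],
    previously_assigned: Set[str],
    count: int = 2,
    rng: Optional[random.Random] = None,
) -> List[str]:
    """Pick `count` random name servers the domain has never been assigned.

    Since the new hosts differ from whatever the registrar already lists,
    the domain only goes live after someone with access to the registrar
    account updates the delegation to match.
    """
    rng = rng or random.SystemRandom()
    # Exclude every host that appeared in an earlier assignment for this domain.
    available = sorted(set(pool) - set(previously_assigned))
    if len(available) < count:
        raise ValueError("not enough unused name servers in the pool")
    return rng.sample(available, count)


# Invented pool of eight provider name servers.
pool = [f"ns{i}.dns-provider.example" for i in range(1, 9)]
old = {"ns1.dns-provider.example", "ns2.dns-provider.example"}
new_hosts = assign_name_servers(pool, old)
print(set(new_hosts).isdisjoint(old))  # True
```

The key property is that an attacker who opens a fresh account cannot be handed the same name servers the abandoned delegation still points to, so claiming the domain at the provider has no effect without a matching change at the registrar.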

[Side note: Infoblox observed that many of the hijacked domains were being hosted at Stark Industries Solutions, a sprawling hosting provider that appeared two weeks before Russia invaded Ukraine and has become the epicenter of countless cyberattacks against enemies of Russia].

Both Infoblox and Eclypsium said that without more cooperation and less finger-pointing by all stakeholders in the global DNS, attacks on sitting duck domains will continue to rise, with domain registrants and regular Internet users caught in the middle.

“Government organizations, regulators, and standards bodies should consider long-term solutions to vulnerabilities in the DNS management attack surface,” the Infoblox report concludes.