Success in a DevSecOps enterprise hinges on delivering worth to the tip consumer, not merely finishing intermediate steps alongside the best way. Organizations and applications usually wrestle to attain this resulting from quite a lot of elements, reminiscent of a scarcity of clear possession and accountability for the potential to ship software program, useful siloes versus built-in groups and processes, lack of efficient instruments for groups to make use of, and a scarcity of efficient assets for group members to leverage to shortly rise up to hurry and enhance productiveness.

An absence of a central driving pressure may end up in siloed items inside a given group or program, fragmented determination making, and an absence of outlined key efficiency metrics. Consequently, organizations could also be hindered of their means to ship functionality on the velocity of relevance. A siloed DevSecOps infrastructure, the place disjointed environments are intertwined to kind an entire pipeline, causes builders to expend vital effort to construct an utility with out the assist of documentation and steerage for working inside the supplied platforms. Groups can’t create repeatable options within the absence of an end-to-end built-in utility supply pipeline. With out one, effectivity suffers, and pointless practices bathroom down the whole course of.

Step one in reaching the worth DevSecOps can convey is to know how we outline it:

a socio-technical system made up of a set of each software program instruments and processes. It’s not a computer-based system to be constructed or acquired; it’s a mindset that depends on outlined processes for the fast improvement, fielding, and operations of software program and software-based programs using automation the place possible to attain the specified throughput of growing, fielding, and sustaining new product options and capabilities.

DevSecOps is thus a mindset that builds on automation the place possible.

The target of an efficient DevSecOps evaluation is to know the software program improvement course of and make suggestions for enhancements that can positively affect the worth, high quality, and velocity of supply of merchandise to the tip consumer in an operationally steady and safe method. A complete evaluation of present capabilities should embody each quantitative and qualitative approaches to gathering information and figuring out exactly the place challenges reside within the product supply course of. The scope of an evaluation should take into account all processes which can be required to subject and function a software program product as a part of the worth supply processes. The aperture via which a DevSecOps evaluation group focuses its work is wider than the instruments and processes sometimes regarded as the software program improvement pipeline. The evaluation should embody the broader context of the whole product supply pipeline, together with planning phases, the place functionality (or worth) wants are outlined and translated into necessities, in addition to post-deployment operational phases. This wider view permits an evaluation group to find out how effectively organizations ship worth.

There are a myriad of overlapping influences that may trigger dysfunction inside a DevSecOps enterprise. Trying from the skin it may be tough to peel again the layers and successfully discover the foremost causes. This weblog focuses on methods to conduct a DevSecOps evaluation with an strategy that makes use of 4 methodologies to investigate an enterprise from the angle of the practitioner utilizing the instruments and processes to construct and ship worthwhile software program. Taking the angle of the practitioner permits the evaluation group to floor probably the most instantly related challenges dealing with the enterprise.

A 4-Pronged Evaluation Methodology

To border the expertise of a practitioner, a complete evaluation requires a layered strategy. This type of strategy can assist assessors collect sufficient information to know each the total scope and the particular particulars of the builders’ experiences, each optimistic and destructive. We take a four-pronged strategy:

- Immersion: The evaluation group immerses itself into the event course of by both growing a small, consultant utility from scratch, becoming a member of an current improvement group, or different technique of gaining firsthand expertise and perception within the course of. Avoiding particular therapy is vital to collect real-world information, so the evaluation group ought to use means to turn into a “secret shopper” wherever potential. This additionally permits the evaluation group to determine what the true, not simply documented, course of is to ship worth.

- Remark: The evaluation group instantly observes current utility improvement groups as they work to construct, check, ship, and deploy their functions to the tip customers. Observations ought to cowl as a lot of the value-delivery course of as practicable, reminiscent of consumer engagement, product design, dash planning, demos, retrospectives, and software program releases.

- Engagement: The evaluation group conducts interviews and centered dialogue with improvement groups and different related stakeholders to make clear and collect context for his or her expertise and observations. Ask the practitioners to point out the evaluation group how they work.

- Benchmarking: The evaluation group captures accessible metrics from the enterprise and its processes and compares them with anticipated outcomes for related organizations.

To attain this, an evaluation group can use ethnographic analysis strategies as described within the Luma Institute Innovating for Individuals System. Interviewing, fly-on-the-wall remark, and contextual inquiry permit the evaluation group to look at product groups working, conduct follow-up interviews about what they noticed, and ask questions on conduct and expectations that they didn’t observe. By utilizing the walk-a-mile immersion approach, the evaluation group can communicate firsthand to their experiences utilizing the group’s present instruments and processes.

These strategies assist be sure that the evaluation group understands the method by getting firsthand expertise and doesn’t overly depend on documentation or the biases of remark or engagement topics. Additionally they allow the group to higher perceive what they’re observing or listening to about from different practitioners and determine the elements of the worth supply course of the place enhancements are extra possible available.

The two Dimensions of Assessing DevSecOps Capabilities

To precisely assess DevSecOps processes, one wants each quantitative information (e.g., metrics) to pinpoint and prioritize challenges primarily based on affect and qualitative information (e.g., expertise and suggestions) to know the context and develop focused options. Whereas the evaluation methodology mentioned above supplies a repeatable strategy for amassing the mandatory quantitative and qualitative information, it isn’t ample as a result of it doesn’t inform the assessor what information is required, what inquiries to ask, what DevSecOps capabilities are anticipated, and so forth. To handle these questions whereas assessing a company’s DevSecOps capabilities, the next dimensions needs to be thought-about:

- a quantitative evaluation of a company’s efficiency in opposition to tutorial and trade benchmarks of efficiency

- a qualitative evaluation of a company’s adherence to established greatest practices of high-performing DevSecOps organizations

Inside every dimension, the evaluation group should take a look at a number of essential elements of the worth supply course of:

- Worth Definition: How are consumer wants captured and translated into merchandise and options?

- Developer Expertise: Are the instruments and processes that builders are anticipated to make use of intuitive, and do they cut back toil?

- Platform Engineering: Are the instruments and processes effectively built-in, and are the precise elements automated?

- Software program Improvement Efficiency: How efficient and environment friendly are the event processes at constructing and delivering useful software program?

Since 2013, Google has printed an annual DevSecOps Analysis and Evaluation (DORA) Speed up State of DevOps Report. These reviews assemble information from hundreds of practitioners worldwide and compile them right into a complete report breaking down four-to-five key metrics to find out the general state of DevSecOps practices throughout all kinds of enterprise sorts and sectors. An evaluation group can use these reviews to shortly key in on the metrics and thresholds that analysis has proven to be vital indicators of total efficiency. Along with the DORA metrics, the evaluation group can conduct a literature seek for different publications that present metrics associated to a particular software program architectural sample, reminiscent of real-time resource-constrained cyber-physical programs.

To have the ability to examine a company or program to trade benchmarks, such because the DORA metrics or case research, the evaluation group should be capable of collect organizationally consultant information that may be equated to the metrics discovered within the given benchmark or case research. This may be achieved in a mixture of how, together with amassing information manually because the evaluation group shadows the group’s builders or stitching collectively information collected from automated instruments and interviews. As soon as the info is collected, visualizations such because the determine under may be created to point out the place the given group or program compares to the benchmark.

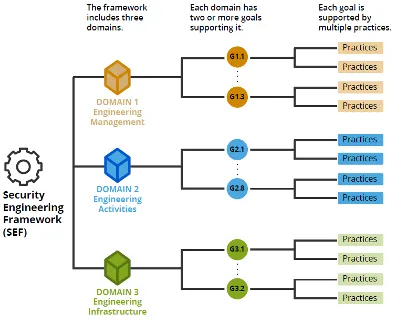

From a qualitative perspective, the evaluation group can use the SEI’s DevSecOps Platform Unbiased Mannequin (PIM), which incorporates greater than 200 necessities one would anticipate to see in a high-performing DevSecOps group. The PIM permits applications to map their present or proposed capabilities onto the set of capabilities and necessities of the PIM to make sure that the DevSecOps ecosystem into consideration or evaluation implements the perfect practices. For assessments, the PIM supplies the potential for applications to search out potential gaps by trying throughout their present ecosystem and processes and mapping them to necessities that specific the extent of high quality of outcomes anticipated. The determine under exhibits an instance abstract output of the qualitative evaluation when it comes to the ten DevSecOps capabilities outlined inside the PIM and total maturity stage of the group beneath evaluation. Check with the DevSecOps Maturity Mannequin for extra info relating to using the PIM for qualitative evaluation.

Charting Your Course to DevSecOps Success

By using a multi-faceted evaluation methodology that mixes immersion, remark, engagement, and benchmarking, organizations can acquire a holistic view of their DevSecOps functionality. Leveraging benchmarks just like the DORA metrics and reference architectures just like the DevSecOps PIM supplies a structured strategy to measuring efficiency in opposition to trade requirements and figuring out particular areas for enchancment.

Purposefully taking the angle of the practitioners tasked with utilizing the instruments and processes to ship worth helps the assessor focus their suggestions for enhancements on the areas which can be prone to have the very best affect on the supply of worth in addition to determine these elements of the method that detract from the supply of worth.

Bear in mind, the journey in the direction of a high-performing DevSecOps atmosphere is iterative, ongoing, and centered on delivering worth to the tip consumer. By making use of data-driven quantitative and qualitative strategies in performing a two-dimensional DevSecOps evaluation, an evaluation group is effectively positioned to determine unbiased observations and make actionable strategic and tactical suggestions. Common assessments are very important to trace progress, adapt to evolving wants, and make sure you’re persistently delivering worth to your finish customers with velocity, safety, and effectivity.