Introduction to Operational Analytics

Operational analytics is a very specific term for a type of analytics that focuses on improving existing operations. This type of analytics, like others, involves the use of various data mining and data aggregation tools to get more transparent information for business planning. The main characteristic that distinguishes operational analytics from other types of analytics is that it is “analytics on the fly,” which means that signals emanating from the various parts of a business are processed in real time to feed back into instant decision making for the business. Some people refer to this as “continuous analytics,” which is another way to emphasize the continuous digital feedback loop that can exist from one part of the business to others.

Operational analytics allows you to process various types of information from different sources and then decide what to do next: what action to take, whom to talk to, what immediate plans to make. This form of analytics has become popular with the digitization trend in almost all industry verticals, because it is digitization that furnishes the data needed for operational decision-making.

Examples of operational analytics

Let’s discuss some examples of operational analytics.

Software game developers

Let’s say that you are a software game developer and you want your game to automatically upsell a certain feature depending on the gamer’s playing habits and the current state of all the players in the current game. This is an operational analytics query because it allows the game developer to make instant decisions based on analysis of current events.

Product managers

Back in the day, product managers used to do a lot of manual work: talking to customers, asking them how they use the product, what features in the product slow them down, and so on. In the age of operational analytics, a product manager can gather all these answers by querying data that records usage patterns from the product’s user base, and he or she can immediately feed that information back to make the product better.

Marketing managers

Similarly, in the case of marketing analytics, a marketing manager used to organize a few focus groups, try out a few experiments based on their own creativity, and then implement them. Depending on the results of experimentation, they would then decide what to do next. An experiment may take weeks or months. We are now seeing the rise of the “marketing engineer,” a person who is well-versed in using data systems.

These marketing engineers can run multiple experiments at once, gather results from experiments in the form of data, terminate the ineffective experiments and nurture the ones that work, all through the use of data-based software systems. The more experiments they can run and the quicker the turnaround times of results, the better their effectiveness in marketing their product. This is another form of operational analytics.

Definition of Operational Analytics Processing

An operational analytics system helps you make instant decisions from reams of real-time data. You collect new data from your data sources and they all stream into your operational data engine. Your user-facing interactive apps query the same data engine to fetch insights from your data set in real time, and you then use that intelligence to provide a better user experience to your users.

Ah, you might say that you have seen this “beast” before. In fact, you might be very, very familiar with a system that…

- encompasses your data pipeline that sources data from various sources

- deposits it into your data lake or data warehouse

- runs various transformations to extract insights, and then…

- parks those nuggets of information in a key-value store for fast retrieval by your interactive user-facing applications

And you would be absolutely right in your analysis: an equivalent engine that has the entire set of these functions is an operational analytics processing system!

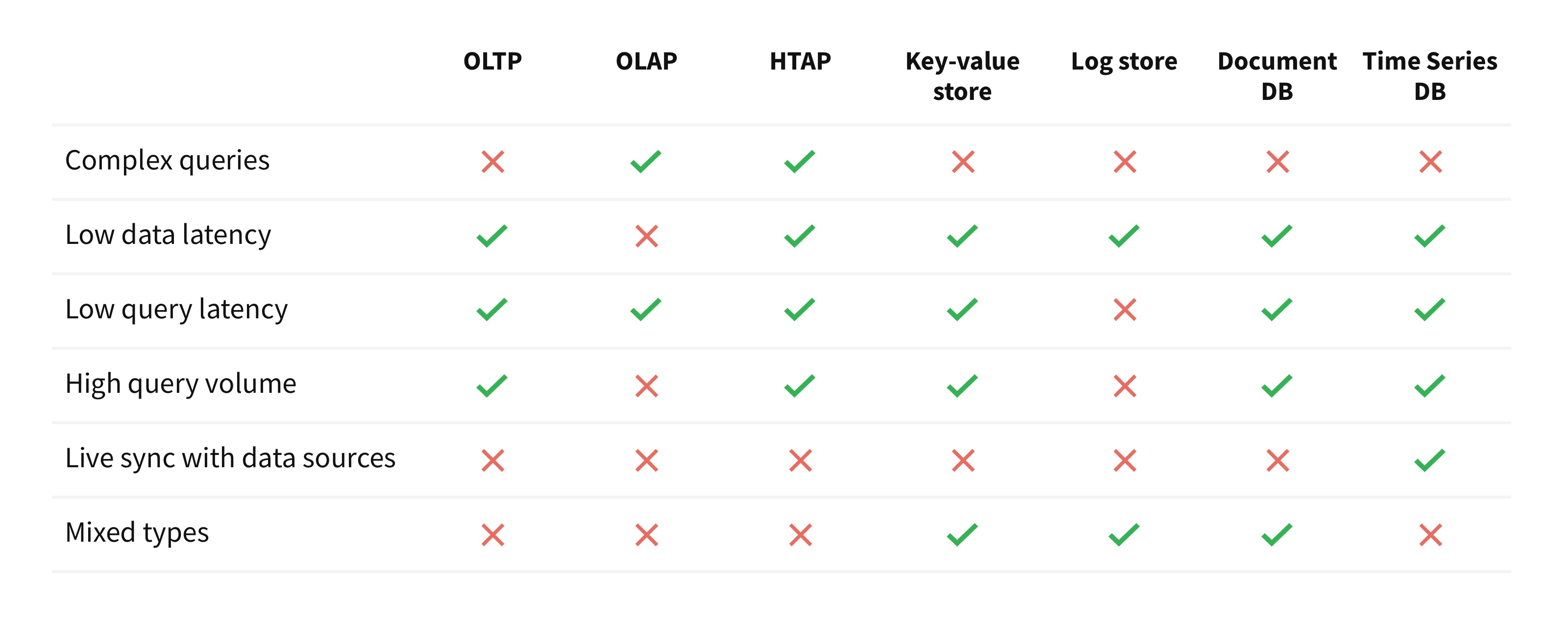

The definition of an operational analytics processing engine can be expressed in the form of the following six propositions:

- Complex queries: Support for queries like joins, aggregations, sorting, relevance, etc.

- Low data latency: An update to any data record is visible in query results in under a few seconds.

- Low query latency: A simple search query returns in under a few milliseconds.

- High query volume: Able to serve at least a few hundred concurrent queries per second.

- Live sync with data sources: Ability to keep itself in sync with various external sources without having to write external scripts. This can be done via change-data-capture of an external database, or by tailing streaming data sources.

- Mixed types: Allows values of different types in the same column. This is needed to be able to ingest new data without needing to clean them at write time.

Let’s discuss each of the above propositions in greater detail and see why each of these features is necessary for an operational analytics processing engine.

Proposition 1: Complex queries

A database, in any traditional sense, allows the application to express complex data operations in a declarative way. This frees the application developer from having to explicitly understand data access patterns, data optimizations, etc., so that he or she can focus on the application logic. The database would support filtering, sorting, aggregations, etc. to empower the application to process data efficiently and quickly. The database would support joins across two or more data sets so that an application could combine the information from multiple sources to extract intelligence from them.

For example, SQL, HiveQL, KSQL, etc. provide declarative methods to express complex data operations on data sets. They have varying expressive powers: SQL supports full joins whereas KSQL does not.
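To make “declarative” concrete, here is a minimal sketch of a join, an aggregation, a filter and a sort expressed in a single SQL statement. It uses Python’s built-in sqlite3 module purely as a stand-in query engine; the `users` and `purchases` tables, their columns, and the function names are hypothetical and exist only for this illustration.

```python
import sqlite3


def build_demo_db():
    """Create a tiny in-memory database (hypothetical schema, for illustration only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute("CREATE TABLE purchases (user_id INTEGER, amount INTEGER)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [(1, "ana"), (2, "bo"), (3, "cy")],
    )
    conn.executemany(
        "INSERT INTO purchases VALUES (?, ?)",
        [(1, 30), (1, 20), (2, 5), (3, 40)],
    )
    return conn


def top_spenders(conn, min_total):
    """Join users with purchases, total the amounts per user, and sort by total."""
    query = """
        SELECT u.name, SUM(p.amount) AS total
        FROM users AS u
        JOIN purchases AS p ON p.user_id = u.id
        GROUP BY u.id, u.name
        HAVING SUM(p.amount) >= ?
        ORDER BY total DESC
    """
    return conn.execute(query, (min_total,)).fetchall()
```

Calling `top_spenders(build_demo_db(), 10)` returns `[("ana", 50), ("cy", 40)]`. The application states what it wants; how the join is executed and in what order the rows are scanned is left to the engine.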

Proposition 2: Low data latency

An operational analytics database, unlike a transactional database, does not need to support transactions. The applications that use this type of database use it to store streams of incoming data; they do not use the database to record transactions. The incoming data rate is bursty and unpredictable. The database is optimized for high-throughput writes and supports an eventual consistency model where newly written data becomes visible in a query within a few seconds at most.

Proposition 3: Low query latency

An operational analytics database is able to respond to queries quickly. In this respect, it is very similar to transactional databases like Oracle, PostgreSQL, etc. It is optimized for low-latency queries rather than throughput. Simple queries finish in a few milliseconds, while complex queries scale out to finish quickly as well. This is one of the basic requirements to be able to power any interactive application.

Proposition 4: High query volume

A user-facing application typically makes many queries in parallel, especially when multiple users are using the application simultaneously. For example, a gaming application might have many users playing the same game at the same time. A fraud detection application might be processing multiple transactions from different users simultaneously and might need to fetch insights about each of these users in parallel. An operational analytics database is capable of supporting a high query rate, ranging from tens of queries per second (e.g. a live dashboard) to thousands of queries per second (e.g. an online mobile app).

Proposition 5: Live sync with data sources

An online analytics database allows you to automatically and continuously sync data from multiple external data sources. Without this feature, you will create yet another data silo that is difficult to maintain and babysit.

You have your own system-of-truth databases, which could be Oracle or DynamoDB, where you do your transactions, and you have event logs in Kafka; but you need a single place where you can bring in all these data sets and combine them to generate insights. The operational analytics database has built-in mechanisms to ingest data from a variety of data sources and automatically sync them into the database. It may use change-data-capture to continuously update itself from upstream data sources.
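As a rough illustration of what change-data-capture sync involves, the sketch below applies an ordered stream of change events to an in-memory replica. The event shape (`seq`, `op`, `key`, `fields`) and the function names are assumptions made for this example; they do not reflect the format of any particular CDC tool or database.

```python
def apply_change(replica, event):
    """Apply one change event to a replica keyed by primary key."""
    op, key = event["op"], event["key"]
    if op in ("insert", "update"):
        # Upsert: merge the changed fields into the existing row, if any.
        row = replica.setdefault(key, {})
        row.update(event["fields"])
    elif op == "delete":
        replica.pop(key, None)
    else:
        raise ValueError(f"unknown op: {op!r}")


def sync(replica, change_stream, last_seq=0):
    """Apply events newer than last_seq, in order; return the new high-water mark."""
    for event in change_stream:
        if event["seq"] <= last_seq:
            continue  # already applied, so replaying the stream is harmless
        apply_change(replica, event)
        last_seq = event["seq"]
    return last_seq
```

Tracking the last applied sequence number is what lets the replica resume after a restart without re-applying old changes. A real system would persist that mark alongside the data.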

Proposition 6: Mixed types

An analytics system is super useful when it is able to store two or more different types of objects in the same column. Without this feature, you would have to clean up the event stream before you can write it to the database. An analytics system can provide low data latency only if the cleaning required when new data arrives is reduced to a minimum. Thus, an operational analytics database has the capability to store objects of mixed types within the same column.

The six characteristics above are unique to an OPerational Analytics Processing (OPAP) system.

Architectural Uniqueness of an OPAP System

The Database LOG

The Database is the LOG; it durably stores data. It is the “D” in ACID systems. Let’s analyze the three types of data processing systems as far as their LOG is concerned.

The primary use of an OLTP system is to guarantee some form of strong consistency between updates and reads. In these cases the LOG is behind the database server(s) that serve queries. For example, an OLTP system like PostgreSQL has a database server; updates arrive at the database server, which then writes them to the LOG. Similarly, Amazon Aurora’s database server(s) receive new writes, append transactional information (like sequence number, transaction number, etc.) to each write, and then persist it in the LOG. In both of these cases, the LOG is hidden behind the transaction engine because the LOG needs to store metadata about the transaction.

Similarly, many OLAP systems support some basic form of transactions as well. For example, the OLAP Snowflake Data Warehouse explicitly states that it is designed for bulk updates and trickle inserts (see Section 3.3.2 titled Concurrency Control). They use a copy-on-write approach for entire datafiles and a global key-value store as the LOG. Because the database servers front the LOG, streaming write rates are only as fast as the database servers can handle.

On the other hand, an OPAP system’s primary goal is to support a high update rate and low query latency. An OPAP system does not have the concept of a transaction. As such, an OPAP system has the LOG in front of the database servers, the reason being that the log is needed only for durability. Having the log front the database is advantageous: the log can serve as a buffer for large write volumes in the face of sudden bursty write storms. A log can support a much higher write rate because it is optimized for writes and not for random reads.
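The log-in-front arrangement can be sketched in a few lines. In this toy model, writers only ever append to the log, and a query server tails the log at its own pace to build a queryable index. The class and method names (`Log`, `QueryServer`, `catch_up`) are hypothetical, and a real log would be durable and distributed rather than a Python list.

```python
class Log:
    """Append-only log: the only component on the write path."""

    def __init__(self):
        self._entries = []

    def append(self, record):
        self._entries.append(record)
        return len(self._entries) - 1  # offset of the new record

    def read_from(self, offset, max_records):
        return self._entries[offset:offset + max_records]


class QueryServer:
    """Tails the log in bounded batches and maintains a queryable index."""

    def __init__(self, log):
        self._log = log
        self._next_offset = 0
        self.index = {}

    def catch_up(self, max_records=100):
        """Index up to max_records new (key, value) records; return how many were applied."""
        batch = self._log.read_from(self._next_offset, max_records)
        for key, value in batch:
            self.index[key] = value
        self._next_offset += len(batch)
        return len(batch)
```

A burst of 250 appends is absorbed by the log immediately, while the query server drains it in three `catch_up()` calls of 100, 100 and 50 records. Until it has caught up, recent writes are durable but not yet visible to queries, which is the eventual consistency model described under Proposition 2.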

Type binding at query time and not at write time

OLAP databases associate a fixed type with every column in the database. This means that every value stored in that column conforms to the given type. The database checks for conformity when a new record is written to the database. If a field of a new record does not adhere to the specified type of the column, the record is either discarded or a failure is signaled. To avoid these types of errors, OLAP databases are fronted by a data pipeline that cleans and validates every new record before it is inserted into the database.

Example

Let’s say that a database has a column called ‘zipcode’. We know that zip codes are integers in the US while zip codes in the UK can have both letters and digits. In an OLAP database, we have to convert both of these to the ‘string’ type before we can store them in the same column. But once we store them as strings in the database, we lose the ability to make integer comparisons as part of a query on this column. For example, a query of the type select count(*) from table where zipcode > 1000 will throw an error because we are doing an integral range check but the column type is a string.

But an OPAP database does not have a fixed type for every column in the database. Instead, the type is associated with every individual value stored in the column. The ‘zipcode’ field in an OPAP database is capable of storing both of these types of records in the same column without losing the type information of any field.

Going further, for the above query select count(*) from table where zipcode > 1000, the database could inspect and match only those values in the column that are integers and return a valid result set. Similarly, a query select count(*) from table where zipcode = 'NW89EU' can match only those records that have a value of type ‘string’ and return a valid result set.
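The zipcode example can be mimicked in a few lines of Python, where every value already carries its own type. This is only a sketch of the idea, not how any particular database implements it; `count_where` and the sample rows are made up for the illustration.

```python
# Sample rows with a mixed-type 'zipcode' column (hypothetical data).
ROWS = [
    {"zipcode": 94105},
    {"zipcode": "NW89EU"},
    {"zipcode": 501},
    {"zipcode": "SW1A1AA"},
    {"zipcode": 10001},
]

_OPERATORS = {
    ">": lambda a, b: a > b,
    "=": lambda a, b: a == b,
}


def count_where(rows, column, operator, literal):
    """Count rows whose value has the same type as the literal and satisfies the comparison."""
    compare = _OPERATORS[operator]
    count = 0
    for row in rows:
        cell = row.get(column)
        # Bind the type at query time: values of another type simply do not match.
        if type(cell) is not type(literal):
            continue
        if compare(cell, literal):
            count += 1
    return count
```

Here `count_where(ROWS, "zipcode", ">", 1000)` returns 2, because only the integer values are considered, and `count_where(ROWS, "zipcode", "=", "NW89EU")` returns 1, because only the string values are considered. Neither query fails on the values of the other type.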

Thus, an OPAP database can support a strong schema, but enforce the schema binding at query time rather than at data insertion time. This is what is termed strong dynamic typing.

Comparisons with Other Data Systems

Now that we understand the requirements of an OPAP database, let’s compare and contrast it with other existing data solutions. In particular, let’s compare its features with an OLTP database, an OLAP data warehouse, an HTAP database, a key-value database, a distributed logging system, a document database and a time-series database. These are some of the popular systems in use today.

Compare with an OLTP database

An OLTP system is used to process transactions. Typical examples of transactional systems are Oracle, Spanner, PostgreSQL, etc. These systems are designed for low-latency updates and inserts, and the writes are replicated across failure domains so that they are durable. The primary design focus of these systems is to not lose a single update and to make it durable. A single query typically processes a few kilobytes of data at most. They can sustain a high query volume, but unlike an OPAP system, a single query is not expected to process megabytes or gigabytes of data in milliseconds.

Compare with an OLAP data warehouse

- An OLAP data warehouse can process very complex queries on large datasets and is similar to an OPAP system in this regard. Examples of OLAP data warehouses are Amazon Redshift and Snowflake. But this is where the similarity ends.

- An OLAP system is designed for overall system throughput whereas OPAP is designed for the lowest of query latencies.

- An OLAP data warehouse can have an overall high write rate, but unlike an OPAP system, writes are batched and inserted into the database periodically.

- An OLAP database requires a strict schema at data insertion time, which essentially means that schema binding happens at data write time. On the other hand, an OPAP database natively understands semi-structured schema (JSON, XML, etc.) and the strict schema binding occurs at query time.

- An OLAP warehouse supports a low number of concurrent queries (e.g. Amazon Redshift supports up to 50 concurrent queries), whereas an OPAP system can scale to support large numbers of concurrent queries.

Compare with an HTAP database

An HTAP database is a mix of both OLTP and OLAP systems. This means that the differences mentioned in the two sections above apply to HTAP systems as well. Typical HTAP systems include SAP HANA and MemSQL.

Compare with a key-value store

Key-Value (KV) stores are known for speed. Typical examples of KV stores are Cassandra and HBase. They provide low latency and high concurrency, but this is where the similarity with OPAP ends. KV stores do not support complex queries like joins, sorting, aggregations, etc. Also, they are data silos because they do not support the auto-sync of data from external sources and thus violate Proposition 5.

Compare with a logging system

A log store is designed for high write volumes. It is suitable for writing a high volume of updates. Apache Kafka and Apache Samza are examples of logging systems. The updates reside in a log, which is not optimized for random reads. A logging system is good at windowing functions but does not support arbitrary complex queries across the entire data set.

Compare with a document database

A document database natively supports multiple data formats, typically JSON. Examples of document databases are MongoDB, Couchbase and Elasticsearch. Queries are low latency and can have high concurrency, but these databases do not support complex queries like joins, sorting and aggregations. They also do not support automatic ways to sync new data from external sources, thus violating Proposition 5.

Compare with a time-series database

A time-series database is a specialized operational analytics database. Queries are low latency and it can support a high concurrency of queries. Examples of time-series databases are Druid, InfluxDB and TimescaleDB. It can support complex aggregations on one dimension, and that dimension is ‘time’. On the other hand, an OPAP system can support complex aggregations on any data dimension and not just on the ‘time’ dimension. Time-series databases are not designed to join two or more data sets, whereas OPAP systems can join two or more datasets as part of a single query.
