Image by Author | DALLE-3

It has always been tedious to train Large Language Models. Even with extensive support from public platforms like HuggingFace, the process involves setting up different scripts for each pipeline stage. From preparing data for pretraining, fine-tuning, or RLHF to configuring the model with quantization and LoRAs, training an LLM requires laborious manual effort and tweaking.

The release of LLaMA-Factory in 2024 aims to solve exactly this problem. The GitHub repository makes setting up model training for all stages of an LLM lifecycle extremely convenient. From pretraining to SFT and even RLHF, the repository provides built-in support for the setup and training of the latest available LLMs.

Supported Models and Data Formats

The repository supports recent models including Llama, LLaVA, Mixtral Mixture-of-Experts, Qwen, Phi, and Gemma, among others. The full list can be found in the GitHub repository. It supports pretraining, SFT, and major RL techniques including DPO, PPO, and ORPO, and it allows the latest methodologies from full fine-tuning to freeze-tuning, LoRA, QLoRA, and agent tuning.

Moreover, the repository also provides sample datasets for each training step. The sample datasets generally follow the alpaca template, although the sharegpt format is also supported. We highlight the Alpaca data formatting below for a better understanding of how to set up your proprietary data.

Note that when using your own data, you must edit the dataset_info.json file in the LLaMA-Factory/data folder and add information about your data file.

Pre-training Data

The data is stored in a JSON file, and only the text column is used for training the LLM. The data needs to be in the format given below to set up pre-training.

[

{"text": "document"},

{"text": "document"}

]
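As a minimal sketch of how a raw corpus could be turned into the layout above, the Python snippet below wraps each document in a {"text": ...} object. The function names and the sample strings are hypothetical and only serve as illustration; they are not part of LLaMA-Factory.

```python
import json
from pathlib import Path


def build_pretraining_records(documents):
    """Wrap each non-empty document string in the {"text": ...} shape shown above."""
    return [{"text": doc.strip()} for doc in documents if doc and doc.strip()]


def write_pretraining_file(documents, path):
    """Write the records as one JSON array and return how many were written."""
    records = build_pretraining_records(documents)
    Path(path).write_text(
        json.dumps(records, ensure_ascii=False, indent=2), encoding="utf-8"
    )
    return len(records)


# Placeholder corpus, for illustration only.
sample_docs = ["First raw document.", "  Second raw document.  ", ""]
records = build_pretraining_records(sample_docs)
print(json.dumps(records, indent=2))
```

Empty strings are dropped here as a design choice, since an empty text entry would contribute nothing to training.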

Supervised Fine-Tuning Data

In SFT data, there are three main parameters: instruction, input, and output, of which instruction and output are required. In addition, system and history can be passed optionally and will be used to train the model accordingly if provided in the dataset.

The general alpaca format for SFT data is as given:

[

{

"instruction": "human instruction (required)",

"input": "human input (optional)",

"output": "model response (required)",

"system": "system prompt (optional)",

"history": [

["human instruction in the first round (optional)", "model response in the first round (optional)"],

["human instruction in the second round (optional)", "model response in the second round (optional)"]

]

}

]
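Before training, it can help to check that every record has the required fields. The following is a small sketch of such a check, assuming the required and optional markings in the format above; the function name validate_sft_record is hypothetical and not something the repository provides.

```python
REQUIRED_KEYS = ("instruction", "output")


def validate_sft_record(record):
    """Return a list of problems found in one alpaca-style record (empty if none)."""
    problems = []
    for key in REQUIRED_KEYS:
        value = record.get(key)
        if not isinstance(value, str) or not value.strip():
            problems.append(f"missing or empty required field: {key}")
    # Each history entry is expected to be an [instruction, response] pair.
    for turn in record.get("history", []):
        if not (isinstance(turn, (list, tuple)) and len(turn) == 2):
            problems.append("each history entry must be an [instruction, response] pair")
            break
    return problems


good = {
    "instruction": "Translate to French.",
    "input": "Good morning",
    "output": "Bonjour",
    "history": [["Hi", "Hello!"]],
}
bad = {"instruction": "Summarize the text.", "history": [["only one item"]]}

print(validate_sft_record(good))
print(validate_sft_record(bad))
```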

Reward Modeling Data

LLaMA-Factory provides support for training an LLM for preference alignment using RLHF. The data must provide two different responses for the same instruction, and the pair must make the intended preference clear.

The better-aligned response is passed to the chosen key and the worse response is passed to the rejected key. The data format is as follows:

[

{

"instruction": "human instruction (required)",

"input": "human input (optional)",

"chosen": "chosen answer (required)",

"rejected": "rejected answer (required)"

}

]
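To illustrate how a labeled comparison could be mapped onto this format, here is a short sketch. The helper make_preference_record and its a_is_better flag are hypothetical names introduced only for this example.

```python
def make_preference_record(instruction, response_a, response_b, a_is_better, input_text=""):
    """Build one preference record; the preferred response goes under "chosen"."""
    if response_a == response_b:
        raise ValueError("chosen and rejected responses must differ")
    if a_is_better:
        chosen, rejected = response_a, response_b
    else:
        chosen, rejected = response_b, response_a
    return {
        "instruction": instruction,
        "input": input_text,
        "chosen": chosen,
        "rejected": rejected,
    }


record = make_preference_record(
    "Explain what LoRA is in one sentence.",
    "LoRA fine-tunes a model by training small low-rank adapter matrices.",
    "It is a thing for models.",
    a_is_better=True,
)
print(record["chosen"])
```

Rejecting identical responses is a design choice of this sketch: a pair with no difference carries no preference signal.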

Setup and Installation

The GitHub repository supports easy installation using a setup.py and a requirements file. However, it is advised to use a clean Python environment when setting up the repository to avoid dependency and package clashes.

Although Python 3.8 is the minimum requirement, it is recommended to install Python 3.11 or above. Clone the repository from GitHub using the commands below:

git clone --depth 1 https://github.com/hiyouga/LLaMA-Factory.git

cd LLaMA-Factory

We can now create a fresh Python environment using the commands below:

python3.11 -m venv venv

source venv/bin/activate

Now, we need to install the required packages and dependencies using the setup.py file. We can install them using the command below:

pip install -e ".[torch,metrics]"

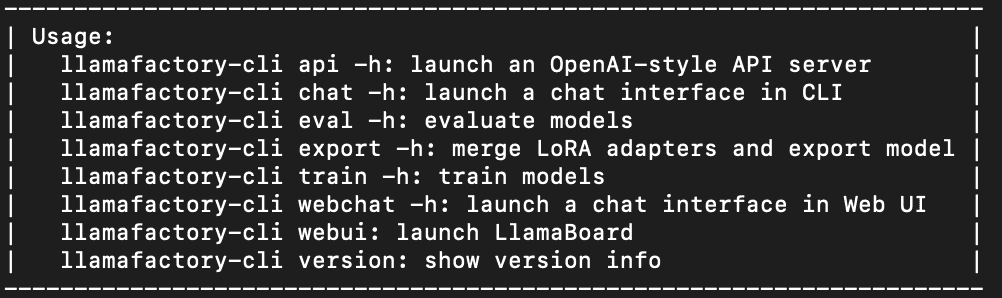

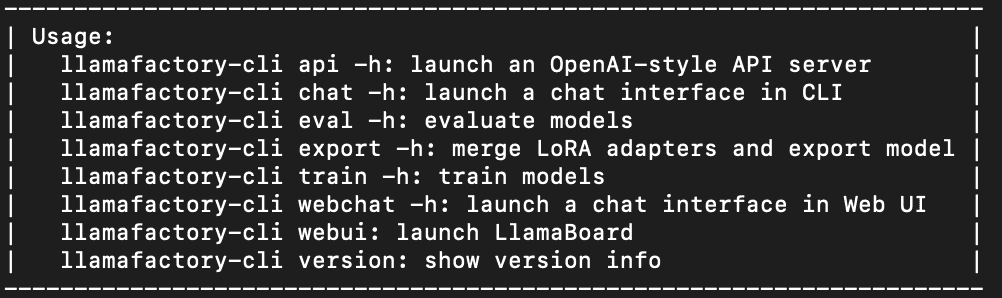

This will install all required dependencies including torch, trl, accelerate, and other packages. To ensure correct installation, we should now be able to use the command line interface for LLaMA-Factory. Running the command below should output the usage help information on the terminal as shown in the image.

This should be printed on the command line if the installation was successful.
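As an additional sanity check, one could confirm from Python that the llamafactory-cli executable is visible on the PATH of the active environment. This is only a sketch using the standard library; the helper name find_cli is hypothetical.

```python
import shutil


def find_cli(name="llamafactory-cli"):
    """Return the full path of the executable if it is on PATH, else None."""
    return shutil.which(name)


path = find_cli()
if path:
    print(f"Found CLI at: {path}")
else:
    print("llamafactory-cli not found on PATH; is the virtual environment active?")
```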

Finetuning LLMs

We can now start training an LLM! This is as easy as writing a configuration file and invoking a bash command.

Note that a GPU is required to train an LLM using LLaMA-Factory.

We choose a smaller model to save on GPU memory and training resources. In this example, we will perform LoRA-based SFT for Phi-3.5-mini-instruct. We choose to create a YAML configuration file, but you can use a JSON file as well.

Create a new config.yaml file as follows. This configuration file is for SFT training, and you can find more examples of various methods in the examples directory.

### model

model_name_or_path: microsoft/Phi-3.5-mini-instruct

### method

stage: sft

do_train: true

finetuning_type: lora

lora_target: all

### dataset

dataset: alpaca_en_demo

template: llama3

cutoff_len: 1024

max_samples: 1000

overwrite_cache: true

preprocessing_num_workers: 16

### output

output_dir: saves/phi-3/lora/sft

logging_steps: 10

save_steps: 500

plot_loss: true

overwrite_output_dir: true

### train

per_device_train_batch_size: 1

gradient_accumulation_steps: 8

learning_rate: 1.0e-4

num_train_epochs: 3.0

lr_scheduler_type: cosine

warmup_ratio: 0.1

bf16: true

ddp_timeout: 180000000

### eval

val_size: 0.1

per_device_eval_batch_size: 1

eval_strategy: steps

eval_steps: 500

Although the file is largely self-explanatory, we need to focus on two important parts of the configuration.

Configuring Dataset for Training

The name given for the dataset is a key parameter. Further details for the dataset need to be added to the dataset_info.json file in the data directory before training. This includes the path of the actual data file, the data format followed, and the columns to be used from the data.

For this tutorial, we use the alpaca_en_demo dataset, which contains questions and answers in English. The complete dataset is available in the repository's data folder.

The data will then be loaded automatically from the provided information. Moreover, the dataset key accepts a list of comma-separated values. Given a list, all the datasets will be loaded and used to train the LLM.
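The registration step described above can be sketched in Python as follows. The key names used here (file_name, and a columns mapping with prompt, query, response, system, and history) reflect my understanding of the repository's data documentation and should be treated as an assumption to verify against the dataset_info.json shipped with your checkout. The dataset name my_sft_data and its file name are hypothetical.

```python
import json


def make_alpaca_entry(file_name):
    """Build a dataset_info.json entry for an alpaca-style file (assumed schema)."""
    return {
        "file_name": file_name,
        "columns": {
            "prompt": "instruction",
            "query": "input",
            "response": "output",
            "system": "system",
            "history": "history",
        },
    }


def register_dataset(dataset_info, name, entry):
    """Return a copy of dataset_info with the new entry, refusing to overwrite."""
    if name in dataset_info:
        raise KeyError(f"dataset already registered: {name}")
    updated = dict(dataset_info)
    updated[name] = entry
    return updated


def dataset_argument(names):
    """Join several dataset names into the comma-separated value of the dataset key."""
    return ",".join(names)


# Stand-in for the contents of data/dataset_info.json (hypothetical).
existing = {"alpaca_en_demo": {"file_name": "alpaca_en_demo.json"}}
info = register_dataset(existing, "my_sft_data", make_alpaca_entry("my_sft_data.json"))

print(json.dumps(info["my_sft_data"], indent=2))
print(dataset_argument(["alpaca_en_demo", "my_sft_data"]))
```

In practice the updated dictionary would be written back to dataset_info.json, and the joined string would go into the dataset key of the training configuration.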

Configuring Model Training

Changing the training type in LLaMA-Factory is as easy as changing a configuration parameter. As shown below, we only need these parameters to set up LoRA-based SFT for the LLM.

### method

stage: sft

do_train: true

finetuning_type: lora

lora_target: all

We can replace SFT with pre-training or reward modeling; ready-made configuration files for each are available in the examples directory. For instance, you can switch from SFT to reward modeling by changing the parameters above.
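Since JSON configuration files are accepted as well, the switch between stages can be sketched as a small Python transformation of the SFT settings. The stage value rm and the dataset name dpo_en_demo are assumptions based on my reading of the repository's examples and should be checked there; the output path and the helper derive_config are hypothetical.

```python
import json

# A subset of the SFT settings from config.yaml, expressed as a dictionary.
SFT_CONFIG = {
    "model_name_or_path": "microsoft/Phi-3.5-mini-instruct",
    "stage": "sft",
    "do_train": True,
    "finetuning_type": "lora",
    "lora_target": "all",
    "dataset": "alpaca_en_demo",
    "template": "llama3",
    "output_dir": "saves/phi-3/lora/sft",
}


def derive_config(base, **overrides):
    """Copy the base config and apply overrides without mutating the original."""
    config = dict(base)
    config.update(overrides)
    return config


# Assumed values: "rm" as the reward modeling stage, "dpo_en_demo" as a preference dataset.
reward_config = derive_config(
    SFT_CONFIG,
    stage="rm",
    dataset="dpo_en_demo",
    output_dir="saves/phi-3/lora/reward",
)
print(json.dumps(reward_config, indent=2))
```

Note that reward modeling needs preference data with chosen and rejected responses, so the dataset has to change together with the stage.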

Start Training an LLM

Now we have everything set up. All that is left is to invoke a bash command, passing the configuration file as a command line argument.

Invoke the command below:

llamafactory-cli train config.yaml

The program will automatically set up all required datasets, models, and pipelines for the training. It took me 10 minutes to train one epoch on a Tesla T4 GPU. The output model is saved in the output_dir provided in config.yaml.
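To get a feel for how long a run will be, the number of optimizer steps implied by config.yaml can be estimated with simple arithmetic. The sketch below assumes a single GPU, that max_samples caps the data at 1,000 examples, and that val_size is held out before training; the trainer's exact rounding may differ slightly, so treat the figures as approximate. The function estimate_steps is a hypothetical helper.

```python
import math


def estimate_steps(num_samples, val_size, per_device_batch, grad_accum, epochs, num_gpus=1):
    """Estimate effective batch size, steps per epoch, and total optimizer steps."""
    train_samples = num_samples - int(num_samples * val_size)
    effective_batch = per_device_batch * grad_accum * num_gpus
    steps_per_epoch = math.ceil(train_samples / effective_batch)
    total_steps = math.ceil(steps_per_epoch * epochs)
    return effective_batch, steps_per_epoch, total_steps


# Values taken from config.yaml: max_samples, val_size, batch size,
# gradient accumulation steps, and number of epochs.
effective_batch, steps_per_epoch, total_steps = estimate_steps(1000, 0.1, 1, 8, 3.0)
print(effective_batch, steps_per_epoch, total_steps)  # 8 113 339
```

Under these assumptions the run has roughly 339 optimizer steps, which is below the save_steps and eval_steps value of 500. If intermediate checkpoints or evaluations are wanted on a run of this size, those two values would likely need to be lowered.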

Inference

Inference is even simpler than training a model. We need a configuration file, similar to the training one, that provides the base model and the path to the trained LoRA adapter.

Create a new infer_config.yaml file and provide values for the given keys:

model_name_or_path: microsoft/Phi-3.5-mini-instruct

adapter_name_or_path: saves/phi-3/lora/sft/ # Path to the trained adapter (the output_dir from config.yaml)

template: llama3

finetuning_type: lora

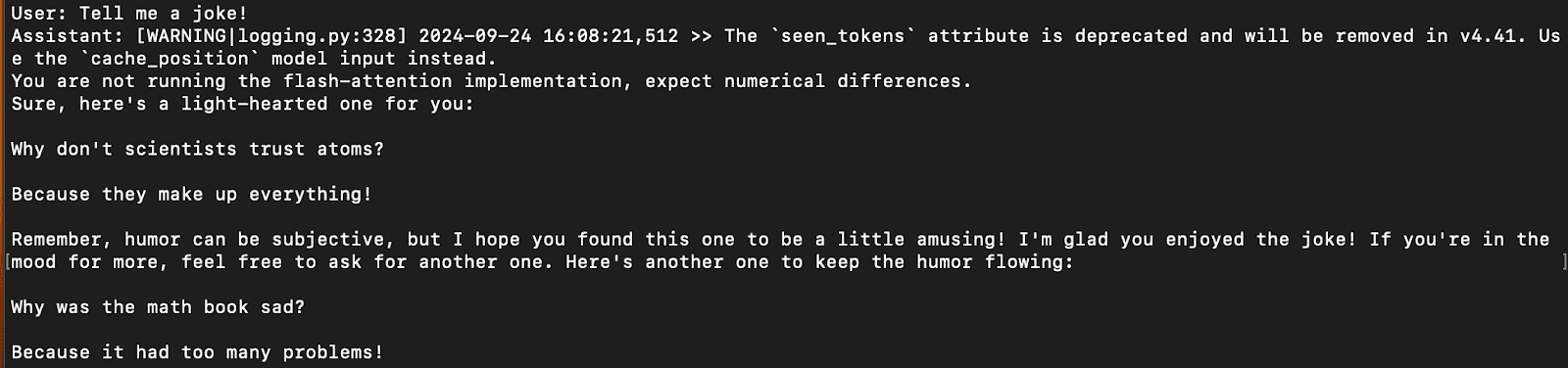

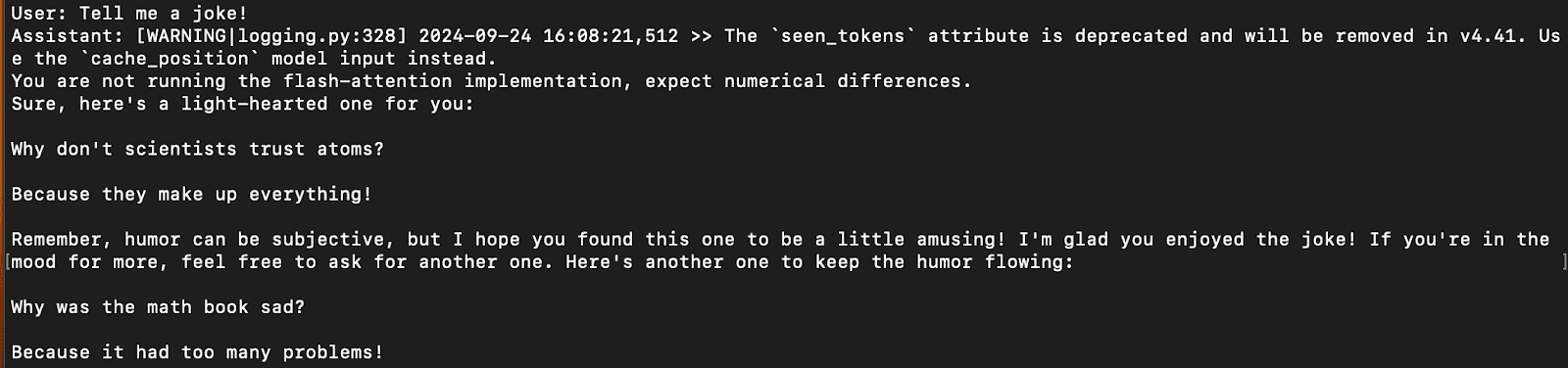

We can chat with the trained model directly on the command line with this command:

llamafactory-cli chat infer_config.yaml

This will load the model with the trained adapter, and you can easily chat using the command line, similar to other packages like Ollama.

A sample response on the terminal is shown in the image below:

Result of Inference

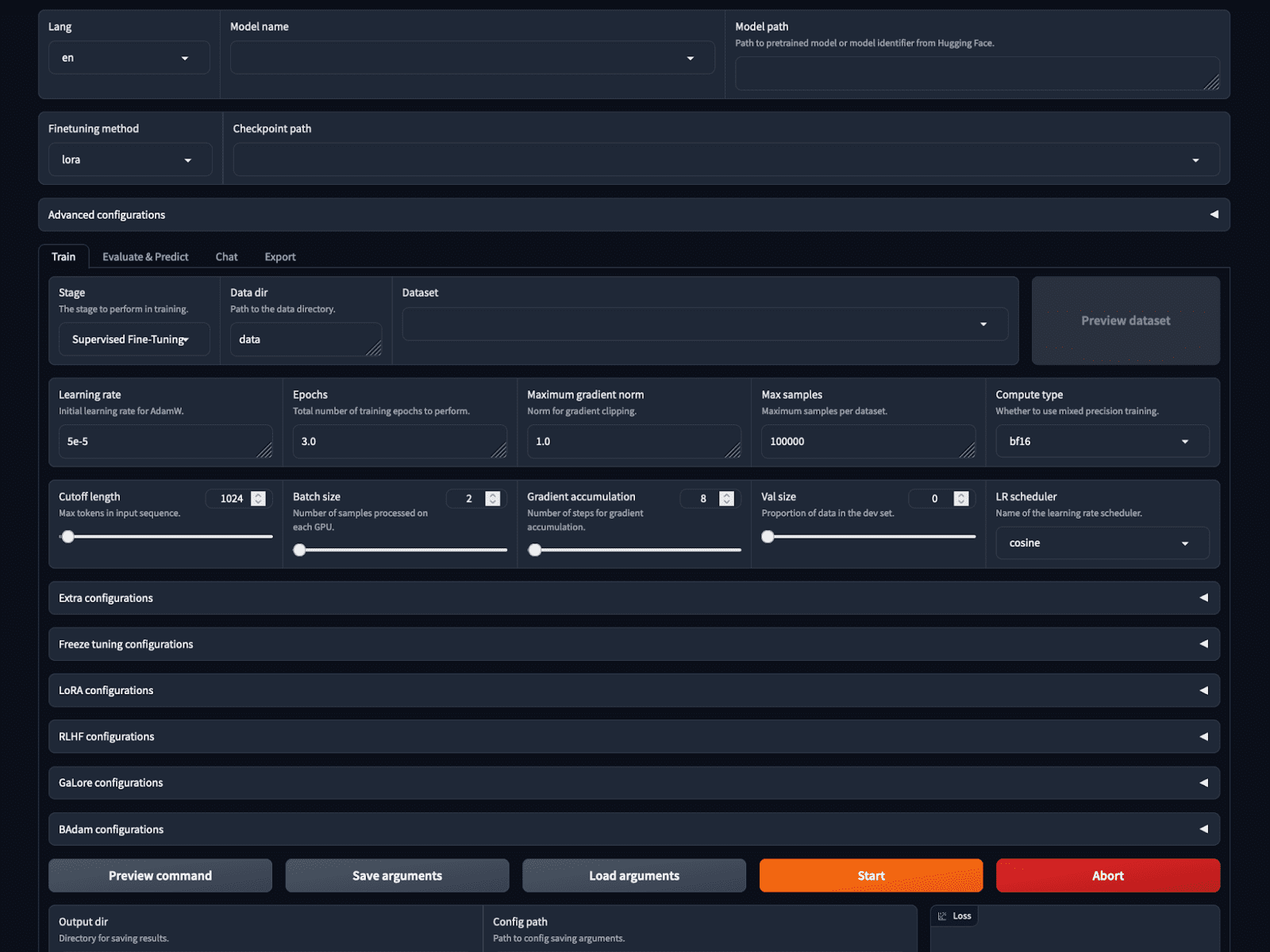

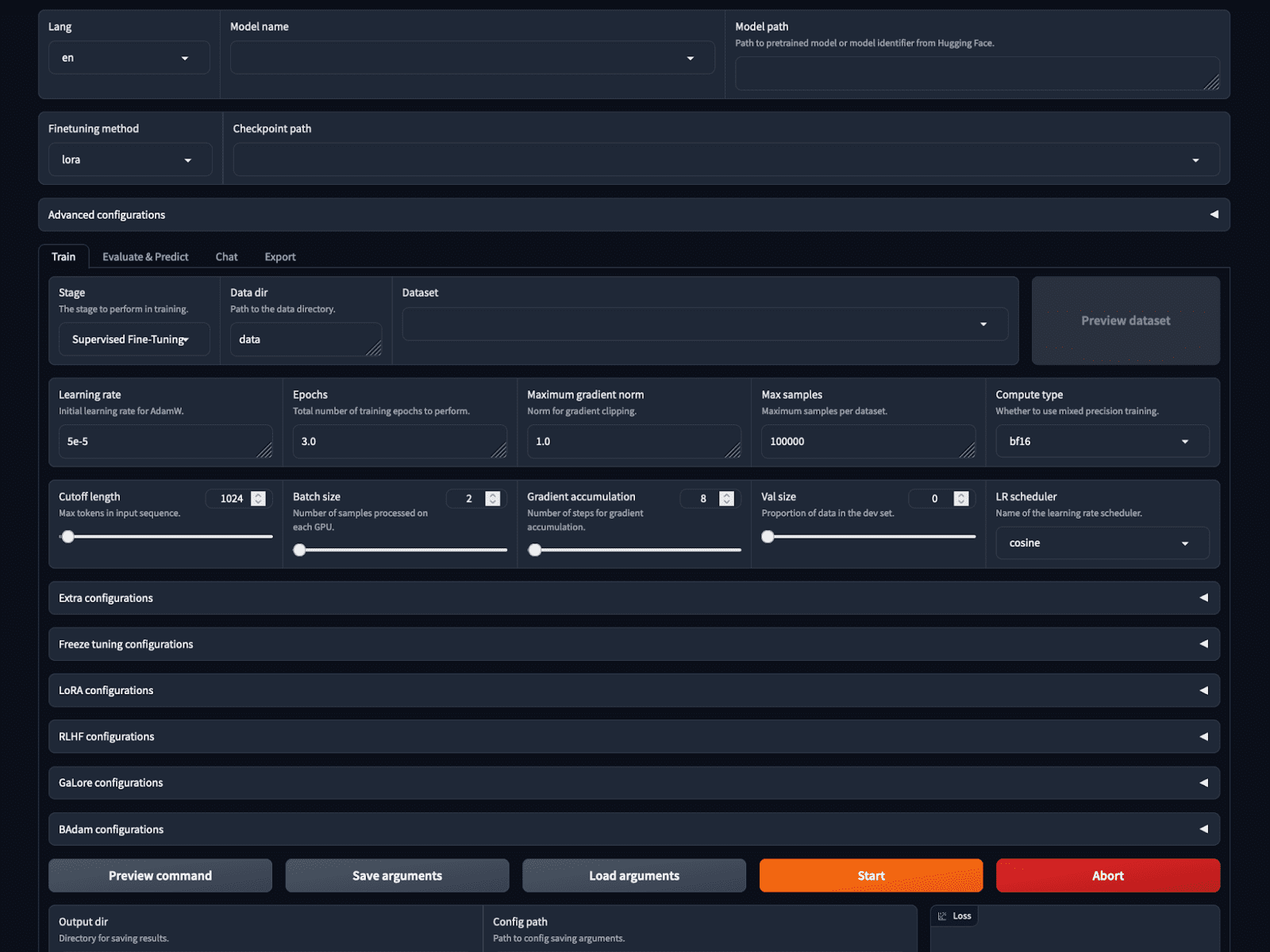

WebUI

If that was not simple enough, LLaMA-Factory provides a no-code training and inference option with the LlamaBoard.

You can start the GUI using the bash command:

This starts a web-based GUI on localhost as shown in the image. We can choose the model and training parameters, load and preview the dataset, set hyperparameters, and train and run inference, all in the GUI.

Screenshot of the LlamaBoard WebUI

Conclusion

LLaMA-Factory is rapidly becoming popular, with over 30 thousand stars on GitHub now. It makes it considerably simpler to configure and train an LLM, removing the need to manually set up the training pipeline for various methods.

It supports the latest methods and models, and it also claims to be 3.7 times faster than ChatGLM's P-Tuning while using less GPU memory. This makes it easier for regular users and enthusiasts to train their LLMs using minimal code.

Kanwal Mehreen is a machine learning engineer and a technical writer with a profound passion for data science and the intersection of AI with medicine. She co-authored the ebook "Maximizing Productivity with ChatGPT". As a Google Generation Scholar 2022 for APAC, she champions diversity and academic excellence. She is also recognized as a Teradata Diversity in Tech Scholar, Mitacs Globalink Research Scholar, and Harvard WeCode Scholar. Kanwal is an ardent advocate for change, having founded FEMCodes to empower women in STEM fields.
