As you may have heard, DeepSeek-R1 is making waves. It’s all over the AI newsfeed, hailed as the first open-source reasoning model of its kind.

The excitement? Well-deserved.

The model? Powerful.

DeepSeek-R1 represents the current frontier in open-source reasoning models. But here’s the part you won’t see in the headlines: working with it isn’t exactly straightforward.

Prototyping can be clunky. Deploying to production? Even trickier.

That’s where DataRobot comes in. We make it easier to develop with and deploy DeepSeek-R1, so you can spend less time wrestling with complexity and more time building real, enterprise-ready solutions.

Prototyping DeepSeek-R1 and bringing applications into production are critical to harnessing its full potential and delivering higher-quality generative AI experiences.

So, what exactly makes DeepSeek-R1 so compelling, and why is it sparking all this attention? Let’s take a closer look at whether the hype is justified.

Could this be the model that outperforms OpenAI’s latest and greatest?

Beyond the hype: Why DeepSeek-R1 is worth your attention

DeepSeek-R1 isn’t just another generative AI model. It’s arguably the first open-source “reasoning” model: a generative text model specifically reinforced to generate text that approximates its reasoning and decision-making processes.

For AI practitioners, that opens up new possibilities for applications that require structured, logic-driven outputs.

What also stands out is its efficiency. Training DeepSeek-R1 reportedly cost a fraction of what it took to develop models like GPT-4o, thanks to reinforcement learning techniques published by DeepSeek AI. And because it’s fully open-source, it offers greater flexibility while allowing you to maintain control over your data.

Of course, working with an open-source model like DeepSeek-R1 comes with its own set of challenges, from integration hurdles to performance variability. But understanding its potential is the first step to making it work effectively in real-world applications and delivering a more relevant and meaningful experience to end users.

Using DeepSeek-R1 in DataRobot

Of course, potential doesn’t always equal easy. That’s where DataRobot comes in.

With DataRobot, you can host DeepSeek-R1 using NVIDIA GPUs for high-performance inference or access it through serverless predictions for fast, flexible prototyping, experimentation, and deployment.

No matter where DeepSeek-R1 is hosted, you can integrate it seamlessly into your workflows.

In practice, this means you can:

- Compare performance across models without the hassle, using built-in benchmarking tools to see how DeepSeek-R1 stacks up against others.

- Deploy DeepSeek-R1 in production with confidence, supported by enterprise-grade security, observability, and governance features.

- Build AI applications that deliver relevant, reliable results, without getting bogged down by infrastructure complexity.

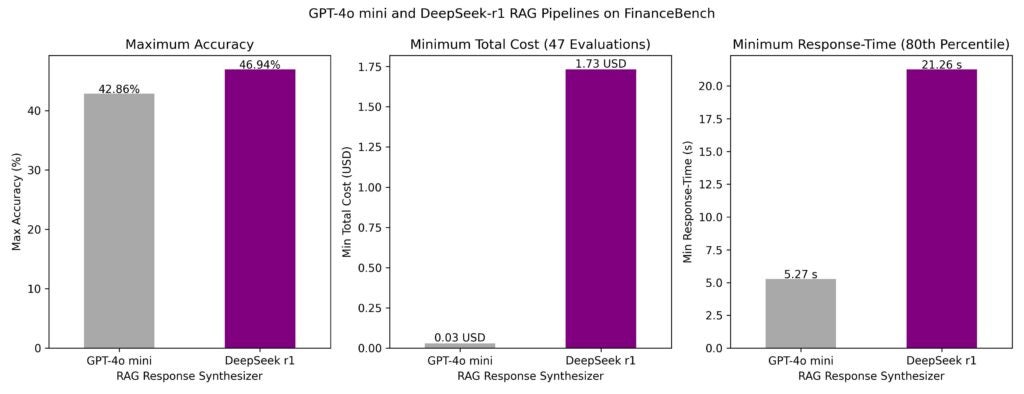

LLMs like DeepSeek-R1 are rarely used in isolation. In real-world production applications, they function as part of sophisticated workflows rather than standalone models. With this in mind, we evaluated DeepSeek-R1 within multiple retrieval-augmented generation (RAG) pipelines over the well-known FinanceBench dataset and compared its performance to GPT-4o mini.

So how does DeepSeek-R1 stack up in real-world AI workflows? Here’s what we found:

- Response time: Latency was notably lower for GPT-4o mini. The 80th percentile response time for the fastest pipelines was 5 seconds for GPT-4o mini and 21 seconds for DeepSeek-R1.

- Accuracy: The best generative AI pipeline using DeepSeek-R1 as the synthesizer LLM achieved 47% accuracy, outperforming the best pipeline using GPT-4o mini (43% accuracy).

- Cost: While DeepSeek-R1 delivered higher accuracy, its cost per call was significantly higher: about $1.73 per request compared to $0.03 for GPT-4o mini. Hosting choices impact these costs significantly.

While DeepSeek-R1 demonstrates impressive accuracy, its higher costs and slower response times may make GPT-4o mini the more efficient choice for many applications, especially when cost and latency are critical.
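To make the trade-off concrete, here is a minimal Python sketch that turns the reported figures into a cost per correct answer and a pair of ratios. The `PipelineResult` class and `cost_per_correct_answer` helper are illustrative names, not part of any DataRobot API, and the sketch assumes the accuracy, cost, and latency figures can be treated as describing one pipeline per model, which the numbers above do not guarantee.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineResult:
    """Hypothetical container for the figures quoted in the text."""
    name: str
    accuracy: float           # fraction of questions answered correctly
    cost_per_call_usd: float  # reported cost per request
    p80_latency_s: float      # reported 80th percentile response time


def cost_per_correct_answer(result: PipelineResult) -> float:
    """Expected spend to obtain one correct answer at this accuracy."""
    return result.cost_per_call_usd / result.accuracy


# Figures as reported above; pairing them per model is an assumption.
deepseek = PipelineResult("DeepSeek-R1", 0.47, 1.73, 21.0)
gpt4o_mini = PipelineResult("GPT-4o mini", 0.43, 0.03, 5.0)

for result in (deepseek, gpt4o_mini):
    print(f"{result.name}: ${cost_per_correct_answer(result):.2f} per correct answer")

cost_ratio = deepseek.cost_per_call_usd / gpt4o_mini.cost_per_call_usd
latency_ratio = deepseek.p80_latency_s / gpt4o_mini.p80_latency_s
print(f"Cost ratio: {cost_ratio:.1f}x, latency ratio: {latency_ratio:.1f}x")
```

On these numbers, a four-point accuracy gain comes with roughly 58 times the per-call cost and about four times the latency, which is why the right choice depends on how much each correct answer is worth in your application.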

This analysis highlights the importance of evaluating models not just in isolation but within end-to-end AI workflows.

Raw performance metrics alone don’t tell the full story. Evaluating models within sophisticated agentic and non-agentic RAG pipelines offers a clearer picture of their real-world viability.

Using DeepSeek-R1’s reasoning in agents

DeepSeek-R1’s strength isn’t just in generating responses; it’s in how it reasons through complex scenarios. This makes it particularly useful for agent-based systems that need to handle dynamic, multi-layered use cases.

For enterprises, this reasoning capability goes beyond simply answering questions. It can:

- Present a range of options rather than a single “best” response, helping users explore different outcomes.

- Proactively gather information ahead of user interactions, enabling more responsive, context-aware experiences.

Here’s an example:

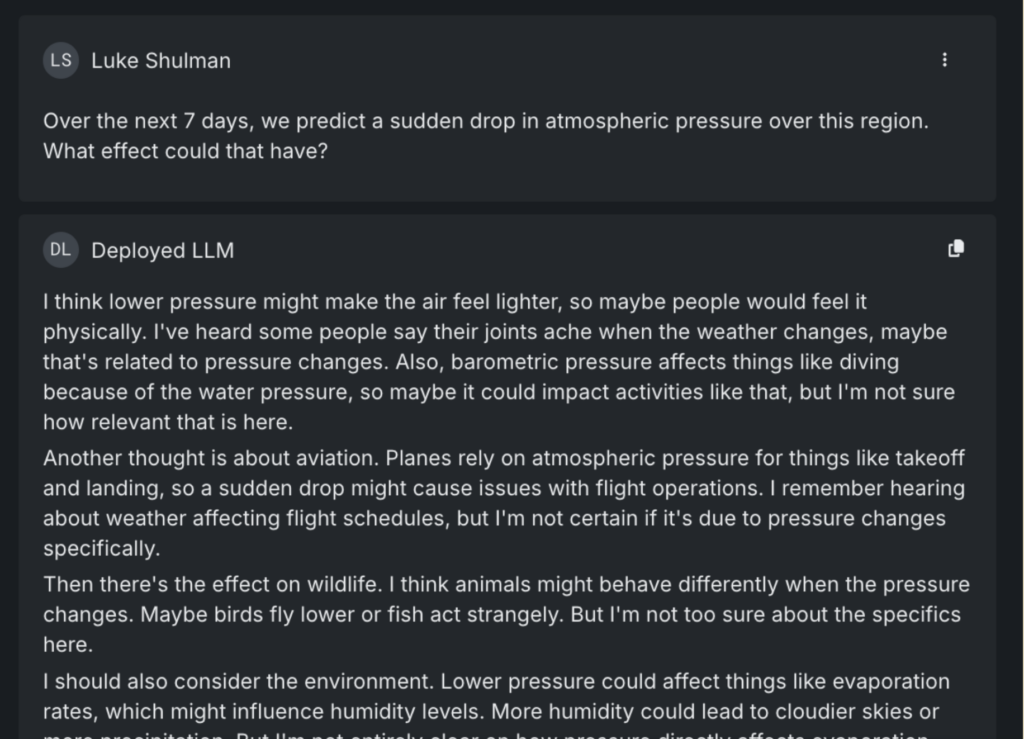

When asked about the effects of a sudden drop in atmospheric pressure, DeepSeek-R1 doesn’t just deliver a textbook answer. It identifies multiple ways the question could be interpreted, considering impacts on wildlife, aviation, and population health. It even notes less obvious consequences, like the potential for outdoor event cancellations due to storms.

In an agent-based system, this kind of reasoning can be applied to real-world scenarios, such as proactively checking for flight delays or upcoming events that might be disrupted by weather changes.

Interestingly, when the same question was posed to other leading LLMs, including Gemini and GPT-4o, none flagged event cancellations as a potential risk.

DeepSeek-R1 stands out in agent-driven applications for its ability to anticipate, not just react.

Comparing DeepSeek-R1 to GPT-4o-mini: What the data tells us

Too often, AI practitioners rely solely on an LLM’s answers to determine if it’s ready for deployment. If the responses sound convincing, it’s easy to assume the model is production-ready. But without deeper evaluation, that confidence can be misleading, as models that perform well in testing often struggle in real-world applications.

That’s why combining expert review with quantitative assessments is critical. It’s not just about what the model says, but how it gets there, and whether that reasoning holds up under scrutiny.

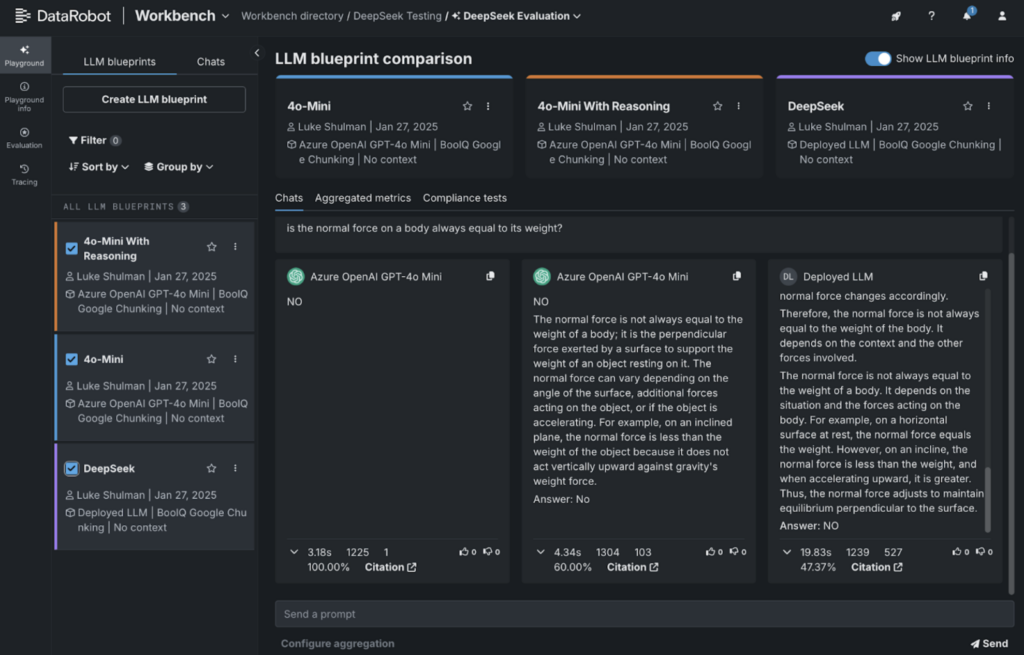

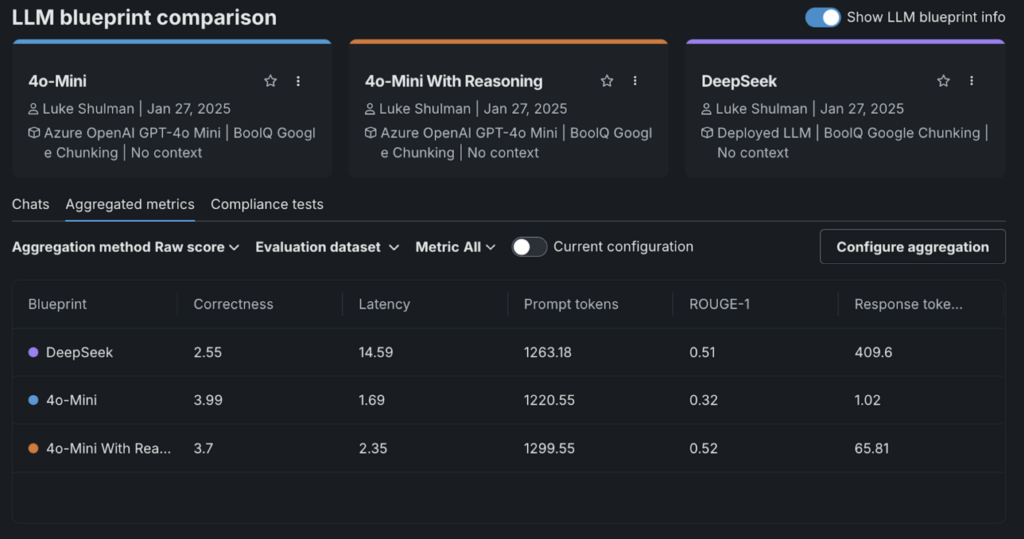

To illustrate this, we ran a quick evaluation using the Google BoolQ reading comprehension dataset. This dataset presents short passages followed by yes/no questions to test a model’s comprehension.

For GPT-4o-mini, we used the following system prompt:

Try to respond with a clear YES or NO. You may also say TRUE or FALSE but be clear in your response.

In addition to your answer, include your reasoning behind this answer. Enclose this reasoning with the tag

For example, if the user asks “What color is a can of coke” you’ll say:

Answer: Red
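Because the prompt allows YES, NO, TRUE, or FALSE alongside free-form reasoning, responses need to be normalized before they can be compared with BoolQ’s boolean labels. The sketch below is one simple way to do that with exact matching. It is not the LlamaIndex correctness evaluator used for the results in this post, and the helper names are hypothetical. It assumes DeepSeek-R1 wraps its reasoning in `<think>` tags and that a final verdict may follow an `Answer:` marker, as in the prompt example.

```python
import re
from typing import Iterable, Optional

# Assumption: DeepSeek-R1 style reasoning is wrapped in <think>...</think>.
_THINK_BLOCK = re.compile(r"<think>.*?</think>", re.DOTALL | re.IGNORECASE)
_VERDICT = re.compile(r"\b(yes|no|true|false)\b", re.IGNORECASE)


def extract_verdict(response: str) -> Optional[bool]:
    """Map a free-form response to True/False, or None if no verdict is found."""
    # Remove the reasoning block so words inside it are not read as the verdict.
    visible = _THINK_BLOCK.sub(" ", response)
    # If an "Answer:" marker is present, only look at what follows the last one.
    marker = visible.lower().rfind("answer:")
    if marker != -1:
        visible = visible[marker + len("answer:"):]
    match = _VERDICT.search(visible)
    if match is None:
        return None
    return match.group(1).lower() in {"yes", "true"}


def exact_match_accuracy(responses: Iterable[str], labels: Iterable[bool]) -> float:
    """Fraction of responses whose verdict equals the label; unclear counts as wrong."""
    pairs = list(zip(responses, labels))
    if not pairs:
        return 0.0
    correct = sum(1 for text, label in pairs if extract_verdict(text) == label)
    return correct / len(pairs)


sample_responses = [
    "<think>Could it be no? The passage suggests otherwise.</think>\nYES, it is.",
    "The passage says the law was repealed. Answer: False",
    "I cannot tell from the passage.",
]
sample_labels = [True, False, True]
print(exact_match_accuracy(sample_responses, sample_labels))
```

An exact-match check like this only scores the final verdict, whereas an LLM-based correctness evaluator also judges the surrounding text, so the two approaches can disagree on the same response.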

Here’s what we found:

- Right: DeepSeek-R1’s output.

- Far left: GPT-4o-mini answering with a simple Yes/No.

- Center: GPT-4o-mini with reasoning included.

We used DataRobot’s integration with LlamaIndex’s correctness evaluator to grade the responses. Interestingly, DeepSeek-R1 scored the lowest in this evaluation.

What stood out was how adding “reasoning” caused correctness scores to drop across the board.

This highlights an important takeaway: while DeepSeek-R1 performs well in some benchmarks, it may not always be the best fit for every use case. That’s why it’s critical to compare models side-by-side to find the right tool for the job.

Hosting DeepSeek-R1 in DataRobot: A step-by-step guide

Getting DeepSeek-R1 up and running doesn’t have to be complicated. Whether you’re working with one of the base models (over 600 billion parameters) or a distilled version fine-tuned on smaller models like LLaMA-70B or LLaMA-8B, the process is straightforward. You can host any of these variants on DataRobot with just a few setup steps.

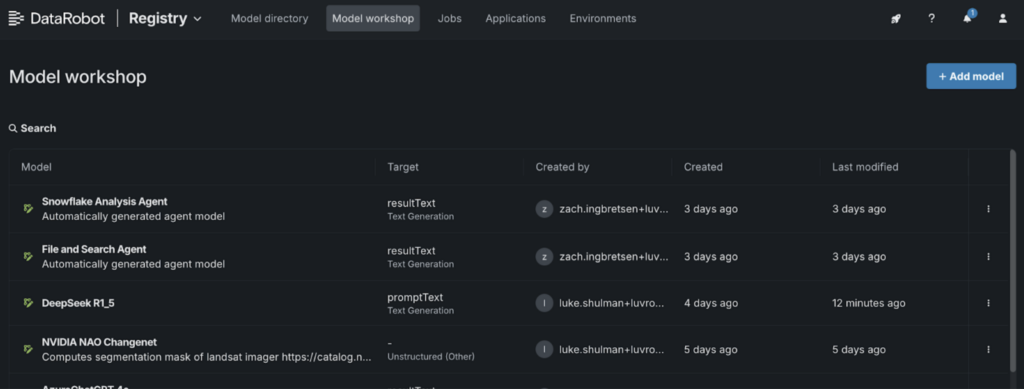

1. Go to the Model Workshop:

- Navigate to the “Registry” and select the “Model Workshop” tab.

2. Add a new model:

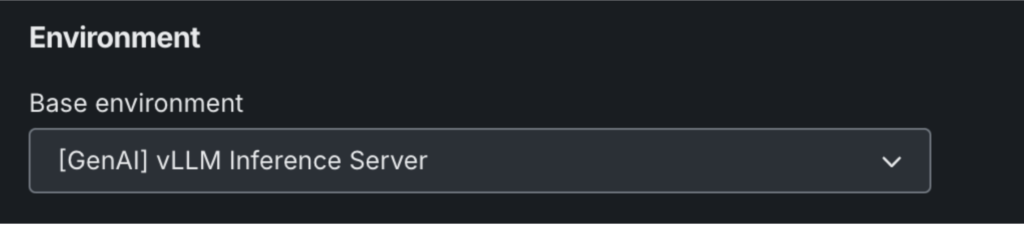

- Name your model and choose “[GenAI] vLLM Inference Server” under the environment settings.

- Click “+ Add Model” to open the Custom Model Workshop.

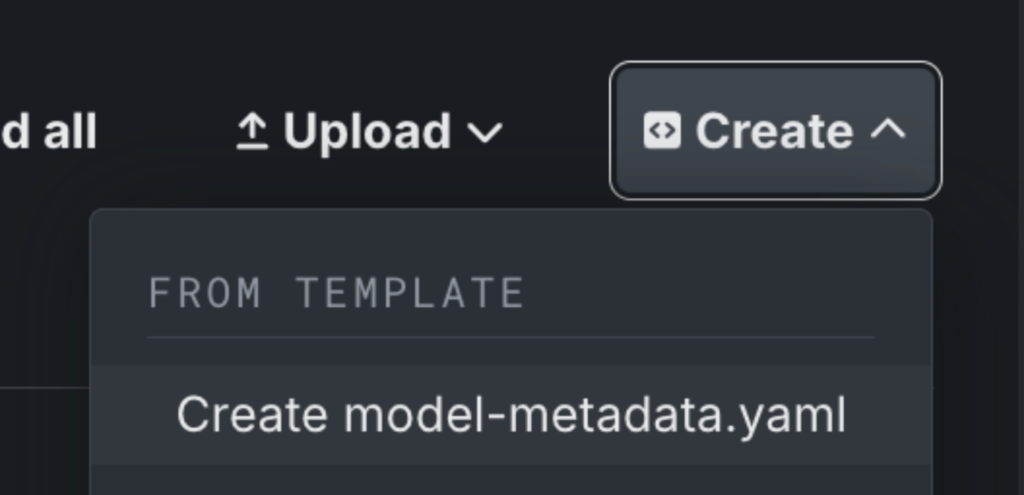

3. Set up your model metadata:

- Click “Create” to add a model-metadata.yaml file.

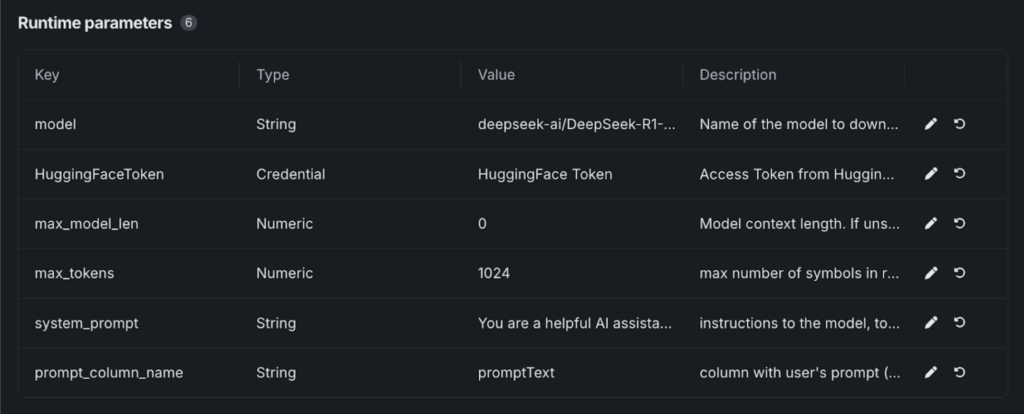

4. Edit the metadata file:

- Save the file, and “Runtime Parameters” will appear.

- Paste the required values from our GitHub template, which includes all the parameters needed to launch the model from Hugging Face.

5. Configure model details:

- Select your Hugging Face token from the DataRobot Credential Store.

- Under “model,” enter the variant you’re using. For example: deepseek-ai/DeepSeek-R1-Distill-Llama-8B.

6. Launch and deploy:

- Once saved, your DeepSeek-R1 model will be running.

- From here, you can test the model, deploy it to an endpoint, or integrate it into playgrounds and applications.
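Once the model is running, a first test usually means sending it a chat request. The sketch below only builds the request body and assumes the deployment accepts OpenAI-style chat completion payloads, which is the format vLLM’s server uses. The `build_chat_request` helper is a hypothetical name, and the endpoint URL and authentication headers are left out because they depend on your DataRobot deployment and are not specified in this post.

```python
import json
from typing import Dict, List


def build_chat_request(
    model: str,
    question: str,
    system_prompt: str = "",
    max_tokens: int = 1024,
) -> Dict[str, object]:
    """Build an OpenAI-style chat completion payload (assumed request format)."""
    messages: List[Dict[str, str]] = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": question})
    return {"model": model, "messages": messages, "max_tokens": max_tokens}


# The model id matches the variant entered under "model" in step 5.
payload = build_chat_request(
    model="deepseek-ai/DeepSeek-R1-Distill-Llama-8B",
    question="Is water wet? Respond with a clear YES or NO.",
)
body = json.dumps(payload)  # send this as the JSON body of the POST request
print(body)
```

Keeping payload construction separate from the HTTP call makes it easy to reuse the same request against different hosted variants when comparing them side by side.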

From DeepSeek-R1 to enterprise-ready AI

Accessing cutting-edge generative AI tools is just the start. The real challenge is evaluating which models fit your specific use case, and safely bringing them into production to deliver real value to your end users.

DeepSeek-R1 is just one example of what’s achievable when you have the flexibility to work across models, compare their performance, and deploy them with confidence.

The same tools and processes that simplify working with DeepSeek can help you get the most out of other models and power AI applications that deliver real impact.

See how DeepSeek-R1 compares to other AI models and deploy it in production with a free trial.

About the author

Nathaniel Daly is a Senior Product Manager at DataRobot focusing on AutoML and time series products. He’s focused on bringing advances in data science to users so that they can leverage this value to solve real-world business problems. He holds a degree in Mathematics from the University of California, Berkeley.