Cloudera is launching and expanding partnerships to create a new enterprise artificial intelligence (“AI”) ecosystem. Businesses increasingly recognize AI solutions as critical differentiators in competitive markets and are ready to invest heavily to streamline their operations, improve customer experiences, and boost top-line growth. That’s why we’re building an ecosystem of technology providers to make it easier, more economical, and safer for our customers to maximize the value they get from AI.

At our recent Evolve Conference in New York we were extremely excited to announce our founding AI ecosystem partners: Amazon Web Services (“AWS”), NVIDIA, and Pinecone.

In addition to these founding partners we’re also building tight integrations with our ecosystem accelerators: Hugging Face, the leading AI community and model hub, and Ray, the best-in-class compute framework for AI workloads.

In this post we’ll give you an overview of these new and expanded partnerships and how we see them fitting into the emerging AI technology stack that supports the AI application lifecycle.

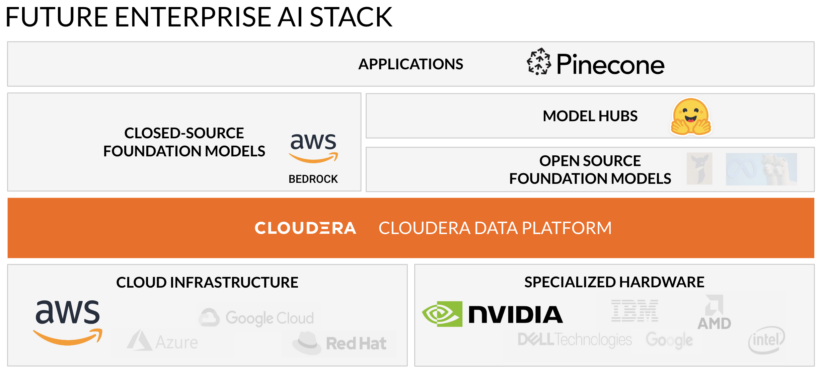

We’ll start with the enterprise AI stack. We see AI applications like chatbots being built on top of closed-source or open-source foundational models. Those models are trained or augmented with data from a data management platform. The data management platform, models, and end applications are powered by cloud infrastructure and/or specialized hardware. In a stack that includes Cloudera Data Platform, the applications and underlying models can also be deployed from the data management platform via Cloudera Machine Learning.

Here’s the future enterprise AI stack with our founding ecosystem partners and accelerators highlighted:

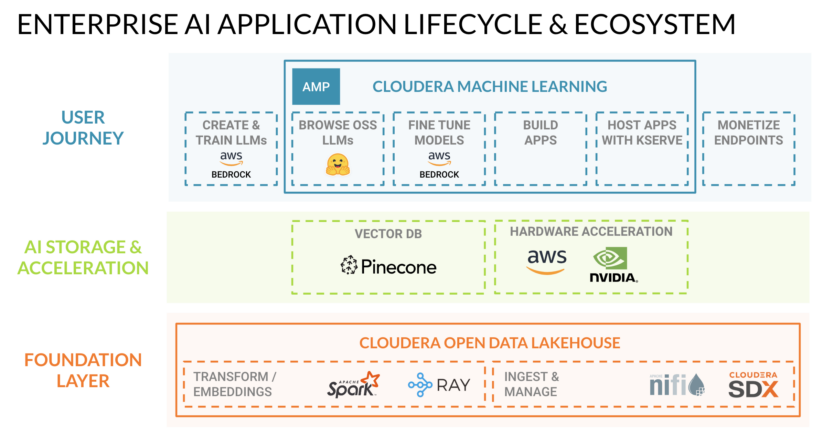

This is how we view that same stack supporting the enterprise AI application lifecycle:

Let’s use a simple example to explain how this ecosystem enables the AI application lifecycle:

- A company wants to deploy a support chatbot to decrease operational costs and improve customer experiences.

- They can select the best foundational LLM for the job from Amazon Bedrock (accessed via API call) or Hugging Face (accessed via download) using Cloudera Machine Learning (“CML”).

- Then they can build the application on CML using frameworks like Flask.

- They can improve the accuracy of the chatbot’s responses by checking each question against embeddings stored in Pinecone’s vector database and then enriching the question with data from Cloudera Open Data Lakehouse (more on how this works below).

- Finally they can deploy the application using CML’s containerized compute sessions powered by NVIDIA GPUs or AWS Inferentia, specialized hardware that improves inference performance while reducing costs.
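The retrieval step in the example above can be sketched in a few lines of Python. This is a minimal illustration only, not Pinecone’s or Cloudera’s API: `embed`, `ToyVectorIndex`, and `build_prompt` are hypothetical names, the “embedding” is a plain word count rather than a learned model, and the index lives in memory. It shows the general pattern of matching a question against stored embeddings and prepending the best match as context before the question goes to an LLM.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding (assumption): lowercase word counts.
    A real system would call an embedding model instead."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class ToyVectorIndex:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self):
        self._items = []  # list of (doc_id, text, vector)

    def upsert(self, doc_id, text):
        self._items.append((doc_id, text, embed(text)))

    def query(self, question, top_k=1):
        """Return the top_k (doc_id, text) pairs most similar to the question."""
        q = embed(question)
        ranked = sorted(self._items, key=lambda it: cosine(q, it[2]), reverse=True)
        return [(doc_id, text) for doc_id, text, _ in ranked[:top_k]]


def build_prompt(question, index, top_k=1):
    """Prepend the retrieved knowledge-base text to the user's question."""
    context = "\n".join(text for _, text in index.query(question, top_k))
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"


index = ToyVectorIndex()
index.upsert("kb-1", "To reset your password open Settings and choose Reset password")
index.upsert("kb-2", "Invoices are emailed on the first day of each month")

prompt = build_prompt("How do I reset my password", index)
print(prompt)
```

In a real deployment the word-count embedding would be replaced by an embedding model and the in-memory list by a managed vector database; the prompt-assembly step stays essentially the same.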

Read on to learn more about how each of our founding partners and accelerators is collaborating with Cloudera to make it easier, more economical, and safer for our customers to maximize the value they get from AI.

Founding AI ecosystem partners | NVIDIA, AWS, Pinecone

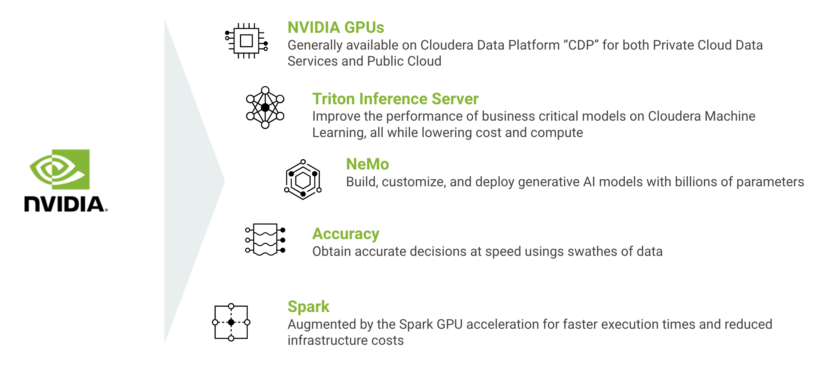

NVIDIA | Specialized Hardware

Highlights:

Today, NVIDIA GPUs are already available in Cloudera Data Platform (CDP), allowing Cloudera customers to get eight times the performance on data engineering workloads at less than 50 percent incremental cost relative to modern CPU-only alternatives. This new phase in technology collaboration builds on that success by adding key capabilities across the AI application lifecycle in these areas:

- Accelerate AI and machine learning workloads in Cloudera on Public Cloud and on-premises using NVIDIA GPUs

- Accelerate data pipelines with GPUs in Cloudera Private Cloud

- Deploy AI models in CML using NVIDIA Triton Inference Server

- Accelerate generative AI models in CML using NVIDIA NeMo

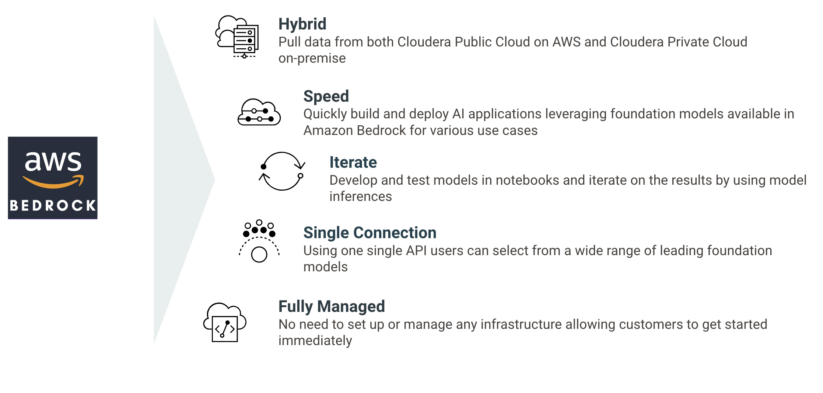

Amazon Bedrock | Closed-Source Foundational Models

Highlights:

We’re building generative AI capabilities in Cloudera, using the power of Amazon Bedrock, a fully managed serverless service. Customers can quickly and easily build generative AI applications using these new features available in Cloudera.

With the general availability of Amazon Bedrock, Cloudera is releasing its latest Applied ML Prototype (AMP) built in Cloudera Machine Learning: the CML Text Summarization AMP built using Amazon Bedrock. Using this AMP, customers can use foundation models available in Amazon Bedrock for text summarization of data managed both in Cloudera Public Cloud on AWS and Cloudera Private Cloud on-premises. More information can be found in our blog post here.

Cloudera is working on integrations of AWS Inferentia and AWS Trainium-powered Amazon EC2 instances into the Cloudera Machine Learning service (“CML”). This will give CML customers the ability to spin up isolated compute sessions using these powerful and efficient accelerators purpose-built for AI workloads. More information can be found in our blog post here.

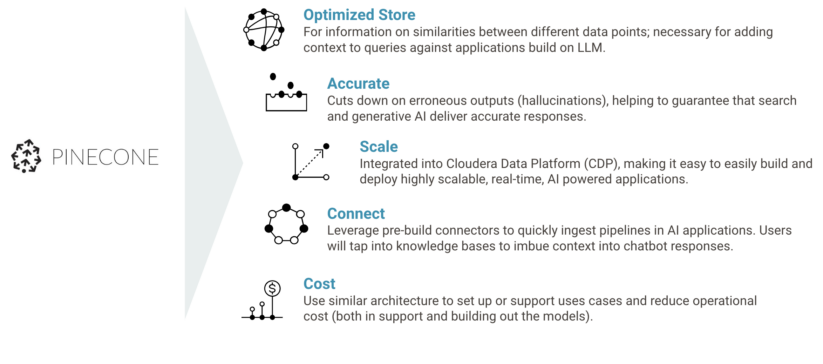

Highlights:

The partnership will see Cloudera integrate Pinecone’s best-in-class vector database into Cloudera Data Platform (CDP), enabling organizations to easily build and deploy highly scalable, real-time, AI-powered applications on Cloudera.

This includes the release of a new Applied ML Prototype (AMP) that will allow developers to quickly create and augment new knowledge bases from data on their own website, as well as pre-built connectors that will enable customers to quickly set up ingest pipelines in AI applications.

In the AMP, Pinecone’s vector database uses these knowledge bases to imbue context into chatbot responses, ensuring useful outputs. More information on this AMP and how vector databases add context to AI applications can be found in our blog post here.

AI ecosystem accelerators | Hugging Face, Ray

Highlights:

Cloudera is integrating Hugging Face’s market-leading range of LLMs, generative AI, and traditional pre-trained machine learning models and datasets into Cloudera Data Platform so customers can significantly reduce time-to-value in deploying AI applications. Cloudera and Hugging Face plan to do this with three key integrations:

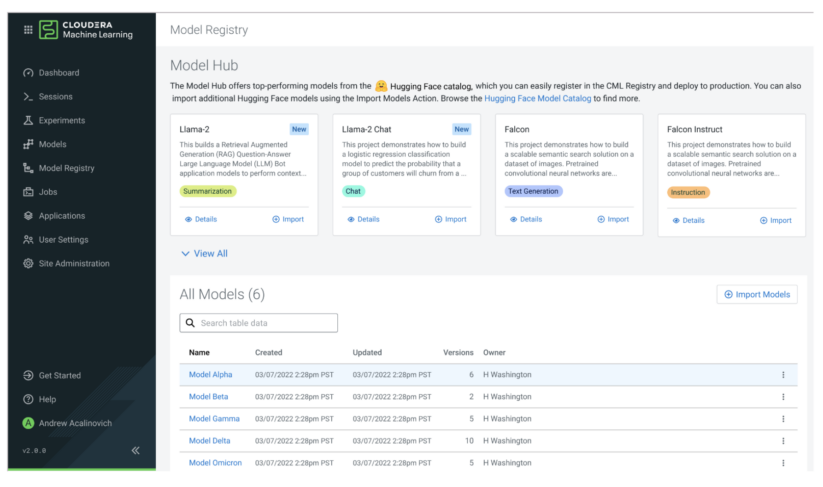

Hugging Face Models Integration: Import and deploy any of Hugging Face’s models from Cloudera Machine Learning (CML) with a single click.

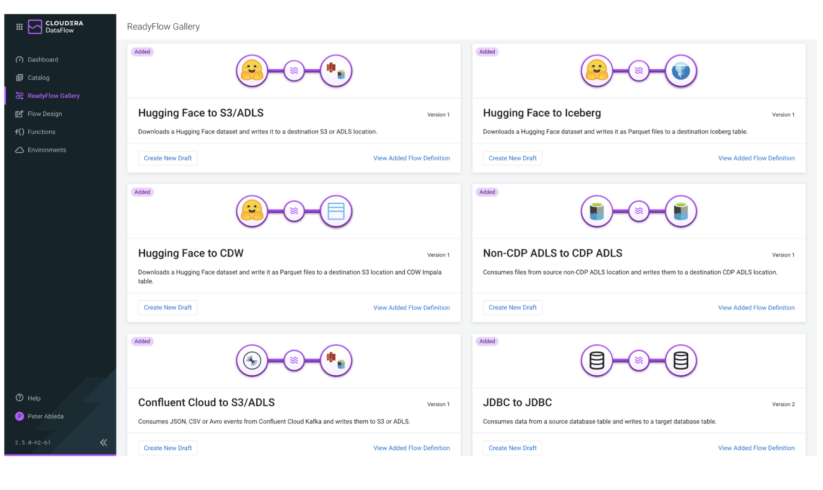

Hugging Face Datasets Integration: Import any of Hugging Face’s datasets via pre-built Cloudera Data Flow ReadyFlows into Iceberg tables in Cloudera Data Warehouse (CDW) with a single click.

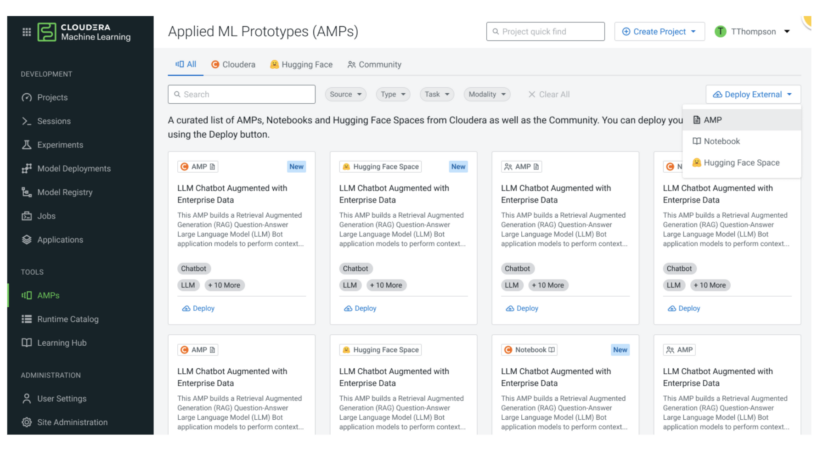

Hugging Face Spaces Integration: Import and deploy any of Hugging Face’s Spaces (pre-built web applications for small-scale ML demos) via Cloudera Machine Learning with a single click. These will complement CML’s already robust catalog of Applied Machine Learning Prototypes (AMPs) that allow developers to quickly launch pre-built AI applications, including an LLM Chatbot developed using an LLM from Hugging Face.

Ray | Distributed Compute Framework

Lost in the talk about OpenAI is the tremendous amount of compute needed to train and fine-tune LLMs, like GPT, and generative AI, like ChatGPT. Each iteration requires more compute, and the limitation imposed by Moore’s Law quickly moves that task from single compute instances to distributed compute. To accomplish this, OpenAI has employed Ray to power the distributed compute platform that trains each release of the GPT models. Ray has emerged as a popular framework because of its superior performance over Apache Spark for distributed AI compute workloads.

Ray can be used in Cloudera Machine Learning’s open-by-design architecture to bring fast distributed AI compute to CDP. This is enabled by a Ray module in the CML extensions Python package published by our team. More information about Ray and how to deploy it in Cloudera Machine Learning can be found in our blog post here.
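To make the move from a single compute instance to distributed compute more concrete, here is a small sketch of the data-parallel pattern that frameworks like Ray scale across machines. It deliberately does not use Ray’s API: a standard-library thread pool stands in for a cluster of workers, and every function name below is hypothetical. Each worker computes a partial gradient on its own shard of the data, and the partial results are combined into one update.

```python
from concurrent.futures import ThreadPoolExecutor


def shard_gradient(w, shard):
    """Sum of d/dw (w*x - y)^2 over one shard of (x, y) pairs."""
    return sum(2.0 * (w * x - y) * x for x, y in shard)


def split(data, n_shards):
    """Deal rows round-robin into n_shards lists."""
    return [data[i::n_shards] for i in range(n_shards)]


def parallel_gradient(w, data, n_workers=4):
    """Mean gradient over all rows, computed shard by shard.
    The thread pool is a stand-in for remote workers in a cluster."""
    shards = split(data, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(lambda s: shard_gradient(w, s), shards))
    return sum(partials) / len(data)


def fit(data, steps=200, lr=0.01, n_workers=4):
    """Plain gradient descent on the one-parameter model y = w * x."""
    w = 0.0
    for _ in range(steps):
        w -= lr * parallel_gradient(w, data, n_workers)
    return w


# Synthetic data generated from y = 3x, so the fitted weight should approach 3.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
w = fit(data)
print(round(w, 4))
```

With a distributed framework, the shard-level function would run as a remote task on separate machines and the driver would gather the partial results; the split, compute, and combine structure is the same.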