Recent progress in AI largely boils down to one thing: Scale.

Around the beginning of this decade, AI labs noticed that making their algorithms, or models, ever bigger and feeding them more data consistently led to enormous improvements in what they could do and how well they did it. The latest crop of AI models have hundreds of billions to over a trillion internal network connections and learn to write or code like we do by consuming a healthy fraction of the internet.

It takes more computing power to train bigger algorithms. So, to get to this point, the computing dedicated to AI training has been quadrupling every year, according to the nonprofit AI research organization Epoch AI.

Should that growth continue through 2030, future AI models would be trained with 10,000 times more compute than today’s state-of-the-art algorithms, like OpenAI’s GPT-4.
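The 10,000x figure is a matter of compounding. As a rough check, and assuming compute grows by exactly 4x every year (a simplification of the trend cited above; the function names are illustrative), a short Python sketch shows how many years of growth such a scale-up implies:

```python
import math

def years_to_scale(target_multiple: float, annual_growth: float = 4.0) -> float:
    """Years of compounding at `annual_growth` needed to reach `target_multiple`."""
    return math.log(target_multiple) / math.log(annual_growth)

def scale_after(years: float, annual_growth: float = 4.0) -> float:
    """Total scale-up after `years` of compounding at `annual_growth`."""
    return annual_growth ** years

print(round(years_to_scale(10_000), 2))  # 6.64
print(scale_after(6), scale_after(7))    # 4096.0 16384.0
```

Six years of quadrupling gives about 4,000x and seven gives about 16,000x, so a 10,000x scale-up by 2030 is in line with the trend simply continuing.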

“If pursued, we might see by the end of the decade advances in AI as drastic as the difference between the rudimentary text generation of GPT-2 in 2019 and the sophisticated problem-solving abilities of GPT-4 in 2023,” Epoch wrote in a recent research report examining how feasible this scenario is.

But modern AI already sucks in a significant amount of power, tens of thousands of advanced chips, and trillions of online examples. Meanwhile, the industry has endured chip shortages, and studies suggest it may run out of quality training data. Assuming companies continue to invest in AI scaling: Is growth at this rate even technically possible?

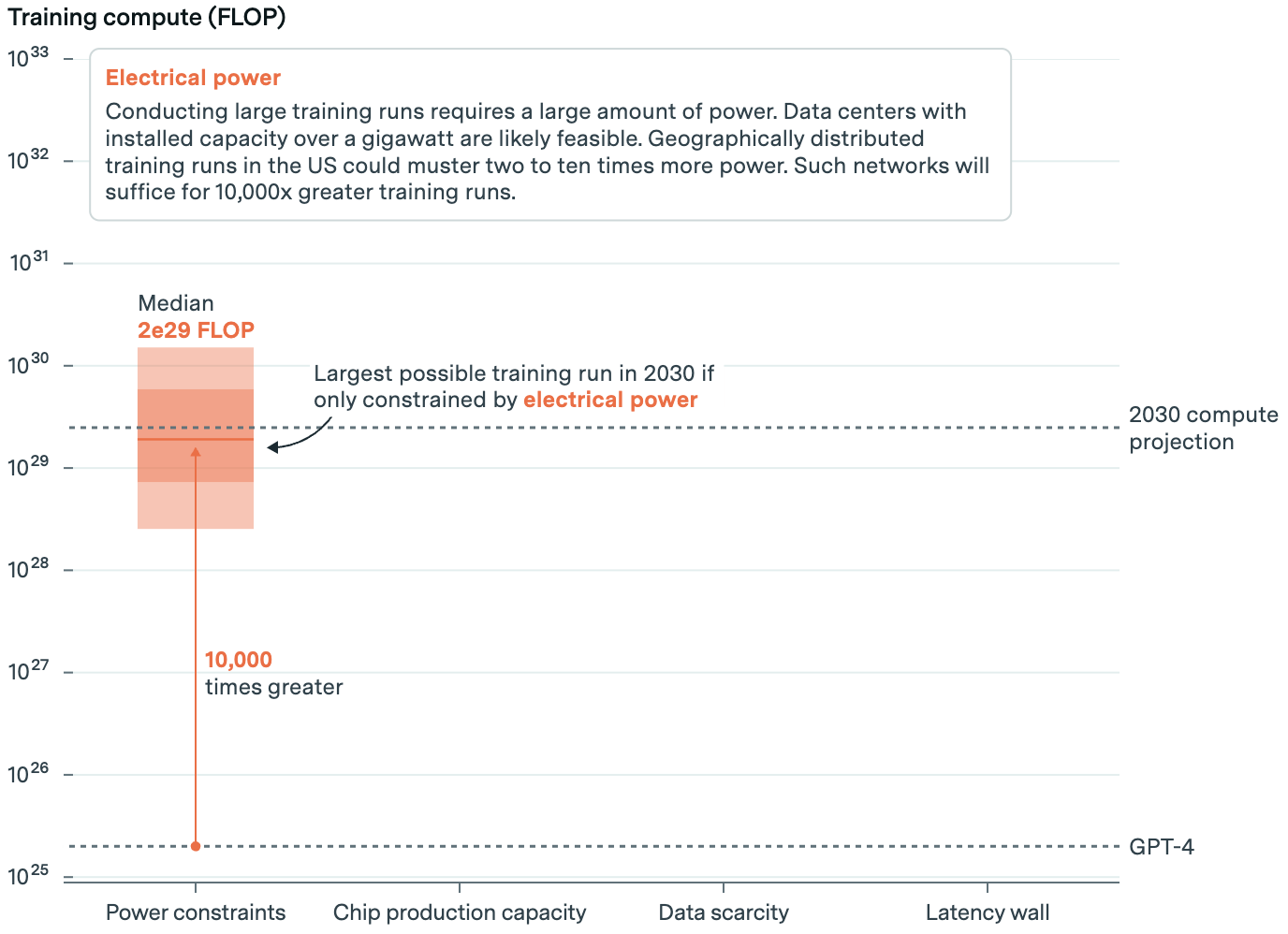

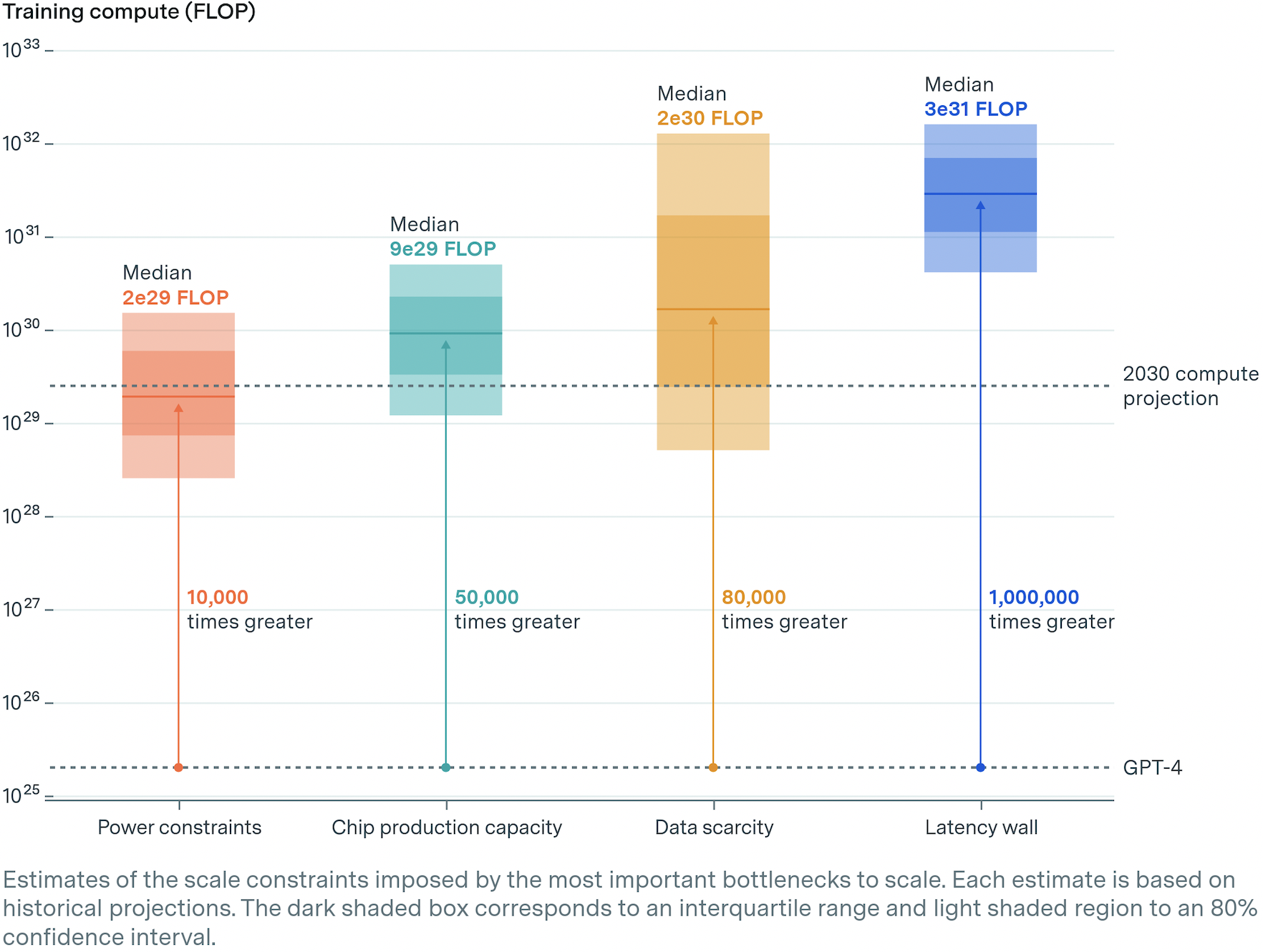

In its report, Epoch looked at four of the biggest constraints to AI scaling: Power, chips, data, and latency. TLDR: Maintaining growth is technically possible, but not certain. Here’s why.

Power: We’ll Need a Lot

Power is the biggest constraint to AI scaling. Warehouses packed with advanced chips and the gear to make them run (data centers, in other words) are power hogs. Meta’s latest frontier model was trained on 16,000 of Nvidia’s most powerful chips drawing 27 megawatts of electricity.

This, according to Epoch, is equal to the annual power consumption of 23,000 US households. But even with efficiency gains, training a frontier AI model in 2030 would need 200 times more power, or roughly 6 gigawatts. That’s 30 percent of the power consumed by all data centers today.
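The power figures can be sanity-checked with simple arithmetic. The sketch below uses only the numbers quoted in this section; the variable names are illustrative, and the per-chip figure is a naive division that folds in cooling and other data center overhead rather than a chip specification.

```python
META_RUN_MW = 27.0         # power drawn by Meta's training run, as cited above
META_RUN_CHIPS = 16_000    # chips used in that run, as cited above
POWER_MULTIPLE_2030 = 200  # projected increase in power for a 2030 frontier run

# Average draw per chip in watts, overhead included (naive division).
per_chip_w = META_RUN_MW * 1_000_000 / META_RUN_CHIPS

# Power for a 2030 run if today's 27 MW is scaled by 200x, in gigawatts.
run_2030_gw = META_RUN_MW * POWER_MULTIPLE_2030 / 1_000

# If roughly 6 GW is 30 percent of all data center power today,
# the implied total is 6 / 0.30 gigawatts.
implied_total_gw = 6.0 / 0.30

print(per_chip_w)                  # 1687.5
print(run_2030_gw)                 # 5.4
print(round(implied_total_gw, 1))  # 20.0
```

The 200x multiple gives 5.4 gigawatts, which the report's summary rounds to roughly 6, and the 30 percent comparison implies about 20 gigawatts for all data centers today.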

There are few power plants that can muster that much, and most are likely under long-term contract. But that’s assuming one power station would electrify a data center. Epoch suggests companies will seek out areas where they can draw from multiple power plants via the local grid. Accounting for planned utilities growth, going this route is tight but possible.

To better break the bottleneck, companies may instead distribute training between several data centers. Here, they would split batches of training data between a number of geographically separate data centers, lessening the power requirements of any one. The strategy would require lightning-quick, high-bandwidth fiber connections. But it’s technically doable, and Google Gemini Ultra’s training run is an early example.

All told, Epoch suggests a range of possibilities from 1 gigawatt (local power sources) all the way up to 45 gigawatts (distributed power sources). The more power companies tap, the larger the models they can train. Given power constraints, a model could be trained using about 10,000 times more computing power than GPT-4.

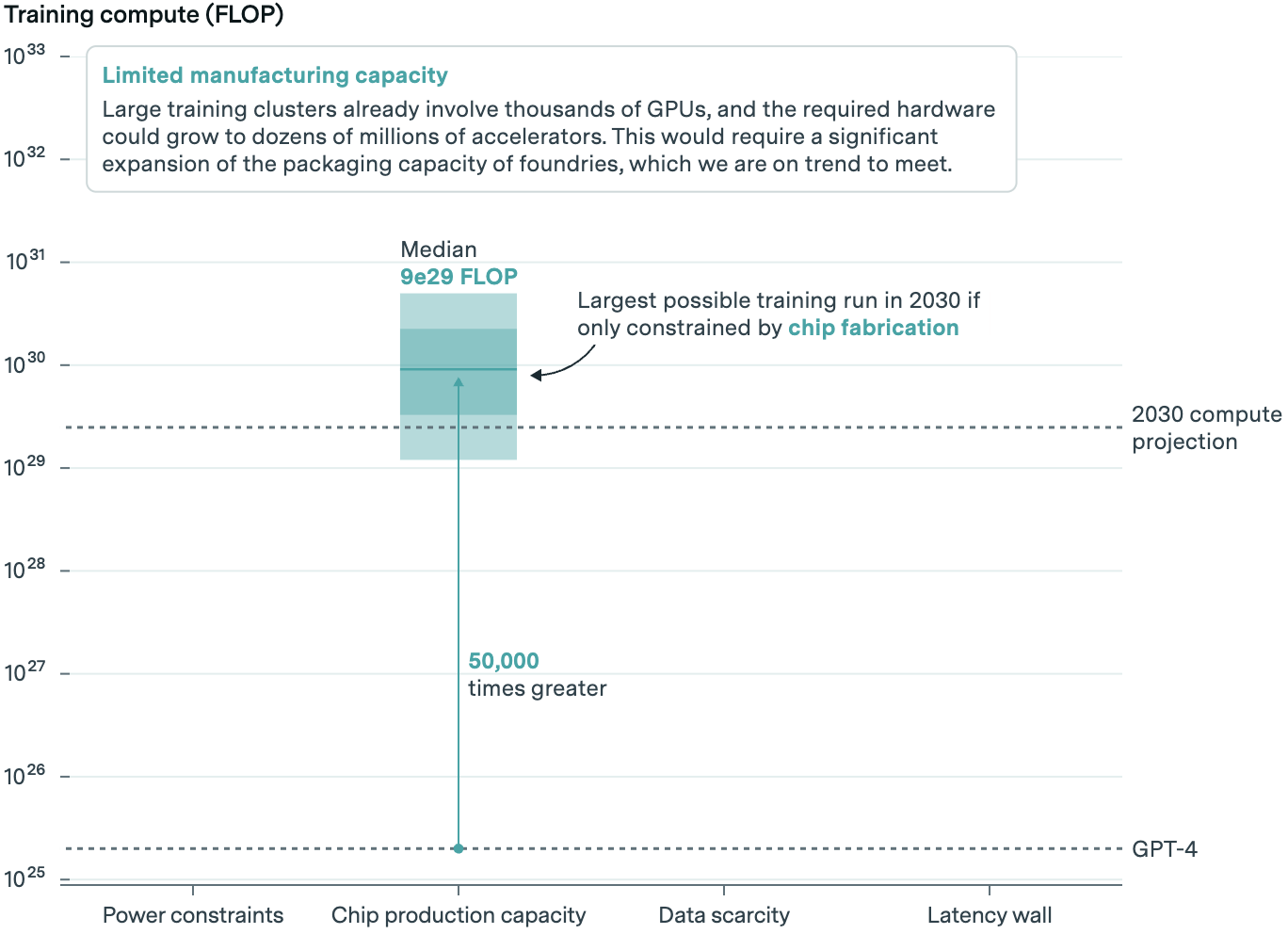

Chips: Does It Compute?

All that power is used to run AI chips. Some of these serve up completed AI models to customers; some train the next crop of models. Epoch took a close look at the latter.

AI labs train new models using graphics processing units, or GPUs, and Nvidia is top dog in GPUs. TSMC manufactures these chips and sandwiches them together with high-bandwidth memory. Forecasting has to take all three steps (chip production, memory, and packaging) into account. According to Epoch, there’s likely spare capacity in GPU production, but memory and packaging may hold things back.

Given projected industry growth in production capacity, they think between 20 and 400 million AI chips may be available for AI training in 2030. Some of these will be serving up existing models, and AI labs will only be able to buy a fraction of the whole.

The wide range is indicative of a good amount of uncertainty in the model. But given expected chip capacity, they believe a model could be trained on some 50,000 times more computing power than GPT-4.

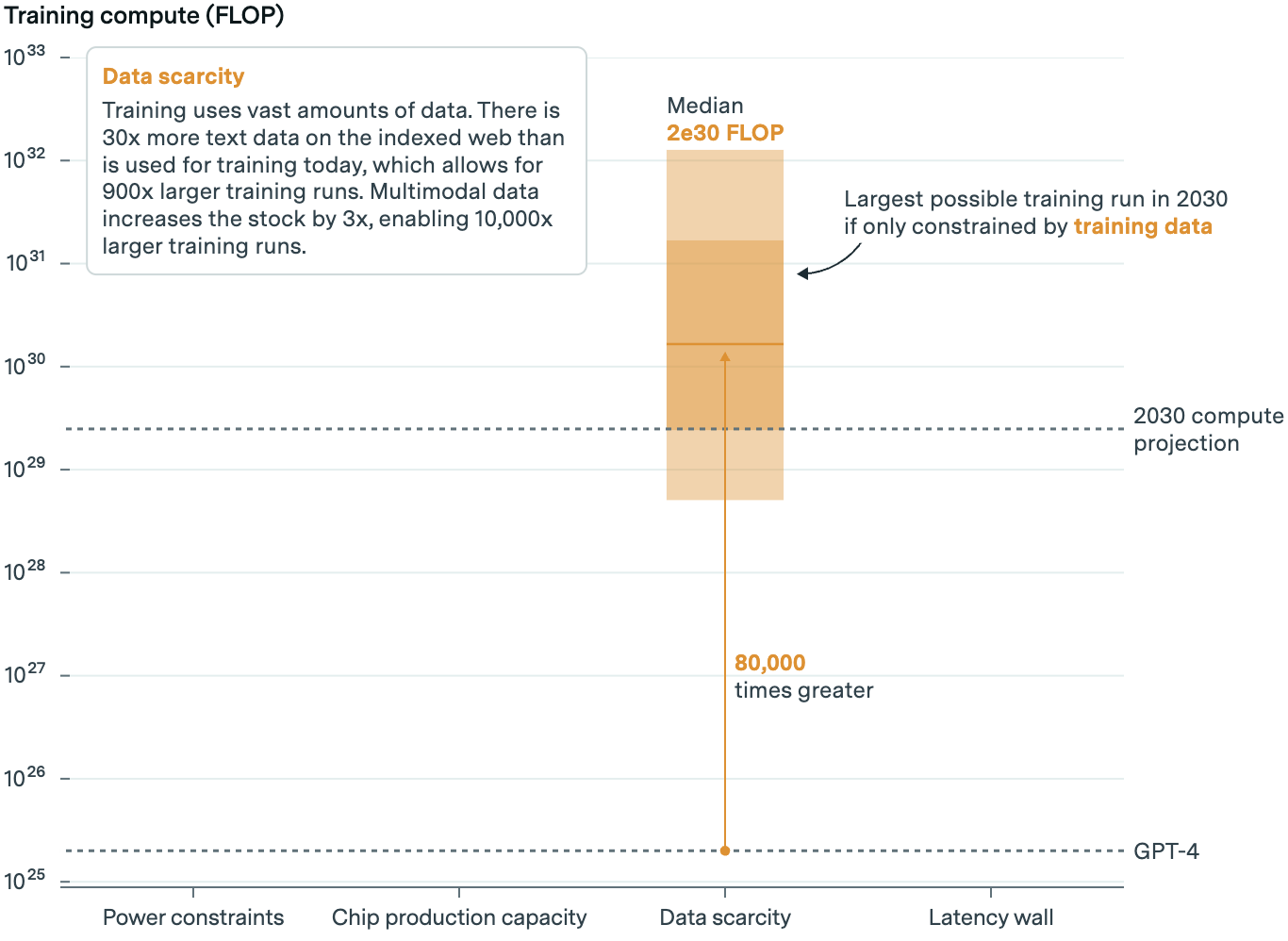

Data: AI’s Online Education

AI’s hunger for data and its impending scarcity is a well-known constraint. Some forecast the stream of high-quality, publicly available data will run out by 2026. But Epoch doesn’t think data scarcity will curtail the growth of models through at least 2030.

At today’s growth rate, they write, AI labs will run out of quality text data in five years. Copyright lawsuits may also impact supply. Epoch believes this adds uncertainty to their model. But even if courts decide in favor of copyright holders, complexity in enforcement and licensing deals like those pursued by Vox Media, Time, The Atlantic, and others mean the impact on supply will be limited (though the quality of sources may suffer).

But crucially, models now consume more than just text in training. Google’s Gemini was trained on image, audio, and video data, for example.

Non-text data can add to the supply of text data by way of captions and transcripts. It can also expand a model’s abilities, like recognizing the foods in an image of your fridge and suggesting dinner. It may even, more speculatively, result in transfer learning, where models trained on multiple data types outperform those trained on just one.

There’s also evidence, Epoch says, that synthetic data could further grow the data haul, though by how much is unclear. DeepMind has long used synthetic data in its reinforcement learning algorithms, and Meta employed some synthetic data to train its latest AI models. But there may be hard limits to how much can be used without degrading model quality. And it would also take even more (costly) computing power to generate.

All told, though, including text, non-text, and synthetic data, Epoch estimates there’ll be enough to train AI models with 80,000 times more computing power than GPT-4.

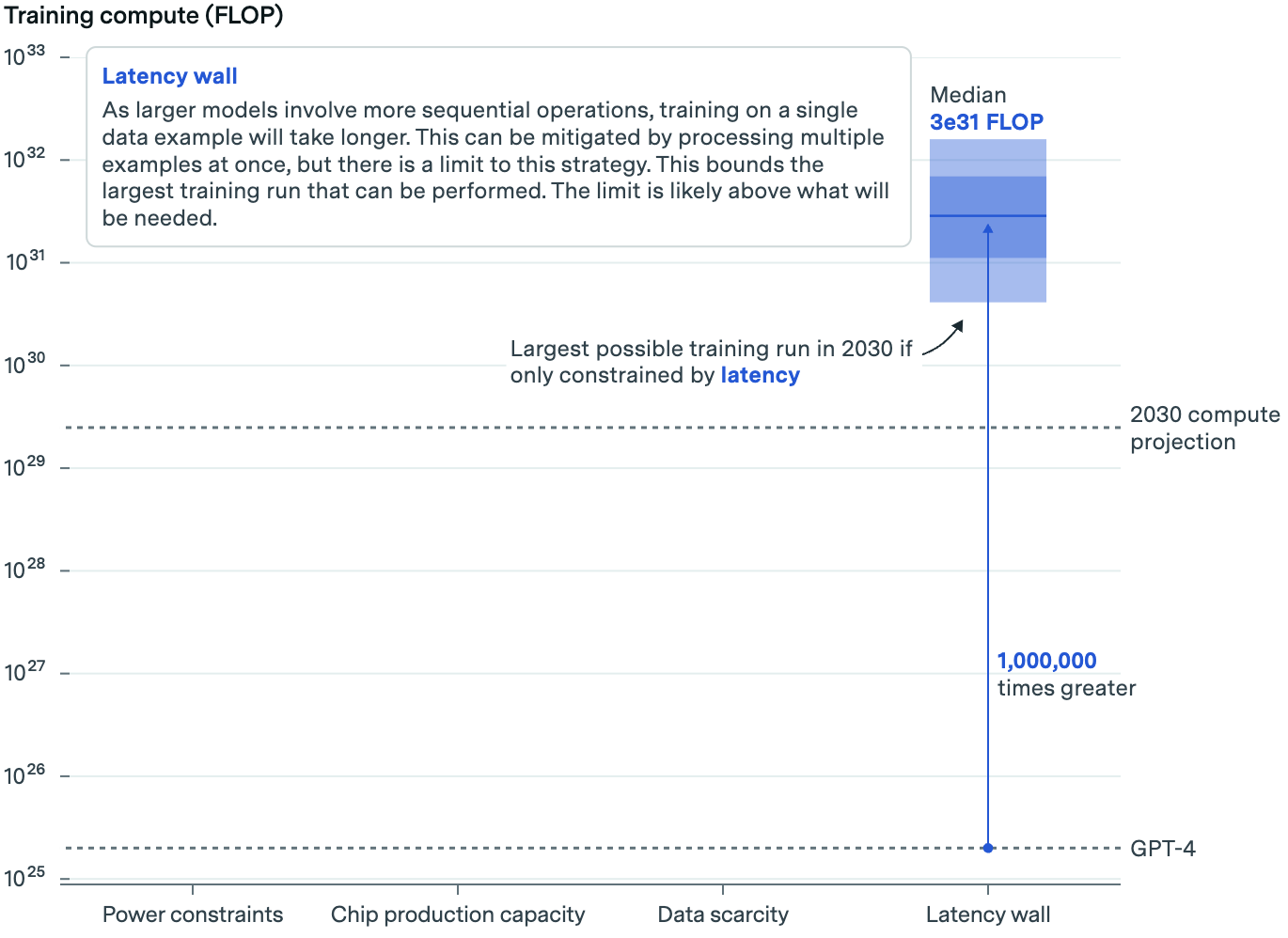

Latency: Bigger Is Slower

The last constraint is related to the sheer size of upcoming algorithms. The bigger the algorithm, the longer it takes for data to traverse its network of artificial neurons. This could mean the time it takes to train new algorithms becomes impractical.

This bit gets technical. In short, Epoch takes a look at the potential size of future models, the size of the batches of training data processed in parallel, and the time it takes for that data to be processed within and between servers in an AI data center. This yields an estimate of how long it would take to train a model of a certain size.

The main takeaway: Training AI models with today’s setup will hit a ceiling eventually, but not for a while. Epoch estimates that, under current practices, we could train AI models with upwards of 1,000,000 times more computing power than GPT-4.

Scaling Up 10,000x

You’ll have noticed the scale of possible AI models gets larger under each constraint; that is, the ceiling is higher for chips than power, for data than chips, and so on. But if we consider all of them together, models will only be possible up to the first bottleneck encountered, and in this case, that’s power. Even so, significant scaling is technically possible.

“When considered together, [these AI bottlenecks] imply that training runs of up to 2e29 FLOP would be feasible by the end of the decade,” Epoch writes.

“This would represent a roughly 10,000-fold scale-up relative to current models, and it would mean that the historical trend of scaling could continue uninterrupted until 2030.”
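The "first bottleneck" logic amounts to taking the minimum across the four ceilings. A minimal Python sketch, using the multiples quoted in the sections above (the dictionary and function names are illustrative, and the baseline figure is simply the quoted numbers divided out, not a value stated in the report excerpt):

```python
# Ceiling on training compute under each constraint, as a multiple of GPT-4.
CEILINGS = {
    "power": 10_000,
    "chips": 50_000,
    "data": 80_000,
    "latency": 1_000_000,
}

def binding_constraint(ceilings: dict[str, int]) -> tuple[str, int]:
    """Return the constraint with the lowest ceiling and that ceiling."""
    name = min(ceilings, key=ceilings.get)
    return name, ceilings[name]

name, multiple = binding_constraint(CEILINGS)
print(name, multiple)  # power 10000

# Baseline compute implied by "2e29 FLOP" being a "10,000-fold scale-up".
implied_baseline_flop = 2e29 / multiple
print(f"{implied_baseline_flop:.0e}")  # 2e+25
```

Raising any ceiling other than power leaves the result unchanged, which is why the power estimate drives the overall 10,000x conclusion.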

What Have You Done for Me Lately?

While all this suggests continued scaling is technically possible, it also makes a basic assumption: That AI investment will grow as needed to fund scaling and that scaling will continue to yield impressive and, more importantly, useful advances.

For now, there’s every indication tech companies will keep investing historic amounts of cash. Driven by AI, spending on the likes of new equipment and real estate has already jumped to levels not seen in years.

“When you go through a curve like this, the risk of underinvesting is dramatically greater than the risk of overinvesting,” Alphabet CEO Sundar Pichai said on last quarter’s earnings call as justification.

But spending will need to grow even more. Anthropic CEO Dario Amodei estimates models trained today can cost up to $1 billion, next year’s models may near $10 billion, and costs per model could hit $100 billion in the years thereafter. That’s a dizzying number, but it’s a price tag companies may be willing to pay. Microsoft is already reportedly committing that much to its Stargate AI supercomputer, a joint project with OpenAI due out in 2028.

It goes without saying that the appetite to invest tens or hundreds of billions of dollars (more than the GDP of many countries and a significant fraction of current annual revenues of tech’s biggest players) isn’t guaranteed. As the shine wears off, whether AI growth is sustained may come down to a question of, “What have you done for me lately?”

Already, investors are checking the bottom line. Today, the amount invested dwarfs the amount returned. To justify greater spending, businesses will have to show proof that scaling continues to produce more and more capable AI models. That means there’s increasing pressure on upcoming models to go beyond incremental improvements. If gains tail off or enough people aren’t willing to pay for AI products, the story may change.

Also, some critics believe large language and multimodal models will prove to be a pricey dead end. And there’s always the chance a breakthrough, like the one that kicked off this round, shows we can accomplish more with less. Our brains learn continuously on a light bulb’s worth of energy and nowhere near an internet’s worth of data.

That said, if the current approach “can automate a substantial portion of economic tasks,” the financial return could number in the trillions of dollars, more than justifying the spend, according to Epoch. Many in the industry are willing to take that bet. No one knows how it’ll shake out yet.

Image Credit: Werclive 👹 / Unsplash