Managing and maintaining deployments of complex software presents engineers with a multitude of challenges: security vulnerabilities, outdated dependencies, and unpredictable and asynchronous vendor release cadences, to name a few.

We describe here an approach to automating key activities in the software operations process, with a focus on the setup and testing of updates to third-party code. A key benefit is that engineers can more quickly and confidently deploy the latest versions of software. This allows a team to more easily and safely stay up to date on software releases, both to support user needs and to stay current on security patches.

We illustrate this approach with a software engineering process platform managed by our team of researchers in the Applied Systems Group of the SEI’s CERT Division. This platform is designed to be compliant with the requirements of the Cybersecurity Maturity Model Certification (CMMC) and NIST SP 800-171. Each of the challenges above presents risks to the stability and security compliance of the platform, and addressing these issues demands time and effort.

When system deployment is done without automation, system administrators must spend time manually downloading, verifying, installing, and configuring each new release of any particular software tool. Furthermore, this process must first be done in a test environment to ensure that the software and all its dependencies can be integrated successfully and that the upgraded system is fully functional. Then the process is done again in the production environment.

When an engineer’s time is freed up by automation, more effort can be allocated to delivering new capabilities to the warfighter, with more efficiency, higher quality, and less risk of security vulnerabilities. Continuous deployment of capability describes a set of principles and practices that provide faster delivery of secure software capabilities by improving the collaboration and communication that link software development teams with IT operations and security staff, as well as with acquirers, suppliers, and other system stakeholders.

While this approach benefits software development generally, we suggest that it is especially important in high-stakes software for national security missions.

In this post, we describe our approach to using DevSecOps tools for automating the delivery of third-party software to development teams using CI/CD pipelines. This approach is targeted to software systems that are container compatible.

Building an Automated Configuration Testing Pipeline

Not every team in a software-oriented organization is focused specifically on the engineering of the software product. Our team bears responsibility for two sometimes competing tasks:

- Delivering valuable technology, such as tools for automated testing, to software engineers so that they can perform product development, and

- Deploying security updates to the technology.

In other words, delivery of value in the continuous deployment of capability may often not be directly focused on the development of any specific product. Other dimensions of value include “the people, processes, and technology necessary to build, deploy, and operate the enterprise’s products. In general, this business concern includes the software factory and product operational environments; however, it does not consist of the products.”

To improve our ability to complete these tasks, we designed and implemented a custom pipeline that was a variation of the traditional continuous integration/continuous deployment (CI/CD) pipeline found in many traditional DevSecOps workflows, as shown below.

Figure 1: The DevSecOps Infinity diagram, which represents the continuous integration/continuous deployment (CI/CD) pipeline found in many traditional DevSecOps workflows.

The main difference between our pipeline and a traditional CI/CD pipeline is that we are not developing the application that is being deployed; the software is typically provided by a third-party vendor. Our focus is on delivering it to our environment, deploying it onto our information systems, operating it, and monitoring it for proper functionality.

Automation can yield terrific benefits in productivity, efficiency, and security throughout an organization. This means that engineers can keep their systems more secure and address vulnerabilities more quickly and without human intervention, with the effect that systems are more readily kept compliant, stable, and secure. In other words, automation of the relevant pipeline processes can increase our team’s productivity, enforce security compliance, and improve the user experience for our software engineers.

There are, however, some potential negative outcomes when automation is done incorrectly. It is important to recognize that because automation allows many actions to be performed in quick succession, there is always the possibility that those actions lead to unwanted results. Unwanted results may be unintentionally introduced by buggy process-support code that doesn’t perform the correct checks before taking an action, or by an unconsidered edge case in a complex system.

It is therefore important to take precautions when you are automating a process. Guardrails should be in place so that a failing automated process cannot affect production applications, services, or data. This can include, for example, writing tests that validate each stage of the automated process, including validity checks and safe, non-destructive halts when operations fail.
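One way to picture such guardrails is a small stage runner that validates each stage's output and stops at the first failure. This is a minimal sketch, not our pipeline's actual code: the `StageResult` type, the `run_pipeline` function, and the stage names in the demo are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple

# Each stage is (name, action, validity check). All names are illustrative.
Stage = Tuple[str, Callable[[], Any], Callable[[Any], bool]]


@dataclass
class StageResult:
    name: str
    ok: bool
    detail: str = ""


def run_pipeline(stages: List[Stage]) -> List[StageResult]:
    """Run stages in order and halt at the first failure."""
    results: List[StageResult] = []
    for name, action, check in stages:
        try:
            output = action()
            ok = bool(check(output))
            detail = "" if ok else "validity check failed"
        except Exception as exc:  # a crashing stage counts as a failure
            ok, detail = False, f"{type(exc).__name__}: {exc}"
        results.append(StageResult(name, ok, detail))
        if not ok:
            break  # safe halt: later stages never run
    return results


def failing_scan() -> dict:
    raise RuntimeError("scanner unavailable")


demo = run_pipeline([
    ("detect", lambda: "2.4.1", lambda version: version.count(".") == 2),
    ("scan", failing_scan, lambda report: True),
    ("deliver", lambda: "published", lambda status: status == "published"),
])
```

In the demo, the `deliver` stage is never reached because `scan` fails, which is the non-destructive behavior the paragraph above describes.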

Developing meaningful tests may be challenging, requiring careful and creative consideration of the many ways a process could fail, as well as how to return the system to a working state should failures occur.

Our approach to addressing this challenge revolves around integration, regression, and functional tests that can be run automatically in the pipeline. These tests are required to ensure that the functionality of the third-party application is not affected by changes in the configuration of the system, and also that new releases of the application still interact as expected with older versions’ configurations and setups.

Automating Containerized Deployments Using a CI/CD Pipeline

A Case Study: Implementing a Custom Continuous Delivery Pipeline

Teams at the SEI have extensive experience building DevSecOps pipelines. One team in particular defined the concept of creating a minimum viable process to frame a pipeline’s structure before diving into development. This allows all of the groups working on the same pipeline to collaborate more efficiently.

In our pipeline, we started with the first half of the traditional structure of a CI/CD pipeline, which was already in place to support third-party software released by the vendor. This gave us an opportunity to dive deeper into the later stages of the pipeline: delivery, testing, deployment, and operation. The end result was a five-stage pipeline that automated testing and delivery for all of the software components in the tool suite in the event of configuration changes or new version releases.

To avoid the many complexities involved with delivering and deploying third-party software natively on hosts in our environment, we opted for a container-based approach. We developed the container build specifications, deployment specifications, and pipeline job specifications in our Git repository. This enabled us to vet any desired changes to the configurations using code reviews before they could be deployed in a production environment.

A Five-Stage Pipeline for Automating Testing and Delivery in the Tool Suite

Stage 1: Automated Version Detection

When the pipeline is run, it searches the vendor site for either the user-specified release or the latest release of the application in a container image. If a new release is found, the pipeline uses the communication channels that have been set up to notify engineers of the discovery. Then the pipeline automatically attempts to safely download the container image directly from the vendor. If the container image cannot be retrieved from the vendor, the pipeline fails and alerts engineers to the issue.
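The selection logic in this stage can be sketched as follows. This is an illustration under stated assumptions rather than our actual implementation: it assumes the list of published tags has already been fetched from the vendor, that tags are dotted numeric versions, and the function names `parse_version` and `select_release` are hypothetical.

```python
from typing import Iterable, Optional, Tuple


def parse_version(tag: str) -> Tuple[int, ...]:
    """Turn a tag such as 'v2.10.3' into (2, 10, 3) for numeric comparison."""
    return tuple(int(part) for part in tag.lstrip("v").split("."))


def select_release(available: Iterable[str], current: str,
                   requested: Optional[str] = None) -> Optional[str]:
    """Pick the release to download, or None if nothing newer exists.

    A user-specified release wins; otherwise the newest published tag is
    chosen only when it is newer than the currently delivered version.
    """
    tags = list(available)
    if requested is not None:
        if requested not in tags:
            # Mirrors the pipeline failing when the image cannot be found.
            raise LookupError(f"release {requested} is not published")
        return requested
    if not tags:
        return None
    latest = max(tags, key=parse_version)
    return latest if parse_version(latest) > parse_version(current) else None


published = ["2.9.0", "2.10.1", "2.10.0"]
newest = select_release(published, current="2.9.0")
pinned = select_release(published, current="2.9.0", requested="2.10.0")
```

Comparing parsed tuples instead of raw strings matters here: as strings, "2.9.0" sorts after "2.10.1", which would hide the newer release.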

Stage 2: Automated Vulnerability Scanning

After downloading the container from the vendor site, it is best practice to run a vulnerability scanner to make sure that no obvious issues that the vendor might have missed in its release end up in the production deployment. The pipeline implements this extra layer of security by using common container scanning tools. If vulnerabilities are found in the container image, the pipeline fails.
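A gate of this kind might look like the sketch below. The report layout (a JSON object with a `findings` list whose entries carry `id` and `severity`) is a made-up format for illustration, not the output of any particular scanner, and the severity threshold is an assumption; a stricter gate could block on any finding at all.

```python
import json
from typing import Dict, List

# Ordered from least to most severe; labels are assumed for this sketch.
SEVERITY_ORDER = ["LOW", "MEDIUM", "HIGH", "CRITICAL"]


def blocking_findings(report_json: str, threshold: str = "HIGH") -> List[Dict]:
    """Return the findings at or above the threshold severity."""
    findings = json.loads(report_json)["findings"]
    minimum = SEVERITY_ORDER.index(threshold)
    return [
        finding for finding in findings
        if SEVERITY_ORDER.index(finding["severity"]) >= minimum
    ]


# Hypothetical scanner output with placeholder identifiers.
sample_report = json.dumps({
    "findings": [
        {"id": "EXAMPLE-0001", "severity": "LOW"},
        {"id": "EXAMPLE-0002", "severity": "CRITICAL"},
    ]
})

blockers = blocking_findings(sample_report)
scan_passed = not blockers  # the stage fails when any blocker remains
```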

Stage 3: Automated Application Deployment

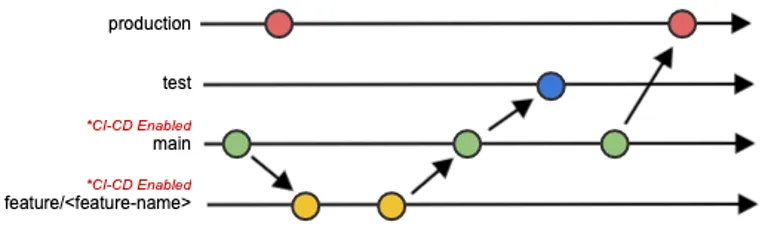

At this point in the pipeline, the new container image has been successfully downloaded and scanned. The next step is to set up the pipeline’s environment so that it resembles our production deployment’s environment as closely as possible. To achieve this, we created a testing system within a Docker in Docker (DIND) pipeline container that simulates the process of upgrading applications in a real deployment environment. The process keeps track of our configuration files for the software and loads test data into the application to ensure that everything works as expected. To differentiate between these environments, we used an environment-based DevSecOps workflow (Figure 2: Git Branch Diagram) that offers more fine-grained control between configuration setups on each deployment environment. This workflow enables us to develop and test on feature branches, engage in code reviews when merging feature branches into the main branch, automate testing on the main branch, and account for environmental differences between the test and production code (e.g., different sets of credentials are required in each environment).

Figure 2: The Git Branch Diagram

Because we are using containers, it does not matter that the container runs in two completely different environments between the pipeline and production deployments. The outcome of the testing is expected to be the same in both environments.
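To illustrate how one container image can be paired with environment-specific settings, the sketch below layers per-environment overrides on a shared base configuration. The keys, image name, and credential references are invented for the example and do not describe our actual configuration files.

```python
from typing import Any, Dict


def merge_config(base: Dict[str, Any], override: Dict[str, Any]) -> Dict[str, Any]:
    """Return a new dict with override values layered over base, recursively."""
    merged = dict(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_config(merged[key], value)
        else:
            merged[key] = value
    return merged


# Shared settings: the same tested image is used everywhere (hypothetical values).
BASE = {
    "image": "vendor/app:2.10.1",
    "auth": {"method": "token", "secret_ref": None},
    "replicas": 1,
}

# Only the differences between environments, such as credentials.
OVERRIDES = {
    "test": {"auth": {"secret_ref": "test-credentials"}},
    "production": {"auth": {"secret_ref": "prod-credentials"}, "replicas": 3},
}


def config_for(environment: str) -> Dict[str, Any]:
    return merge_config(BASE, OVERRIDES[environment])


test_config = config_for("test")
production_config = config_for("production")
```

Keeping the overrides small makes the differences between environments easy to review in a merge request.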

Now the application is up and running inside the pipeline. To better simulate a real deployment, we load test data into the application, which will serve as a basis for a later testing stage in the pipeline.

Stage 4: Automated Testing

Automated tests in this stage of the pipeline fall into several categories. For this specific application, the most relevant testing strategies are regression tests, smoke tests, and functional tests.

After the application has been successfully deployed inside the pipeline, we run a series of tests on the software to ensure that it is functioning and that there are no issues with the configuration files that we provided. One way to accomplish this is to use the application’s APIs to access the data that was loaded during Stage 3. It can be helpful to read through the third-party software’s documentation and look for API references or endpoints that can simplify this process. This ensures that you test not only the basic functionality of the application, but also that the system works in practice and that the API usage is sound.
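A functional check of this kind can be sketched as below. The sketch assumes the records seeded in Stage 3 are known to the test and that some client function can fetch a record by ID through the application's API; here that client is injected as `fetch_record` and replaced by an in-memory stand-in so the example runs on its own. The record fields and function names are hypothetical.

```python
from typing import Callable, Dict, List

# Records assumed to have been loaded during Stage 3 (illustrative data).
SEEDED_RECORDS = [
    {"id": 1, "title": "sample project"},
    {"id": 2, "title": "sample issue"},
]


def check_seeded_data(fetch_record: Callable[[int], Dict],
                      expected: List[Dict]) -> List[str]:
    """Fetch each seeded record and describe any mismatch or failed request."""
    problems: List[str] = []
    for record in expected:
        try:
            actual = fetch_record(record["id"])
        except Exception as exc:
            problems.append(f"record {record['id']}: request failed ({exc})")
            continue
        if actual != record:
            problems.append(
                f"record {record['id']}: expected {record}, got {actual}"
            )
    return problems


# Stand-in for the upgraded application: record 2 was lost in the upgrade.
upgraded_store = {1: {"id": 1, "title": "sample project"}}
problems = check_seeded_data(upgraded_store.__getitem__, SEEDED_RECORDS)

# Stand-in for a healthy application that kept every record.
healthy_store = {record["id"]: record for record in SEEDED_RECORDS}
no_problems = check_seeded_data(healthy_store.__getitem__, SEEDED_RECORDS)
```

Returning a list of problem descriptions, rather than stopping at the first mismatch, gives engineers a fuller picture when the stage fails.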

Stage 5: Automated Delivery

Finally, after all of the previous stages are completed successfully, the pipeline makes the fully tested container image available for use in production deployments. Once the container has been thoroughly tested in the pipeline and becomes available, engineers can choose to use it in whichever environment they want (e.g., test, quality assurance, staging, or production).

An important aspect of delivery is the communication channels that the pipeline uses to convey the information that has been collected. A related SEI blog post explains the benefits of communicating directly with developers and DevSecOps engineers through channels that are already a part of their respective workflows.

It is important here to make the distinction between delivery and deployment. Delivery refers to the process of making software available to the systems where it will end up being installed. In contrast, the term deployment refers to the process of automatically pushing the software out to the system, making it available to the end users. In our pipeline, we focus on delivery instead of deployment because the services for which we are automating upgrades require a high degree of reliability and uptime. A future goal of this work is to eventually implement automated deployments.
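The distinction can be made concrete with a short sketch of what a delivery-only step might do: retag the tested image and push it to an internal registry, then stop. The registry host names and the `delivery_commands` helper are assumptions for illustration; the sketch only builds the `docker tag` and `docker push` command lines and does not execute them.

```python
from typing import List


def delivery_commands(source_image: str, internal_registry: str) -> List[List[str]]:
    """Build the commands that publish a tested image to an internal registry.

    Delivery stops here: nothing in this list restarts or upgrades a
    running service, which would be deployment.
    """
    name_and_tag = source_image.rsplit("/", 1)[-1]  # e.g. 'app:2.10.1'
    target = f"{internal_registry}/{name_and_tag}"
    return [
        ["docker", "tag", source_image, target],
        ["docker", "push", target],
    ]


commands = delivery_commands(
    "vendor.example.com/app:2.10.1",       # hypothetical vendor image
    "registry.internal.example/tested",    # hypothetical internal registry
)
```

A pipeline job could pass each command list to `subprocess.run(..., check=True)` so that a failed push fails the stage.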

Handling Pipeline Failures

With this model for a custom pipeline, failure modes are designed into the process. When the pipeline fails, diagnosis of the failure should identify remedial actions to be undertaken by the engineers. The underlying problems could be issues with the configuration files, software versions, test data, file permissions, environment setup, or some other unforeseen error. Because the pipeline runs an exhaustive series of tests, engineers can come into the situation equipped with a greater understanding of potential problems with the setup. This helps them make the needed adjustments as effectively as possible and avoid running into incompatibility issues on a production deployment.

Implementation Challenges

We faced some particular challenges in our experimentation, and we share them here because they may be instructive.

The first challenge was deciding how the pipeline would be designed. Because the pipeline is still evolving, members of the team needed to be flexible to ensure there was a consistent picture of the status of the pipeline and its future goals. We also needed the team to stay committed to continuously improving the pipeline. We found it helpful to sync up regularly with progress updates so that everyone stayed on the same page throughout the pipeline design and development processes.

The next challenge appeared during the pipeline implementation process. While we were migrating our data to a container-based platform, we discovered that many of the containerized releases of the different software needed in our pipeline lacked documentation. To ensure that all the knowledge we gained throughout the design, development, and implementation processes was shared by the entire team, we found it necessary to write a large amount of our own documentation to serve as a reference throughout the process.

A final challenge was to overcome a tendency to stick with a working process that is minimally feasible, but that fails to benefit from modern process approaches and tooling. It can be easy to settle into the mindset of “this works for us” and “we’ve always done it this way” and fail to make the implementation of proven principles and practices a priority. Complexity and the cost of initial setup can be a major barrier to change. Initially, we had to come to terms with the effort of creating our own custom container images that had the same functionality as an existing, working system. At that time, we questioned whether this extra effort was even necessary at all. However, it became clear that switching to containers significantly reduced the complexity of automatically deploying the software in our environment, and that reduction in complexity allowed the time and cognitive space for the addition of extensive automated testing of the upgrade process and the functionality of the upgraded system.

Now, instead of manually performing all the tests required to ensure that the upgraded system functions correctly, the engineers are alerted only when an automated test fails and requires intervention. It is important to consider the various organizational barriers that teams might run into while implementing complex pipelines.

Managing Technical Debt and Other Decisions When Automating Your Software Delivery Workflow

When making the decision to automate a major part of your software delivery workflow, it is important to develop metrics that demonstrate benefits to the organization and justify the investment of upfront time and effort in crafting and implementing all the required tests, learning the new workflow, and configuring the pipeline. In our experimentation, we judged that it was a highly worthwhile investment to make the change.

Modern CI/CD tools and practices are some of the best ways to help combat technical debt. The automation pipelines that we implemented have saved countless hours for engineers and, we expect, will continue to do so over the years of operation. By automating the setup and testing stage for updates, engineers can deploy the latest versions of software more quickly and with more confidence. This allows our team to stay up to date on software releases to better support our customers’ needs and help them stay current on security patches. Our team is able to use the newly freed-up time to work on other research and projects that improve the capabilities of the DoD warfighter.