Organizations speak of operational reporting and analytics as the next technical challenge in improving business processes and efficiency. In a world where everyone is becoming an analyst, live dashboards surface up-to-date insights and operationalize real-time data to provide timely decision-making support across multiple areas of an organization. We'll look at what it takes to build operational dashboards and reporting using standard data visualization tools, like Tableau, Grafana, Redash, and Apache Superset. Specifically, we'll focus on using these BI tools on data stored in DynamoDB, as we have found the path from DynamoDB to a data visualization tool to be a common pattern among users of operational dashboards.

Creating data visualizations with existing BI tools, like Tableau, can be a good fit for organizations with fewer resources, less strict UI requirements, or a desire to get a dashboard up and running quickly. It has the added benefit that many analysts at the company are already familiar with how to use the tool. If you're interested in crafting your own custom dashboard, read Custom Live Dashboards on DynamoDB instead.

We consider several approaches, all of which use DynamoDB Streams but differ in how the dashboards are served:

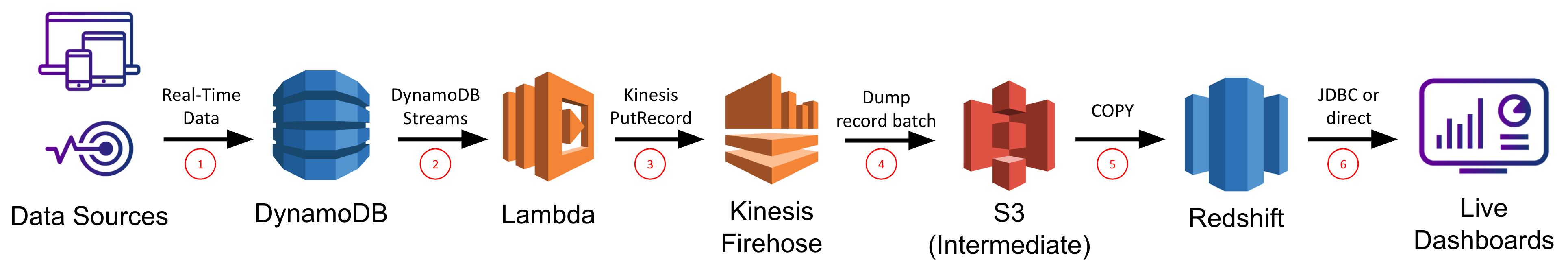

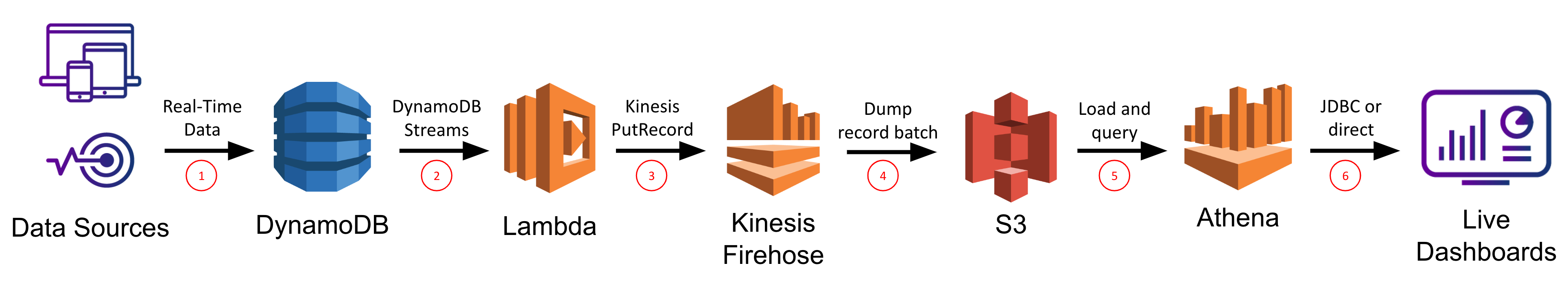

1. DynamoDB Streams + Lambda + Kinesis Firehose + Redshift

2. DynamoDB Streams + Lambda + Kinesis Firehose + S3 + Athena

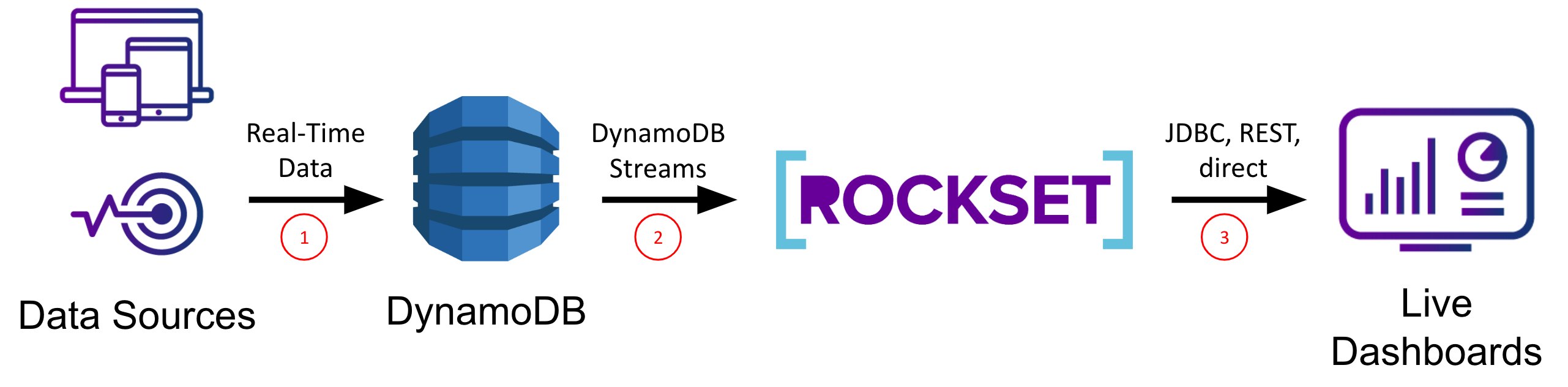

3. DynamoDB Streams + Rockset

We'll evaluate each approach on its ease of setup/maintenance, data latency, query latency/concurrency, and system scalability, so you can judge which approach is best for you based on which of these criteria matter most for your use case.

Considerations for Building Operational Dashboards Using Standard BI Tools

Building live dashboards is non-trivial, as any solution needs to support highly concurrent, low-latency queries for fast load times (or else drive down usage and efficiency) and live sync from the data sources for low data latency (or else drive up incorrect actions and missed opportunities). Low query latency requirements rule out operating directly on data in OLTP databases, which are optimized for transactional, not analytical, queries. Low data latency requirements rule out ETL-based solutions, which push your data latency above the real-time threshold and inevitably lead to “ETL hell”.

DynamoDB is a fully managed NoSQL database provided by AWS that is optimized for point lookups and small range scans using a partition key. Though it is highly performant for these use cases, DynamoDB is not a good choice for analytical queries, which typically involve large range scans and complex operations such as grouping and aggregation. AWS knows this and has answered customers' requests by creating DynamoDB Streams, a change-data-capture system that can be used to notify other services of new or modified data in DynamoDB. In our case, we'll use DynamoDB Streams to synchronize our DynamoDB table with other storage systems that are better suited for serving analytical queries.
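Each DynamoDB Streams record carries item images (such as `NewImage`) in DynamoDB's typed attribute-value JSON, where every value is wrapped in a type descriptor like `S`, `N`, or `M`. Before that data can land in a SQL table, it usually has to be flattened into plain values. The sketch below is a minimal, hand-rolled converter for the common descriptors, written only to show the shape of the data; in practice the `unmarshall` helper from the AWS SDK's `@aws-sdk/util-dynamodb` package does this job. The sample record and its attribute names are hypothetical.

```javascript
// Convert one DynamoDB attribute value (e.g. { N: "42" }) into a plain JS value.
// Covers the common type descriptors; binary types (B, BS) are left out of this sketch.
function fromAttributeValue(av) {
  if ("S" in av) return av.S;
  if ("N" in av) return Number(av.N); // numbers arrive as strings; very large values may lose precision
  if ("BOOL" in av) return av.BOOL;
  if ("NULL" in av) return null;
  if ("SS" in av) return [...av.SS];
  if ("NS" in av) return av.NS.map(Number);
  if ("L" in av) return av.L.map(fromAttributeValue);
  if ("M" in av) return unmarshallImage(av.M);
  throw new Error(`Unsupported attribute value: ${JSON.stringify(av)}`);
}

// Convert a whole item image ({ attrName: attributeValue, ... }) into a plain object.
function unmarshallImage(image) {
  const out = {};
  for (const [name, av] of Object.entries(image)) {
    out[name] = fromAttributeValue(av);
  }
  return out;
}

// Hypothetical stream record in the shape Lambda receives from DynamoDB Streams.
const sampleRecord = {
  eventName: "INSERT",
  dynamodb: {
    NewImage: {
      order_id: { S: "o-1" },
      platform: { S: "ios" },
      amount: { N: "19.99" },
      tags: { L: [{ S: "promo" }] },
      shipping: { M: { express: { BOOL: true } } },
    },
  },
};

const row = unmarshallImage(sampleRecord.dynamodb.NewImage);
console.log(row);
```

The flattened object is what a downstream ETL step would map onto table columns; nested values such as `tags` and `shipping` are exactly the parts a flat SQL schema has to decide how to represent.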

Building your live dashboard on top of an existing BI tool essentially means you need to provide a SQL API over a real-time data source; you can then plug your BI tool of choice (Tableau, Superset, Redash, Grafana, etc.) into it and create all of your data visualizations on DynamoDB data. Therefore, here we'll focus on creating a real-time data source with SQL support and leave the specifics of each of those tools for another post.

Kinesis Firehose + Redshift

We'll start at one end of the spectrum by considering Kinesis Firehose to synchronize your DynamoDB table with a Redshift table, on top of which you can run your BI tool of choice. Redshift is AWS's data warehouse offering, specifically tailored for OLAP workloads over very large datasets. Most BI tools have explicit Redshift integrations available, and a standard JDBC connection can be used as well.

The first thing to do is create a new Redshift cluster, and within it create a new database and table that will hold the data ingested from DynamoDB. You can connect to your Redshift database through a standard SQL client that supports a JDBC connection and the PostgreSQL dialect. At this point you'll have to define your table explicitly, with all field names, data types, and column compression types, before you can proceed.
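For illustration, here is a sketch of a small helper that emits a Redshift `CREATE TABLE` statement in which every column carries a name, a data type, and an explicit compression encoding. The table name `dynamodb_orders` and its columns are hypothetical; `ZSTD` and `AZ64` are real Redshift encodings, but the right encoding for each column depends on your data.

```javascript
// Hypothetical column definitions mirroring the attributes we expect in DynamoDB items.
const columns = [
  { name: "order_id", type: "VARCHAR(64)", encode: "ZSTD" },
  { name: "platform", type: "VARCHAR(32)", encode: "ZSTD" },
  { name: "amount", type: "DECIMAL(12,2)", encode: "AZ64" },
  { name: "updated_at", type: "TIMESTAMP", encode: "AZ64" },
];

// Build a CREATE TABLE statement with an explicit ENCODE clause for every column.
function createTableSql(table, cols) {
  const body = cols
    .map((c) => `  ${c.name} ${c.type} ENCODE ${c.encode}`)
    .join(",\n");
  return `CREATE TABLE IF NOT EXISTS ${table} (\n${body}\n);`;
}

console.log(createTableSql("dynamodb_orders", columns));
```

Keeping the column list in one place like this also makes it easier to see what must change when the DynamoDB item shape evolves.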

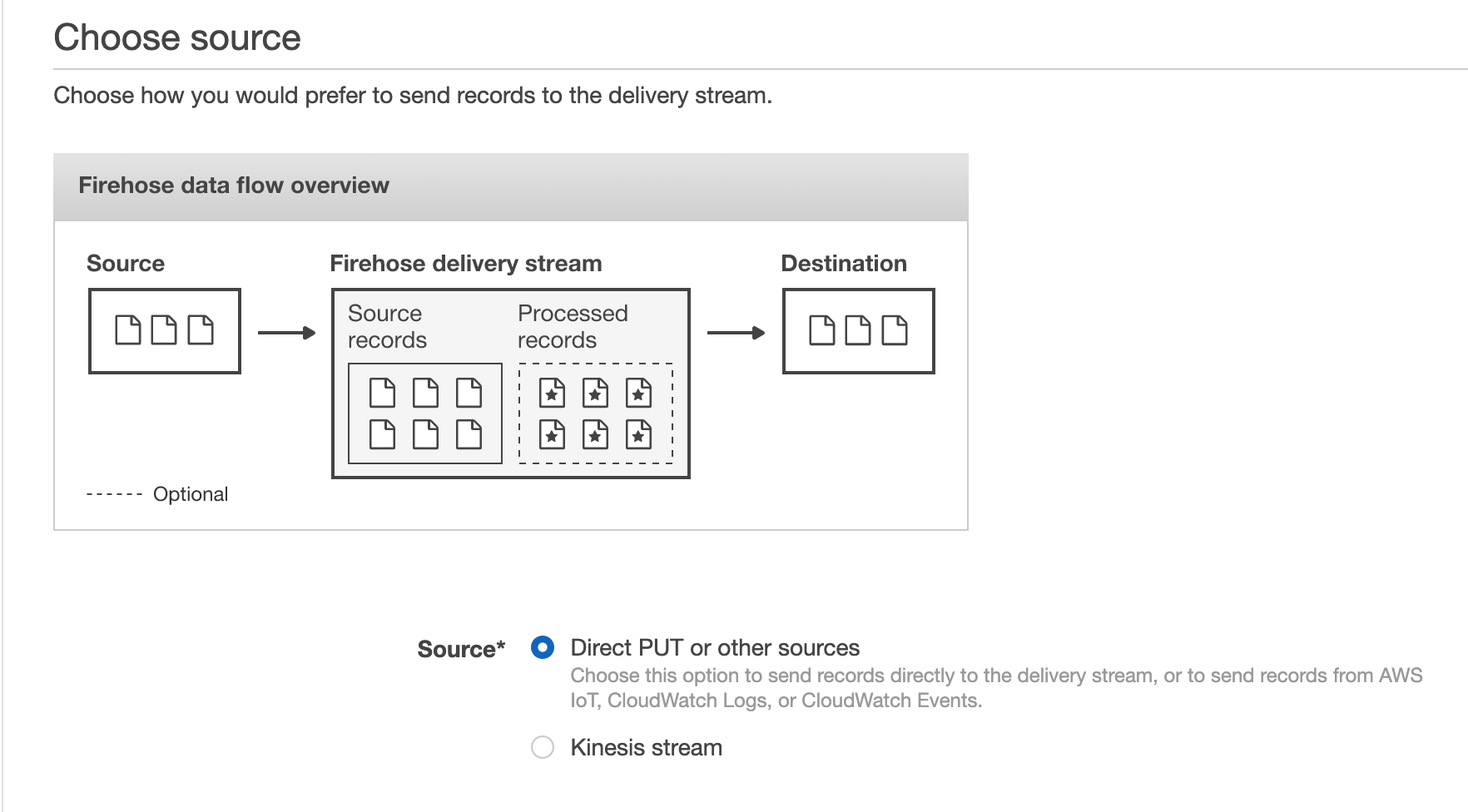

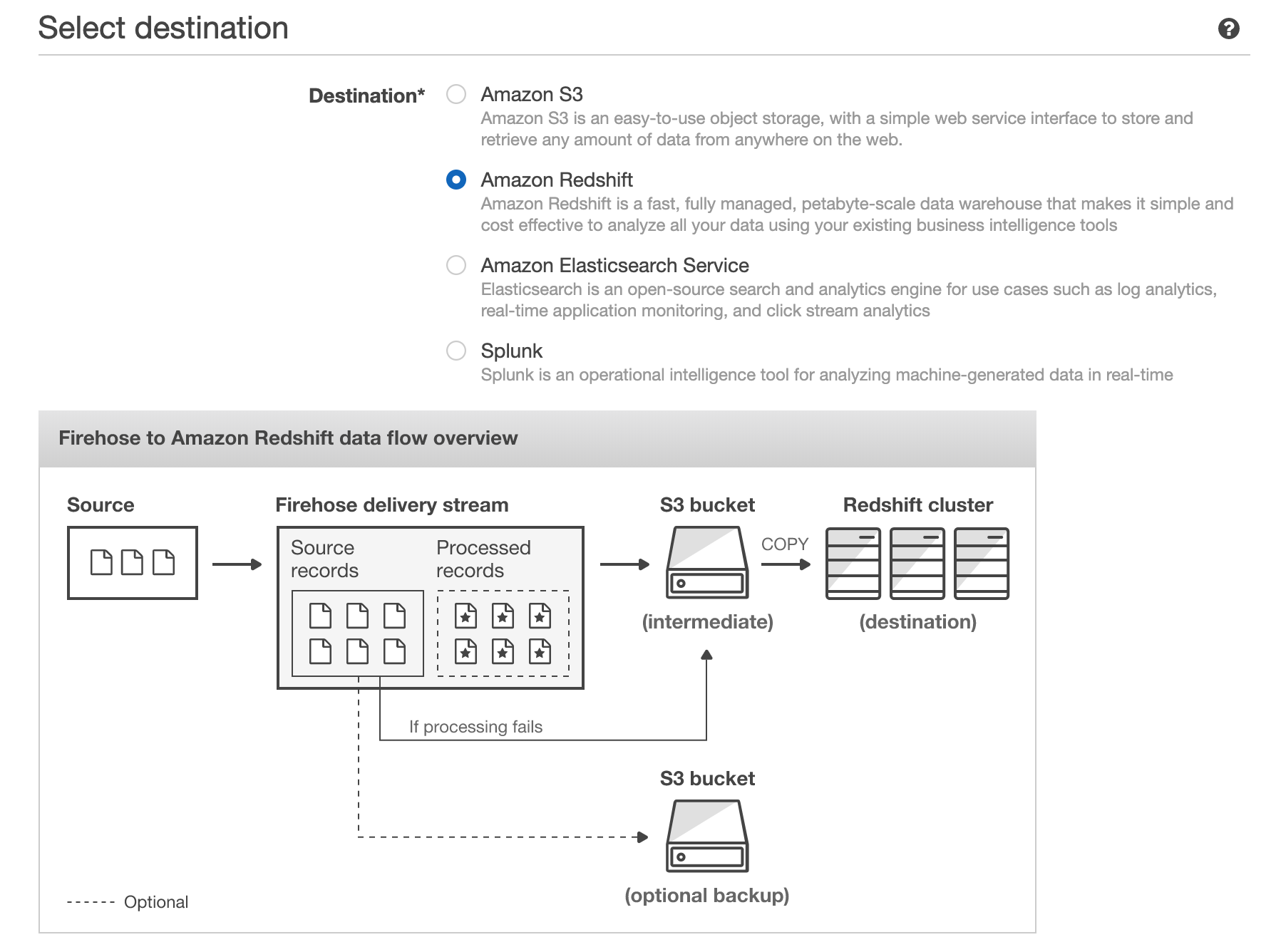

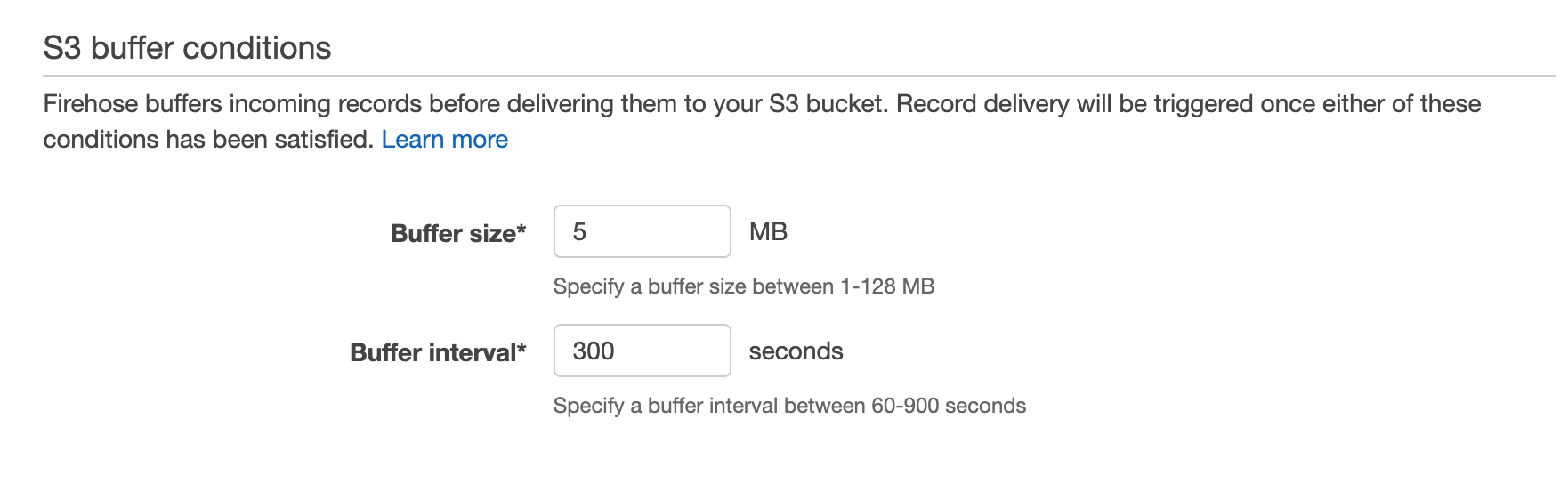

Next, you'll need to go to the Kinesis dashboard and create a new Kinesis Firehose delivery stream, which is the variant AWS provides for streaming events to a destination bucket in S3 or a destination table in Redshift. We'll choose the source option Direct PUT or other sources, and we'll select our Redshift table as the destination. For Redshift destinations, Firehose stages the data in S3 before performing a COPY command into Redshift, which leads to fewer, larger writes to Redshift, thereby preserving precious compute resources on your Redshift cluster and giving you a backup in S3 in case there are any issues during the COPY. We can configure the buffer size and buffer interval to control how much data Kinesis writes in a single chunk and how often. For example, a 100MB buffer size and a 60s buffer interval would tell Kinesis Firehose to write once it has received 100MB of data or 60s have passed, whichever comes first.
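The "whichever comes first" rule can be modeled in a few lines. This is a simplified, hypothetical model of the size-or-interval behavior described above, not Firehose's actual implementation; `SizeOrIntervalBuffer` and its parameters are names chosen for this example, and time is passed in explicitly so the behavior is deterministic.

```javascript
// Simplified model of a size-or-interval buffer: flush when the buffered bytes reach
// maxBytes, or when maxIntervalMs has elapsed since the first record in the batch.
class SizeOrIntervalBuffer {
  constructor(maxBytes, maxIntervalMs) {
    this.maxBytes = maxBytes;
    this.maxIntervalMs = maxIntervalMs;
    this.records = [];
    this.bytes = 0;
    this.startedAt = null;
  }

  // Add a record at time nowMs; returns the flushed batch, or null if still buffering.
  add(record, nowMs) {
    if (this.startedAt === null) this.startedAt = nowMs;
    this.records.push(record);
    this.bytes += Buffer.byteLength(record);
    return this.shouldFlush(nowMs) ? this.flush() : null;
  }

  // Called periodically (e.g. by a timer) so a quiet stream still flushes on time.
  tick(nowMs) {
    return this.records.length > 0 && this.shouldFlush(nowMs) ? this.flush() : null;
  }

  shouldFlush(nowMs) {
    return this.bytes >= this.maxBytes || nowMs - this.startedAt >= this.maxIntervalMs;
  }

  flush() {
    const batch = this.records;
    this.records = [];
    this.bytes = 0;
    this.startedAt = null;
    return batch;
  }
}

// Tiny limits so the behavior is visible: 10 bytes or 60 seconds.
const buf = new SizeOrIntervalBuffer(10, 60_000);
console.log(buf.add("abcd", 0));     // null: 4 bytes, 0 s elapsed
console.log(buf.add("efgh", 1_000)); // null: 8 bytes, 1 s elapsed
console.log(buf.add("ijkl", 2_000)); // flushes on size: 12 bytes >= 10
console.log(buf.add("mn", 5_000));   // null: a new batch starts at 5 s
console.log(buf.tick(65_000));       // flushes on time: 60 s after 5 s
```

Larger buffers mean fewer, bigger COPYs into Redshift at the cost of higher data latency; smaller buffers trade the other way.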

Finally, you can set up a Lambda function that is triggered by the table's DynamoDB stream and receives recent changes to the DynamoDB table. This function will buffer these changes and send a batch of them to Kinesis Firehose using its PutRecord or PutRecordBatch API. The function would look something like this:

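// Sketch using the AWS SDK for JavaScript v3, which Node.js 18+ Lambda runtimes include
const { FirehoseClient, PutRecordBatchCommand } = require("@aws-sdk/client-firehose");

const firehose = new FirehoseClient({});
const MAX_BATCH = 500; // PutRecordBatch accepts at most 500 records per call

exports.handler = async (event, context) => {
  const records = [];
  for (const record of event.Records) {
    // Requires a stream view type that includes new images (NEW_IMAGE or NEW_AND_OLD_IMAGES);
    // REMOVE events carry no NewImage, so they are skipped here
    const image = record.dynamodb.NewImage;
    if (!image) continue;
    const platform = image.platform.S;
    const amount = Number(image.amount.N); // DynamoDB numbers arrive as strings
    // Format according to your Redshift schema; newline-delimited JSON is assumed here
    const data = JSON.stringify({ platform, amount }) + "\n";
    records.push({ Data: Buffer.from(data) });
  }

  for (let i = 0; i < records.length; i += MAX_BATCH) {
    const response = await firehose.send(new PutRecordBatchCommand({
      DeliveryStreamName: "test", // name of your Firehose delivery stream
      Records: records.slice(i, i + MAX_BATCH),
    }));
    if (response.FailedPutCount > 0) {
      // Failures are reported per record; a production function should retry them
      console.error(`${response.FailedPutCount} records failed`, response.RequestResponses);
    }
  }

  return `Successfully processed ${event.Records.length} records.`;
};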

Putting this all together, we get the following chain reaction whenever new data is put into the DynamoDB table:

- The Lambda function is triggered with the updates from DynamoDB Streams and writes them to Kinesis Firehose

- Kinesis Firehose buffers the updates it receives and periodically (based on buffer size/interval) flushes them to an intermediate file in S3

- The file in S3 is loaded into the Redshift table using the Redshift COPY command

- Any queries against the Redshift table (e.g. from a BI tool) reflect this new data as soon as the COPY completes

In this way, any dashboard built through a BI tool that is integrated with Redshift will update in response to changes in your DynamoDB table.
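A rough way to see why this pipeline's data latency lands in the minutes range is to add up the worst-case wait at each hop. The sketch below does only that arithmetic; the input numbers are hypothetical placeholders (the 60 s buffer interval echoes the example above), and real COPY durations depend on your cluster and file sizes.

```javascript
// Worst-case data latency (seconds) for DynamoDB -> Lambda -> Firehose -> S3 -> Redshift:
// a change can wait out the full Lambda batching window, then the full Firehose
// buffer interval, and then the COPY into Redshift. This ignores stream propagation,
// Lambda start-up, and COPYs queued behind earlier ones, so real numbers can be higher.
function worstCaseLatencySeconds({ lambdaBatchWindowS, firehoseBufferIntervalS, copyDurationS }) {
  return lambdaBatchWindowS + firehoseBufferIntervalS + copyDurationS;
}

// Hypothetical inputs: no Lambda batching window, a 60 s buffer, a 45 s COPY.
const latency = worstCaseLatencySeconds({
  lambdaBatchWindowS: 0,
  firehoseBufferIntervalS: 60,
  copyDurationS: 45,
});
console.log(`~${latency} s before a change is queryable`); // prints "~105 s before a change is queryable"
```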

Pros:

- Redshift can scale to petabytes

- Many BI tools (e.g. Tableau, Redash) have dedicated Redshift integrations

- Good for complex, compute-heavy queries

- Based on familiar PostgreSQL; supports full-featured SQL, including aggregations, sorting, and joins

Cons:

- Need to provision/maintain/tune a Redshift cluster, which is expensive, time consuming, and quite complicated

- Data latency on the order of several minutes (or more, depending on configuration)

- As the DynamoDB schema evolves, the Redshift table schema and the Lambda ETL will need tweaks

- Redshift pricing is by the hour for each node in the cluster, even if you're not using them or there's little data on them

- Redshift struggles with highly concurrent queries

TLDR:

- Consider this option if you don't have many active users on your dashboard, don't have strict real-time requirements, and/or already have a heavy investment in Redshift

- This approach uses Lambdas and Kinesis Firehose to ETL your data and store it in Redshift

- You'll get good query performance, especially for complex queries over very large data

- Data latency won't be great, though, and Redshift struggles with high concurrency

- The ETL logic will probably break down as your data changes and will need fixing

- Administering a production Redshift cluster is a huge undertaking

For more information on this approach, check out the AWS documentation for loading data from DynamoDB into Redshift.

S3 + Athena

Next we'll consider Athena, Amazon's service for running SQL on data directly in S3. It is primarily targeted at infrequent or exploratory queries that can tolerate longer runtimes, and it saves on cost by not having the data copied into a full-fledged database or cache like Redshift, Redis, etc.

Much like the previous section, we'll use Kinesis Firehose here, but this time it will shuttle DynamoDB table data into S3. The setup is the same as above, with options for buffer interval and buffer size. Here it is extremely important to enable compression on the S3 files, since that leads to both faster and cheaper queries: Athena charges you based on the data scanned. Then, as in the previous section, you can register a Lambda function that receives DynamoDB Streams events and calls the Kinesis Firehose API as changes are made to your DynamoDB table. In this way you'll have a bucket in S3 storing a copy of your DynamoDB data across several compressed files.
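To make the compression point concrete, here is a sketch that uses Node's built-in `zlib` to gzip a batch of newline-delimited JSON records (GZIP is one of the compression formats Firehose can apply to S3 objects) and estimates what scanning them would cost. The records are synthetic, and `PRICE_PER_TB_USD` is an assumption based on Athena's per-TB-scanned pricing model; check current pricing for your region. Real compression ratios depend heavily on your data.

```javascript
const zlib = require("zlib");

// Assumption: roughly $5 per TB scanned (decimal TB); check current Athena pricing for your region.
const PRICE_PER_TB_USD = 5;

// Estimated cost of scanning `bytes` bytes, ignoring per-query minimums and rounding.
function scanCostUsd(bytes) {
  return (bytes / 1e12) * PRICE_PER_TB_USD;
}

// Synthetic DynamoDB-derived rows serialized as newline-delimited JSON.
const lines = [];
for (let i = 0; i < 10_000; i++) {
  lines.push(JSON.stringify({
    order_id: `o-${i}`,
    platform: i % 2 === 0 ? "web" : "ios",
    amount_cents: 99 + (i % 100) * 100,
  }));
}
const raw = Buffer.from(lines.join("\n") + "\n");
const gzipped = zlib.gzipSync(raw);

const ratio = raw.length / gzipped.length;
console.log(`raw ${raw.length} B, gzip ${gzipped.length} B, ${ratio.toFixed(1)}x smaller`);

// Cost scales linearly with bytes scanned, e.g. a full scan over 100,000 such files:
const files = 100_000;
console.log(`raw $${scanCostUsd(raw.length * files).toFixed(2)} vs gzip $${scanCostUsd(gzipped.length * files).toFixed(2)}`);
```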

Note: You can additionally save on cost and improve performance by using a more optimized storage format and partitioning your data.
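Partitioning is what lets Athena skip most of the bucket. A common layout uses Hive-style `key=value` prefixes, for example one prefix per day, so that a query filtering on that key only reads the matching prefixes. The sketch below builds such keys and shows which objects a date-range filter would touch. The `orders` prefix, the `dt` partition name, and the helper names are hypothetical, and Athena also needs the partitions registered (or partition projection configured) before it can prune on them.

```javascript
// Build a Hive-style S3 key such as "orders/dt=2024-05-01/part-0001.json.gz".
function partitionedKey(prefix, eventTimeMs, fileName) {
  const dt = new Date(eventTimeMs).toISOString().slice(0, 10); // YYYY-MM-DD in UTC
  return `${prefix}/dt=${dt}/${fileName}`;
}

// Keep only keys whose dt partition falls within [fromDt, toDt] (inclusive).
// ISO dates compare correctly as strings, so no date parsing is needed.
function prunePartitions(keys, fromDt, toDt) {
  return keys.filter((key) => {
    const match = key.match(/\/dt=(\d{4}-\d{2}-\d{2})\//);
    return match !== null && match[1] >= fromDt && match[1] <= toDt;
  });
}

const DAY_MS = 24 * 60 * 60 * 1000;
const start = Date.UTC(2024, 4, 1); // 2024-05-01T00:00:00Z (months are 0-based)
const keys = [0, 1, 2, 3].map((d) => partitionedKey("orders", start + d * DAY_MS, "part-0001.json.gz"));

// A query filtering on dt BETWEEN '2024-05-02' AND '2024-05-03' only needs these objects:
console.log(prunePartitions(keys, "2024-05-02", "2024-05-03"));
```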

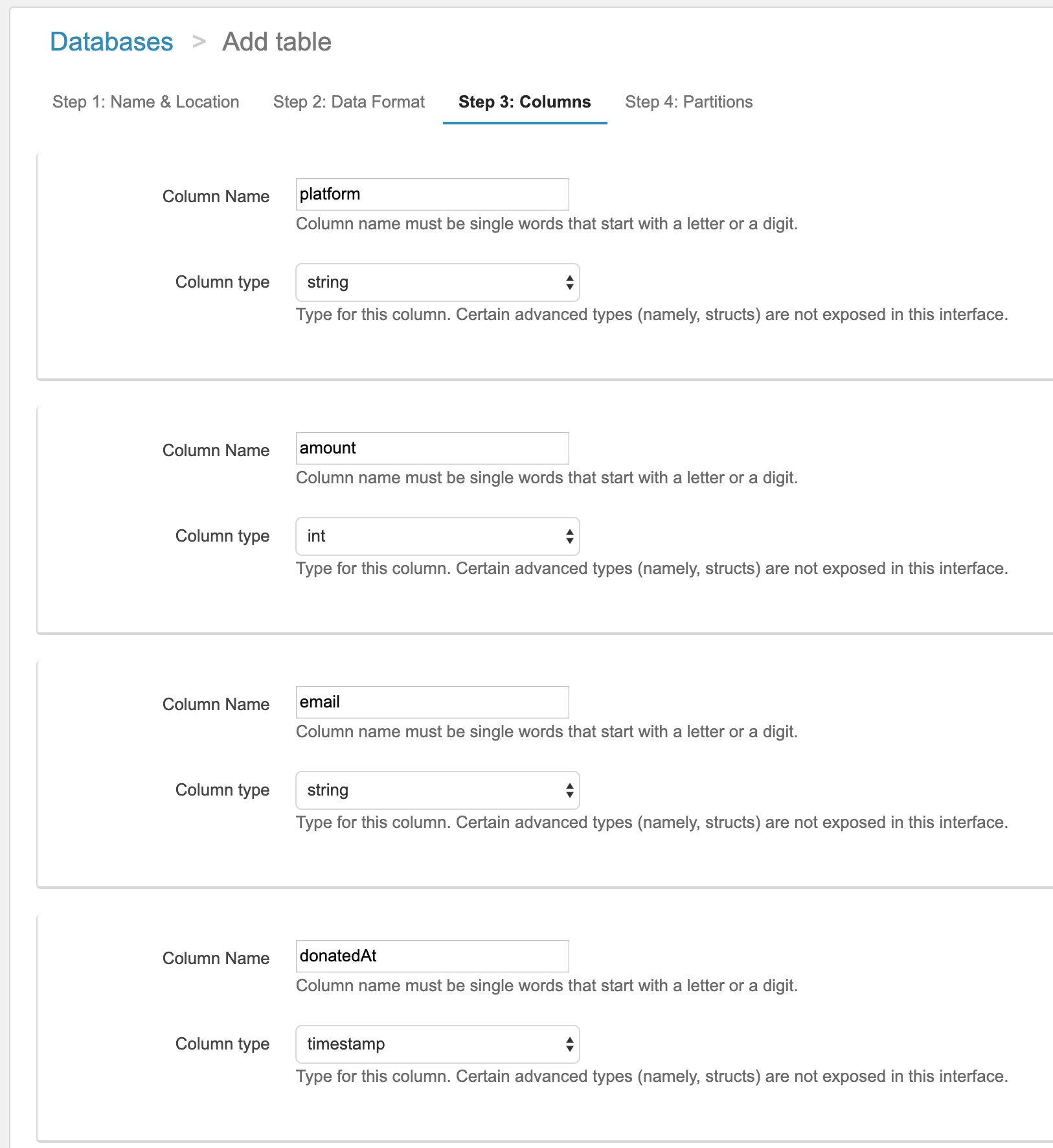

Next, in the Athena dashboard you can create a new table and define the columns there, either through the UI or using Hive DDL statements. Like Hive, Athena has a schema-on-read system, meaning the schema is applied to each record as it is read in (vs. being applied when the file is written).
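Schema-on-read can be illustrated with a small sketch: the raw lines stay untouched in storage, and a declared schema is applied only when the data is read. In this sketch a value that cannot be cast to its declared type comes back as `null`; that is one way a reader can behave when DynamoDB items drift from the declared schema, and it is a generic illustration rather than the exact behavior of Athena or any particular SerDe. The schema and records are hypothetical.

```javascript
// Declared schema, applied only at read time (hypothetical columns).
const schema = { order_id: "string", platform: "string", amount: "double" };

// Cast a raw JSON value to the declared type, or return null if it does not fit.
function cast(value, type) {
  if (value === undefined || value === null) return null;
  if (type === "string") return typeof value === "string" ? value : null;
  if (type === "double") return typeof value === "number" ? value : null;
  return null;
}

// Apply the schema to each stored line at read time; the stored bytes never change.
function readWithSchema(lines, schema) {
  return lines.map((line) => {
    const raw = JSON.parse(line);
    const row = {};
    for (const [col, type] of Object.entries(schema)) row[col] = cast(raw[col], type);
    return row;
  });
}

const stored = [
  '{"order_id":"o-1","platform":"ios","amount":19.99}',
  '{"order_id":"o-2","platform":"web","amount":"12.50"}', // amount written as a string after a schema change
];
console.log(readWithSchema(stored, schema));
```

Because nothing is validated at write time, a type change in DynamoDB only surfaces when someone queries the data.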

Once your schema is defined, you can submit queries through the console, through the JDBC driver, or through BI tool integrations like Tableau and Amazon QuickSight. Each of these queries will lead to your files in S3 being read, the schema being applied to all of the records, and the query result being computed across the records. Since the data isn't optimized in a database, there are no indexes, and reading each record is more expensive because the physical layout isn't optimized. This means that your query will run, but it will take on the order of minutes to potentially hours.

Pros:

- Works at large scales

- Low data storage costs since everything is in S3

- No always-on compute engine; pay per query

Cons:

- Very high query latency, on the order of minutes to hours; can't be used with interactive dashboards

- Need to explicitly define your data format and layout before you can begin

- Mixed types in the S3 files caused by DynamoDB schema changes will lead to Athena ignoring records that don't match the schema you specified

- Unless you put in the time/effort to compress your data, ETL your data into Parquet/ORC format, and partition your data files in S3, queries will effectively always scan your whole dataset, which will be very slow and very expensive

TLDR:

- Consider this approach if cost and data size are the driving factors in your design, and only if you can tolerate very long and unpredictable run times (minutes to hours)

- This approach uses Lambda + Kinesis Firehose to ETL your data and store it in S3

- Best for infrequent queries on lots of data and DynamoDB reporting / dashboards that don't need to be interactive

Take a look at this AWS blog for more details on how to analyze data in S3 using Athena.

Rockset

The last option we'll consider in this post is Rockset, a serverless search and analytics service. Rockset's data engine has strong dynamic typing and smart schemas, which infer field types as well as how they change over time. These properties make working with NoSQL data, like that from DynamoDB, straightforward. Rockset also integrates with both custom dashboards and BI tools.
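To see why mixed types matter, consider a sketch that scans a set of items and records every type observed for each field, which is roughly the information a dynamically typed engine has to track instead of relying on a fixed schema. This is a generic illustration of the idea, not Rockset's implementation; the items are hypothetical and assumed to be already unmarshalled.

```javascript
// Name the type of a JSON value, distinguishing arrays, nulls, and objects.
function typeOf(value) {
  if (value === null) return "null";
  if (Array.isArray(value)) return "array";
  return typeof value === "object" ? "object" : typeof value;
}

// Collect, for each top-level field, the sorted list of types seen across all items.
function observedTypes(items) {
  const types = new Map();
  for (const item of items) {
    for (const [field, value] of Object.entries(item)) {
      if (!types.has(field)) types.set(field, new Set());
      types.get(field).add(typeOf(value));
    }
  }
  return Object.fromEntries([...types].map(([field, set]) => [field, [...set].sort()]));
}

// Hypothetical DynamoDB items whose shape drifted over time.
const items = [
  { order_id: "o-1", amount: 19.99 },
  { order_id: "o-2", amount: "12.50", tags: ["promo"] },
  { order_id: 3, amount: 7 },
];
console.log(observedTypes(items));
```

Every field that reports more than one type is a field a fixed-schema table (as in the Redshift and Athena approaches) would need an explicit rule for.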

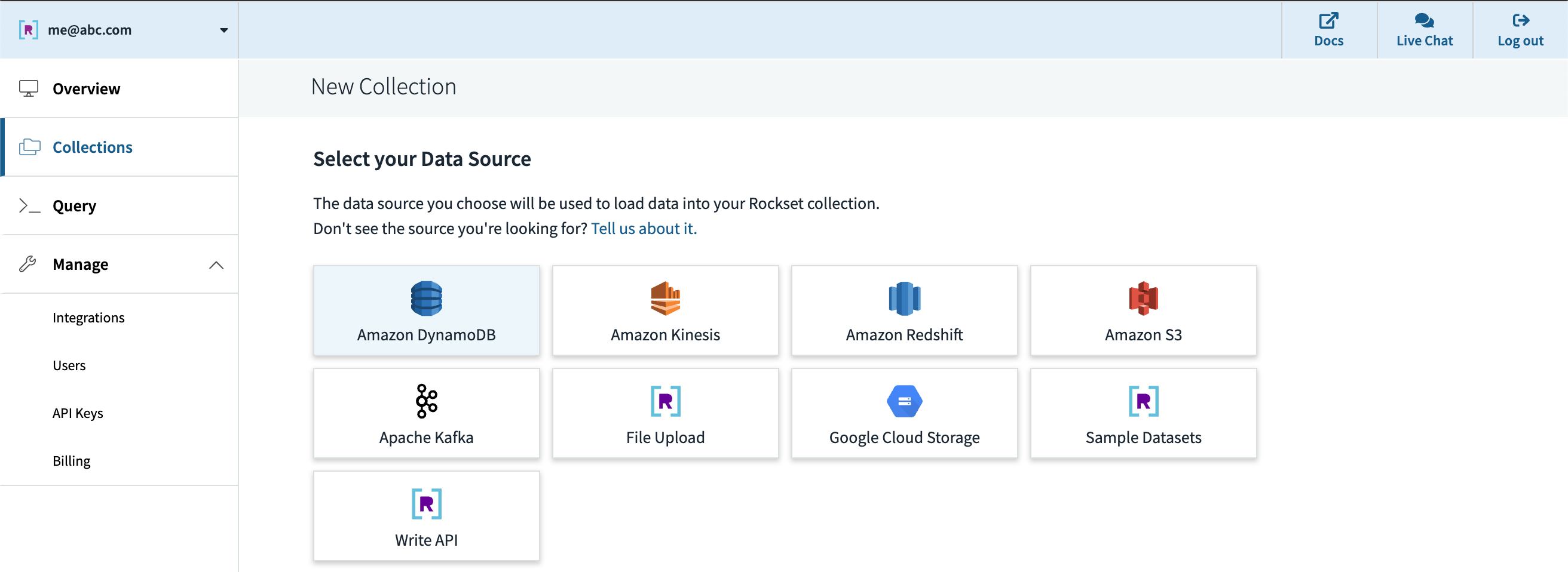

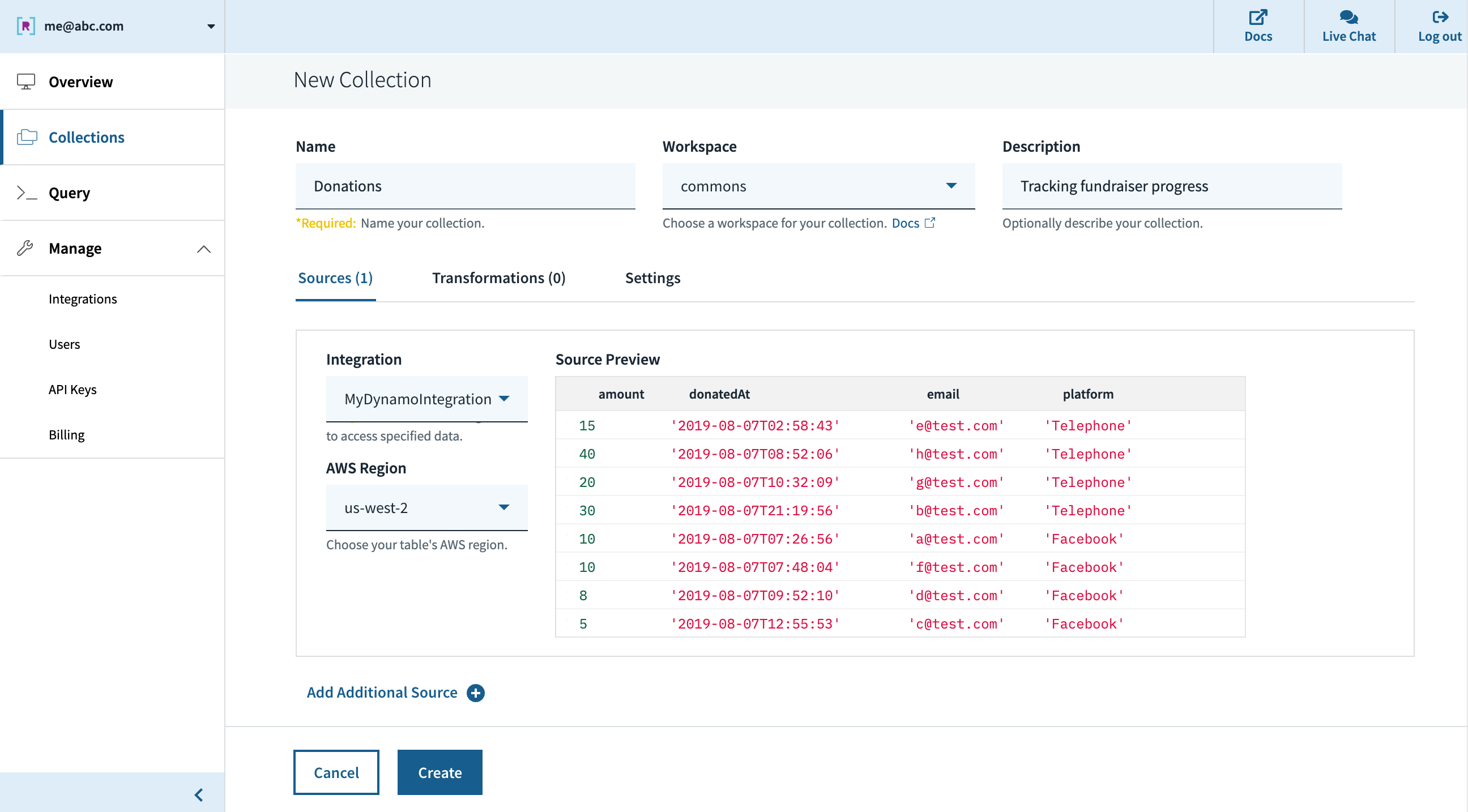

After creating an account at www.rockset.com, we'll use the console to set up our first integration, a set of credentials used to access our data. Since we're using DynamoDB as our data source, we'll provide Rockset with an AWS access key and secret key pair that has properly scoped permissions to read from the DynamoDB table we want. Next we'll create a collection (the equivalent of a DynamoDB/SQL table) and specify that it should pull data from our DynamoDB table and authenticate using the integration we just created. The preview window in the console will pull a few records from the DynamoDB table and display them to make sure everything worked correctly, and then we're good to press “Create”.

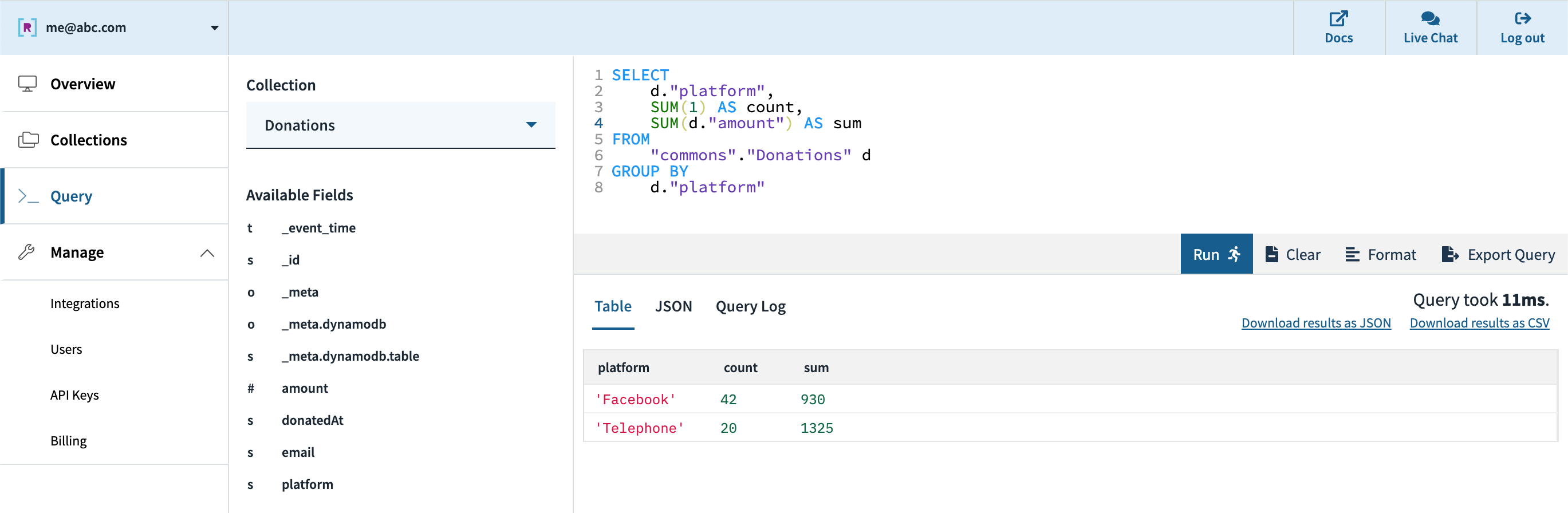

Soon after, we can see in the console that the collection is created and data is streaming in from DynamoDB. We can use the console's query editor to experiment with and tune the SQL queries that will be used in our live dashboard. Since Rockset has its own query compiler/execution engine, there is first-class support for arrays, objects, and nested data structures.

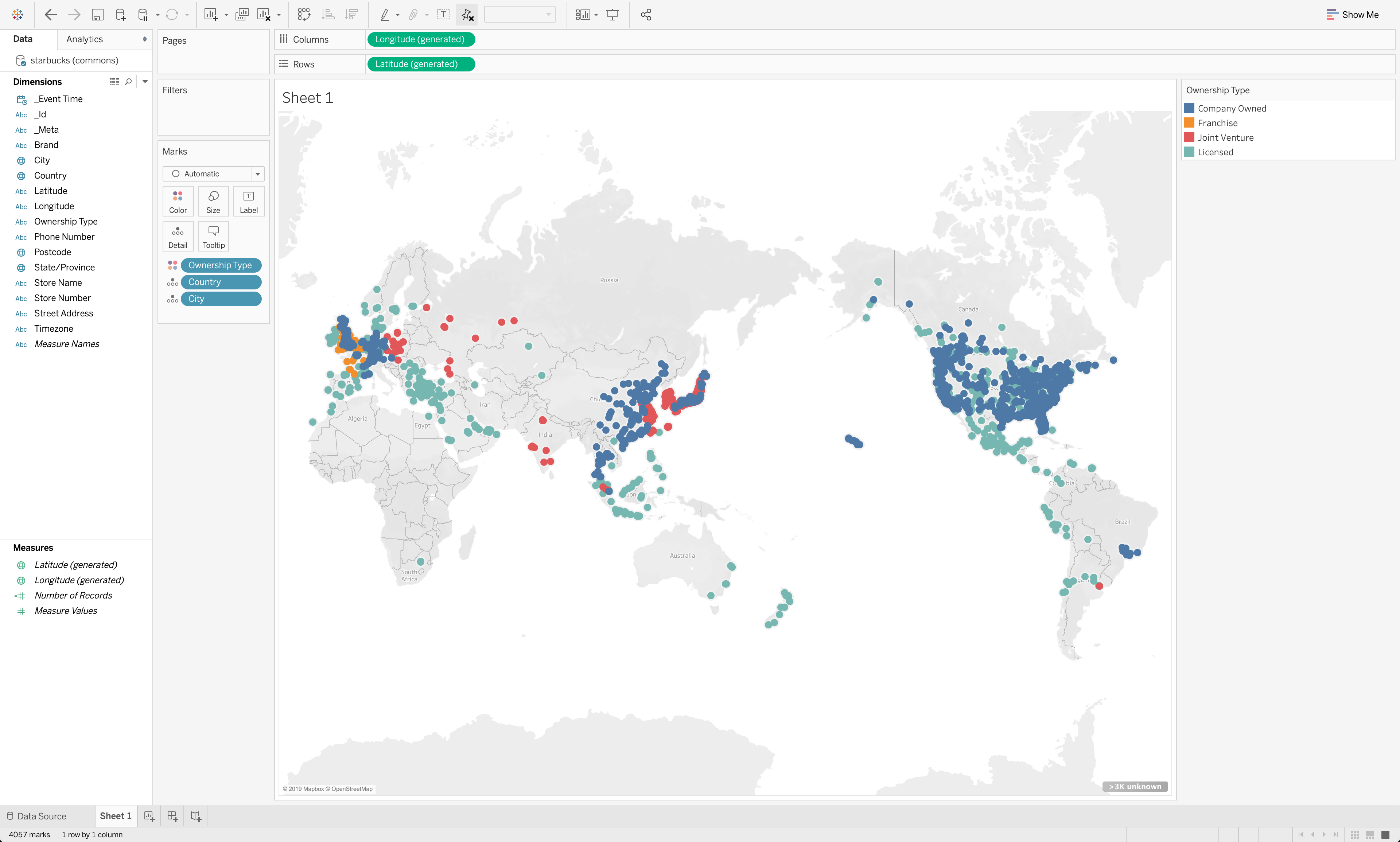

Next, we can create an API key in the console, which the dashboard will use to authenticate to Rockset's servers. Our options for connecting to a BI tool like Tableau, Redash, etc. are the JDBC driver that Rockset provides or the native Rockset integration for the tools that have one.

We have now successfully gone from DynamoDB data to a fast, interactive dashboard on Tableau, or another BI tool of choice. Rockset's cloud-native architecture allows it to scale query performance and concurrency dynamically as needed, enabling fast queries even on large datasets with complex, nested data with inconsistent types.

Pros:

- Serverless: fast setup, no-code DynamoDB integration, and zero configuration/management required

- Designed for low query latency and high concurrency out of the box

- Integrates with DynamoDB (and other sources) in real time for low data latency, with no pipeline to maintain

- Strong dynamic typing and smart schemas handle mixed types and work well with NoSQL systems like DynamoDB

- Integrates with a variety of BI tools (Tableau, Redash, Grafana, Superset, etc.) and custom dashboards (through client SDKs, if needed)

Cons:

- Optimized for the active dataset, not archival data, with a sweet spot of up to 10s of TBs

- Not a transactional database

- It's an external service

TLDR:

- Consider this approach if you have strict requirements on having the latest data in your real-time dashboards, need to support large numbers of users, or want to avoid managing complex data pipelines

- Built-in integrations to quickly go from DynamoDB (and many other sources) to live dashboards

- Can handle mixed types, syncing an existing table, and lots of fast queries

- Best for data sets from a few GBs to 10s of TBs

For more resources on how to integrate Rockset with DynamoDB, check out this blog post that walks through a more complex example.

Conclusion

In this post, we considered a few approaches to enabling standard BI tools, like Tableau, Redash, Grafana, and Superset, for real-time dashboards on DynamoDB, highlighting the pros and cons of each. With this background, you should be able to evaluate which option is right for your use case, depending on your specific requirements for query and data latency, concurrency, and ease of use, as you implement operational reporting and analytics in your organization.
