This is the second post in a series by Rockset’s CTO Dhruba Borthakur on Designing the Next Generation of Data Systems for Real-Time Analytics. We’ll be publishing more posts in the series in the near future, so subscribe to our blog so you don’t miss them!

Posts published so far in the series:

- Why Mutability Is Essential for Real-Time Data Analytics

- Handling Out-of-Order Data in Real-Time Analytics Applications

- Handling Bursty Traffic in Real-Time Analytics Applications

- SQL and Complex Queries Are Needed for Real-Time Analytics

- Why Real-Time Analytics Requires Both the Flexibility of NoSQL and Strict Schemas of SQL Systems

Companies everywhere have upgraded, or are currently upgrading, to a modern data stack, deploying a cloud native event-streaming platform to capture a variety of real-time data sources.

So why are their analytics still crawling through in batches instead of running in real time?

It’s probably because their analytics database lacks the features necessary to deliver data-driven decisions accurately in real time. Mutability is a crucial capability, but close behind, and intertwined with it, is the ability to handle out-of-order data.

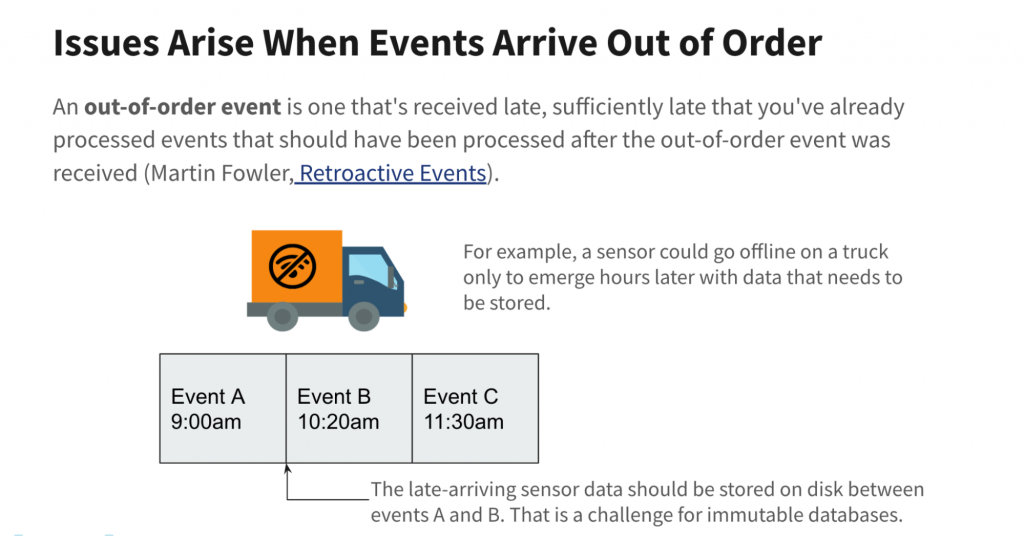

Out-of-order data are time-stamped events that, for a variety of reasons, arrive after the initial data stream has been ingested by the receiving database or data warehouse.

In this blog post, I’ll explain why mutability is a must-have for handling out-of-order data, the three reasons why out-of-order data has become such an issue today, and how a modern mutable real-time analytics database handles out-of-order events efficiently, accurately and reliably.

The Challenge of Out-of-Order Data

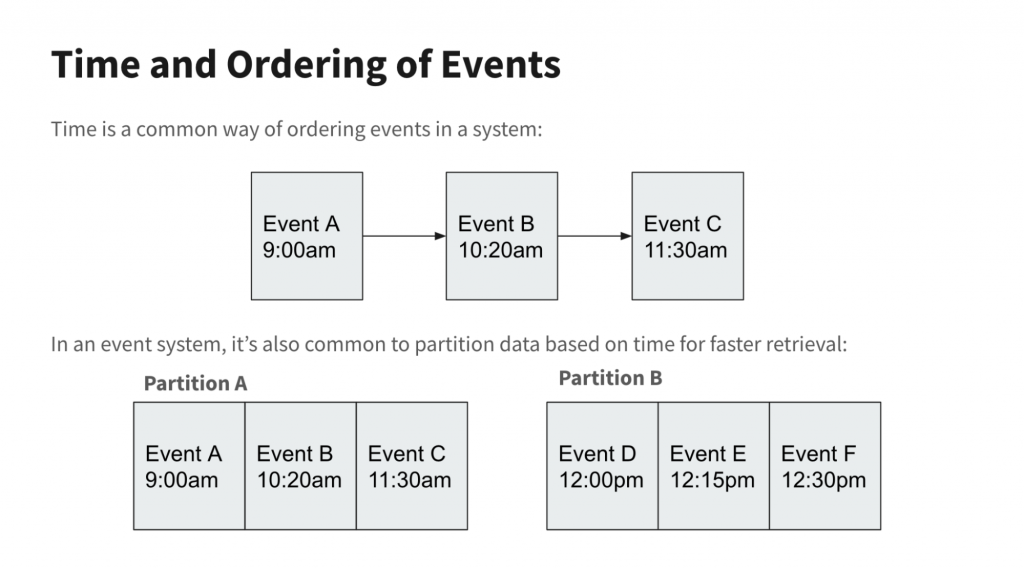

Streaming data has been around since the early 1990s under many names: event streaming, event processing, event stream processing (ESP), etc. Machine sensor readings, stock prices and other time-ordered data are gathered and transmitted to databases or data warehouses, which physically store them in time-series order for fast retrieval or analysis. In other words, events that are close in time are written to adjacent disk clusters or partitions.

Ever since there has been streaming data, there has been out-of-order data. The sensor transmitting the real-time location of a delivery truck could go offline because of a dead battery or because the truck traveled out of wireless network range. A web clickstream could be interrupted if the website or event publisher crashes or has internet problems. That clickstream data would need to be re-sent or backfilled, potentially after the ingesting database has already stored the events that came after it.
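
To make this concrete, here is a minimal sketch, assuming events are bucketed into hourly partitions by their event timestamp (the hourly granularity and the function names are hypothetical). An event counts as late when it maps to a partition older than the newest one the database has already received, which is what happens when the delivery truck comes back online and uploads its buffered readings.

```python
# Assumption for illustration: one storage partition per hour of event time.
PARTITION_SECONDS = 3600


def partition_key(event_ts: int) -> int:
    """Map an event timestamp (in seconds) to its time-ordered partition."""
    return event_ts // PARTITION_SECONDS


def find_late_events(arrivals):
    """Return the events that arrive after a newer partition has already been written.

    `arrivals` is a list of (event_ts, payload) tuples in arrival order.
    """
    newest_seen = None
    late = []
    for event_ts, payload in arrivals:
        key = partition_key(event_ts)
        if newest_seen is not None and key < newest_seen:
            late.append((event_ts, payload))
        if newest_seen is None or key > newest_seen:
            newest_seen = key
    return late


# A reading taken 100 seconds in only arrives after readings from hours 1 and 2.
arrivals = [(0, "depot"), (3700, "route"), (7300, "customer"), (100, "buffered reading")]
print(find_late_events(arrivals))  # [(100, 'buffered reading')]
```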

Transmitting out-of-order data is not the issue. Most streaming platforms can resend data until they receive an acknowledgment from the receiving database that it has successfully written the data. This is called at-least-once semantics.
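
A minimal simulation of at-least-once delivery, using a hypothetical in-memory receiver rather than any real streaming platform’s API. When an acknowledgment is lost, the sender cannot tell whether the write happened, so it resends, and the database may end up holding the same event twice. Delivery is guaranteed, but deciding what to do with repeated or late data is left to the database.

```python
class Receiver:
    """Toy receiving database that stores every write and normally acknowledges it.

    `lost_acks` holds event ids whose first acknowledgment is lost in transit
    (a simulated failure, not a real API).
    """

    def __init__(self, lost_acks=()):
        self.stored = []
        self.lost_acks = set(lost_acks)

    def write(self, event_id, payload) -> bool:
        self.stored.append((event_id, payload))  # the write itself always succeeds
        if event_id in self.lost_acks:
            self.lost_acks.discard(event_id)     # lose this event's ack only once
            return False
        return True


def send_at_least_once(receiver, events, max_attempts=5):
    """Resend each event until the receiver acknowledges it."""
    for event_id, payload in events:
        for _ in range(max_attempts):
            if receiver.write(event_id, payload):
                break
        else:
            raise RuntimeError(f"no acknowledgment for event {event_id}")


db = Receiver(lost_acks={2})
send_at_least_once(db, [(1, "a"), (2, "b"), (3, "c")])
print(db.stored)  # [(1, 'a'), (2, 'b'), (2, 'b'), (3, 'c')]
```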

The issue is how the downstream database stores updates and late-arriving data. Traditional transactional databases, such as Oracle or MySQL, were designed with the assumption that data would need to be continuously updated to maintain accuracy. As a result, operational databases are almost always fully mutable so that individual records can be easily updated at any time.

Immutability and Updates: Costly and Risky for Data Accuracy

By contrast, most data warehouses, both on-premises and in the cloud, are designed with immutable data in mind, storing data to disk permanently as it arrives. All updates are appended rather than written over existing data records.

This has some benefits. It prevents accidental deletions, for one. For analytics, the key boon of immutability is that it allows data warehouses to accelerate queries by caching data in fast RAM or SSDs without worrying that the source data on disk has changed and become out of date.

(Martin Fowler: Retroactive Event)

However, immutable data warehouses are challenged by out-of-order time-series data, since no updates or changes can be inserted into the original data records.

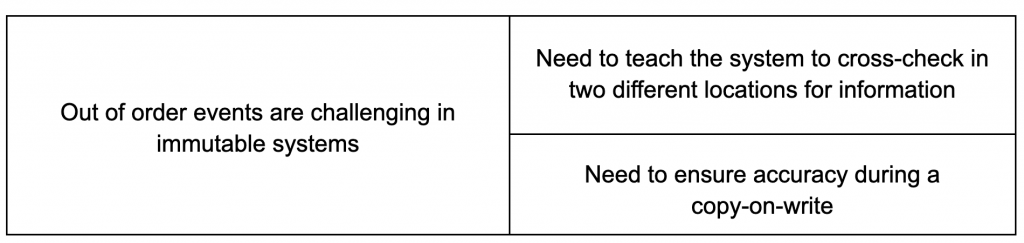

In response, immutable data warehouse makers were forced to create workarounds. One method used by Snowflake, Apache Druid and others is called copy-on-write. When events arrive late, the data warehouse writes the new data and rewrites already-written adjacent data in order to store everything correctly to disk in the right time order.

Another poor solution for dealing with updates in an immutable data system is to keep the original data in Partition A (see diagram above) and write late-arriving data to a different location, Partition B. The application, and not the data system, has to keep track of where all linked-but-scattered records are stored, as well as any resulting dependencies. This practice is called referential integrity, and it ensures that the relationships between the scattered rows of data are created and used as defined. Because the database does not provide referential integrity constraints, the onus is on the application developer(s) to understand and abide by these data dependencies.

Both workarounds have significant problems. Copy-on-write requires a significant amount of processing power and time: tolerable when updates are few, but intolerably costly and slow as the amount of out-of-order data rises. For example, if 1,000 records are stored within an immutable blob and an update needs to be applied to a single record within that blob, the system must read all 1,000 records into a buffer, update the record, write all 1,000 records back to a new blob on disk, and delete the old blob. This is hugely inefficient, expensive and time-wasting. It can rule out real-time analytics on data streams that frequently receive data out of order.
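
The four steps in the 1,000-record example can be sketched as a toy in-memory store. The class and method names are hypothetical and do not reflect how Snowflake, Apache Druid or any other system actually lays out data; the counter simply makes the write amplification visible.

```python
from typing import Dict, List, Tuple

Record = Tuple[int, str]  # (record_id, value)


class ImmutableBlobStore:
    """Toy store in which a blob, once written, is never modified in place."""

    def __init__(self) -> None:
        self.blobs: Dict[int, Tuple[Record, ...]] = {}
        self.next_blob_id = 0
        self.records_written = 0  # running count of records written to "disk"

    def write_blob(self, records: List[Record]) -> int:
        blob_id = self.next_blob_id
        self.next_blob_id += 1
        self.blobs[blob_id] = tuple(records)
        self.records_written += len(records)
        return blob_id

    def copy_on_write_update(self, blob_id: int, record_id: int, new_value: str) -> int:
        """Update one record by rewriting the whole blob; returns the new blob id."""
        buffer = list(self.blobs[blob_id])       # 1. read every record into a buffer
        for i, (rid, _) in enumerate(buffer):    # 2. update the single target record
            if rid == record_id:
                buffer[i] = (rid, new_value)
        new_blob_id = self.write_blob(buffer)    # 3. write all records to a new blob
        del self.blobs[blob_id]                  # 4. delete the old blob
        return new_blob_id


store = ImmutableBlobStore()
blob = store.write_blob([(i, "v1") for i in range(1000)])
blob = store.copy_on_write_update(blob, record_id=42, new_value="v2")
print(store.records_written)  # 2000: one changed record cost 1,000 record writes
```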

Using referential integrity to keep track of scattered data has its own issues. Queries must be double-checked to ensure they are pulling data from the right locations, or they run the risk of data errors. Just imagine the overhead and confusion for an application developer when accessing the latest version of a record. The developer must write code that inspects multiple partitions, de-duplicates and merges the contents of the same record from multiple partitions before using it in the application. This significantly hinders developer productivity. Attempting any query optimizations such as data caching also becomes much more complicated and riskier when updates to the same record are scattered in multiple places on disk.
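
Here is a minimal sketch of the merge logic the developer ends up owning, assuming each row carries a version timestamp so the newest copy can be identified (an assumption for illustration; real schemas vary). Every query that needs current data has to do something like this, across every partition that might hold a copy.

```python
from typing import Dict, Iterable, List, Tuple

# Each row: (record_id, version_ts, fields). Names are hypothetical.
Row = Tuple[str, int, dict]


def latest_versions(partitions: Iterable[List[Row]]) -> Dict[str, dict]:
    """Read every partition, de-duplicate by record_id and keep the newest version."""
    newest: Dict[str, Tuple[int, dict]] = {}
    for partition in partitions:
        for record_id, version_ts, fields in partition:
            current = newest.get(record_id)
            if current is None or version_ts > current[0]:
                newest[record_id] = (version_ts, fields)
    return {record_id: fields for record_id, (_, fields) in newest.items()}


partition_a = [("truck-7", 100, {"location": "depot"}), ("truck-9", 100, {"location": "yard"})]
partition_b = [("truck-7", 250, {"location": "site 3"})]  # late-arriving update
print(latest_versions([partition_a, partition_b]))
# {'truck-7': {'location': 'site 3'}, 'truck-9': {'location': 'yard'}}
```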

The Problem with Immutability Today

All of the above problems were manageable when out-of-order updates were few and speed was less important. However, the environment has become much more demanding for three reasons:

1. Explosion in Streaming Data

Before Kafka, Spark and Flink, streaming came in two flavors: Business Event Processing (BEP) and Complex Event Processing (CEP). BEP provided simple monitoring and instant triggers for SOA-based systems management and early algorithmic stock trading. CEP was slower but deeper, combining disparate data streams to answer more holistic questions.

BEP and CEP shared three characteristics:

- They were offered by large enterprise software vendors.

- They were on-premises.

- They were unaffordable for most companies.

Then a new generation of event-streaming platforms emerged. Many (Kafka, Spark and Flink) were open source. Most were cloud native (Amazon Kinesis, Google Cloud Dataflow) or were commercially adapted for the cloud (Kafka ⇒ Confluent, Spark ⇒ Databricks). And they were cheaper and easier to start using.

This democratized stream processing and enabled many more companies to begin tapping into their pent-up supplies of real-time data. Companies that were previously locked out of BEP and CEP began to harvest website user clickstreams, IoT sensor data, cybersecurity and fraud data, and more.

Companies also began to embrace change data capture (CDC) in order to stream updates from operational databases (think Oracle, MongoDB or Amazon DynamoDB) into their data warehouses. Companies also started appending additional related time-stamped data to existing datasets, a process called data enrichment. Both CDC and data enrichment boosted the accuracy and reach of their analytics.

Because all of this data is time-stamped, it can potentially arrive out of order. This influx of out-of-order events puts heavy pressure on immutable data warehouses, whose workarounds were not built with this volume in mind.
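
One way a mutable store can absorb out-of-order CDC events is sketched below, under the assumption (made for illustration, not stated above) that each change event carries a per-source version number such as a log position. A late, older change is recognized as stale and skipped instead of overwriting newer data; the same check also makes at-least-once duplicates harmless. The event shape and function name are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class ChangeEvent:
    op: str                     # "insert", "update" or "delete"
    key: str                    # primary key in the source database
    version: int                # assumed to increase with every change at the source
    row: Optional[dict] = None  # new row image; None for deletes


def apply_change(table: Dict[str, dict], versions: Dict[str, int], event: ChangeEvent) -> bool:
    """Apply one CDC event in place. Returns False if the event was stale and skipped."""
    if versions.get(event.key, -1) >= event.version:
        return False  # an equal or newer change for this key was already applied
    # Remember the version even for deletes, so an older late event cannot resurrect the row.
    versions[event.key] = event.version
    if event.op == "delete":
        table.pop(event.key, None)
    else:
        table[event.key] = dict(event.row or {})
    return True


table: Dict[str, dict] = {}
versions: Dict[str, int] = {}
cdc_events = [
    ChangeEvent("insert", "order-1", 1, {"status": "placed"}),
    ChangeEvent("update", "order-1", 3, {"status": "shipped"}),
    ChangeEvent("update", "order-1", 2, {"status": "packed"}),  # arrives late
]
results = [apply_change(table, versions, e) for e in cdc_events]
print(results, table)  # [True, True, False] {'order-1': {'status': 'shipped'}}
```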

2. Evolution from Batch to Real-Time Analytics

When companies first deployed cloud native stream publishing platforms along with the rest of the modern data stack, they were fine if the data was ingested in batches and if query results took many minutes.

However, as my colleague Shruti Bhat points out, the world is going real time. To avoid disruption by cutting-edge competitors, companies are embracing e-commerce customer personalization, interactive data exploration, automated logistics and fleet management, and anomaly detection to prevent cybercrime and financial fraud.

These real-time and near-real-time use cases dramatically narrow the time windows for both data freshness and query speed while amping up the risk of data errors. Supporting them requires an analytics database capable of ingesting both raw data streams and out-of-order data within a few seconds and returning accurate results in less than a second.

The workarounds employed by immutable data warehouses either ingest out-of-order data too slowly (copy-on-write) or in a complicated way (referential integrity) that slows query speeds and creates significant data accuracy risk. Besides creating delays that rule out real-time analytics, these workarounds also add extra cost.

3. Real-Time Analytics Is Mission Critical

Today’s disruptors are not only data-driven but are using real-time analytics to put competitors in the rear-view mirror. This could be an e-commerce website that boosts sales through personalized offers and discounts, an online e-sports platform that keeps players engaged through instant, data-optimized player matches, or a construction logistics service that ensures concrete and other materials arrive to builders on time.

The flip side, of course, is that complex real-time analytics is now absolutely vital to a company’s success. Data must be fresh, correct and up to date so that queries are error-free. As incoming data streams spike, ingesting that data must not slow down your ongoing queries. And databases must promote, not detract from, the productivity of your developers. That is a tall order, and it is especially difficult when your immutable database uses clumsy hacks to ingest out-of-order data.

How Mutable Analytics Databases Solve Out-of-Order Data

The solution is simple and elegant: a mutable cloud native real-time analytics database. Late-arriving events are simply written to the portions of the database they would have been written to had they arrived on time in the first place.

In the case of Rockset, a real-time analytics database that I helped create, individual fields in a data record can be natively updated, overwritten or deleted. There is no need for expensive and slow copy-on-writes, à la Apache Druid, or kludgy segregated dynamic partitions.
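
For contrast with copy-on-write, here is a toy mutable store, assuming each field of each document lives under its own (document id, field name) key. This key layout is an illustrative assumption, not a description of Rockset’s internal format. Updating, overwriting or deleting one field touches one key, and a late-arriving event is simply another write that queries ordering by event time will see in the right place.

```python
class FieldLevelStore:
    """Toy mutable store keyed by (doc_id, field_name). Hypothetical, for illustration."""

    def __init__(self) -> None:
        self.kv = {}
        self.keys_written = 0  # running count of key writes

    def put_document(self, doc_id, doc: dict) -> None:
        for field_name, value in doc.items():
            self.kv[(doc_id, field_name)] = value
            self.keys_written += 1

    def update_field(self, doc_id, field_name, value) -> None:
        self.kv[(doc_id, field_name)] = value  # overwrite a single key in place
        self.keys_written += 1

    def delete_field(self, doc_id, field_name) -> None:
        self.kv.pop((doc_id, field_name), None)

    def get_document(self, doc_id) -> dict:
        return {f: v for (d, f), v in self.kv.items() if d == doc_id}

    def ids_by_event_time(self) -> list:
        """Document ids ordered by their "ts" field, regardless of arrival order."""
        stamped = [(v, d) for (d, f), v in self.kv.items() if f == "ts"]
        return [d for _, d in sorted(stamped, key=lambda pair: pair[0])]


store = FieldLevelStore()
for i in range(1000):
    store.put_document(i, {"ts": i * 10, "status": "ok"})
store.update_field(42, "status", "corrected")             # one key written, not 1,000 records
store.put_document("late-1", {"ts": 5, "status": "ok"})   # late event with an older timestamp
print(store.keys_written)             # 2003
print(store.ids_by_event_time()[:3])  # [0, 'late-1', 1]
```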

Rockset goes beyond other mutable real-time databases, though. Rockset not only continuously ingests data, but can also “rollup” the data as it is being generated. By using SQL to aggregate data as it is being ingested, Rockset greatly reduces the amount of data stored (5-150x) as well as the amount of compute needed for queries (boosting performance 30-100x). This frees users from managing slow, expensive ETL pipelines for their streaming data.
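
An ingest-time rollup can be pictured as a `GROUP BY` that is maintained incrementally while data streams in. The sketch below is a toy in Python, not Rockset’s rollup syntax; the one-minute bucket, the `device` field and the count/sum metrics are assumptions. Because the rollup rows are mutable, a late event just increments the bucket it belongs to.

```python
from collections import defaultdict

BUCKET_SECONDS = 60  # assumption: roll events up into one-minute buckets


class Rollup:
    """Incrementally maintains the equivalent of
    SELECT FLOOR(ts / 60) AS bucket, device, COUNT(*), SUM(value) ... GROUP BY bucket, device
    """

    def __init__(self) -> None:
        self.rows = defaultdict(lambda: [0, 0.0])  # (bucket, device) -> [count, sum]

    def ingest(self, ts: int, device: str, value: float) -> None:
        row = self.rows[(ts // BUCKET_SECONDS, device)]
        row[0] += 1
        row[1] += value


rollup = Rollup()
events = [(0, "a", 1.0), (10, "a", 2.0), (65, "a", 3.0),
          (70, "b", 4.0), (20, "a", 5.0), (75, "b", 1.0)]  # (20, "a") arrives late
for ts, device, value in events:
    rollup.ingest(ts, device, value)
print(dict(rollup.rows))
# {(0, 'a'): [3, 8.0], (1, 'a'): [1, 3.0], (1, 'b'): [2, 5.0]}
```

Six raw events collapse into three stored rows here; the actual savings depend entirely on how many events share a bucket.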

We also combined the underlying RocksDB storage engine with our Aggregator-Tailer-Leaf (ALT) architecture so that our indexes are instantly and fully mutable. That ensures all data, even freshly ingested out-of-order data, is available for accurate, ultra-fast (sub-second) queries.

Rockset’s ALT architecture also separates storage from compute. This ensures smooth scalability during bursts of data traffic, including backfills and other out-of-order data, and prevents query performance from being impacted.

Finally, RocksDB’s compaction algorithms automatically merge old and updated data records. This ensures that queries access the latest, correct version of the data. It also prevents data bloat that would hamper storage efficiency and query speeds.
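
A simplified picture of what compaction does, assuming two sorted runs where the newer run wins and deletes are recorded as tombstones. This is a toy merge, not RocksDB’s compaction code, which works on SST files across levels and only drops tombstones once no older data could be hidden beneath them.

```python
TOMBSTONE = object()  # marker written by a delete


def compact(*runs):
    """Merge key -> value runs, ordered oldest to newest, into one sorted run.

    The newest value for each key wins. Tombstoned keys are dropped, which is only
    safe here because this toy assumes no older runs exist below these ones.
    """
    merged = {}
    for run in runs:  # later runs are newer, so they overwrite older values
        merged.update(run)
    return {
        key: value
        for key, value in sorted(merged.items(), key=lambda kv: kv[0])
        if value is not TOMBSTONE
    }


older = {"order-1": "placed", "order-2": "placed", "order-3": "placed"}
newer = {"order-2": "shipped", "order-3": TOMBSTONE}  # an update and a delete
print(compact(older, newer))  # {'order-1': 'placed', 'order-2': 'shipped'}
```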

In other words, a mutable real-time analytics database designed like Rockset provides high raw data ingestion speeds and the native ability to update and backfill records with out-of-order data, all without creating additional cost, data error risk, or work for developers and data engineers. This supports the mission-critical real-time analytics required by today’s data-driven disruptors.

In future blog posts, I’ll describe other must-have features of real-time analytics databases, such as handling bursty data traffic and complex queries. Or you can skip ahead and watch my recent talk at the Hive on Designing the Next Generation of Data Systems for Real-Time Analytics, available below.

Embedded content: https://www.youtube.com/watch?v=NOuxW_SXj5M

Dhruba Borthakur is CTO and co-founder of Rockset and is responsible for the company’s technical direction. He was an engineer on the database team at Facebook, where he was the founding engineer of the RocksDB data store. Earlier, at Yahoo, he was one of the founding engineers of the Hadoop Distributed File System. He was also a contributor to the open source Apache HBase project.

Rockset is the real-time analytics database in the cloud for modern data teams. Get faster analytics on fresher data, at lower costs, by exploiting indexing over brute-force scanning.